Cloud computing

From Citizendium - Reading time: 27 min

Cloud computing refers to accessing computing resources that are typically owned and operated by a third-party provider on a consolidated basis in one, or usually more, data center locations. Cloud services feature on-demand provisioning and pay-as-you-go resource billing, with minimal up-front investment. They aim to deliver cost-effective computing power over the Internet, including over virtual private networks (VPN). From the perspective of a reasonable cloud proponent, cloud services minimize the capital expense of computing, tie operating expense to actual use, and reduce staffing costs.

NIST researchers Peter Mell and Tim Grance presented public information about cloud economics. [1]

- There are 11.8 million servers in data centers

- Servers are used at only 15% of their capacity

- $800 billion is spent yearly on purchasing and maintaining enterprise software

- Eighty percent of enterprise software expenditure is on installation and maintenance of software

- Data centers typically consume up to 100 times more energy per square foot than a typical office building

- Average power consumption per server quadrupled from 2001 to 2006.

- Number of servers doubled from 2001 to 2006

Power consumption, for the servers themselves and cooling, is an increasingly critical issue; Google engineers have commented they may be spending more for power than for hardware.

It has been suggested that cloud computing accelerated in a recession, because using it requires little capital and it lowers risk, according to Tim Minahan, head of marketing for Software as a Service vendor Ariba. "CIOs consider agility more important than cost -- namely the ability to scale up, whether it's processing power or workflow functionality, and scale that back down should they need to. Should the economy go into a 'W' or double dip, they don't want to get caught flat-footed with unnecessary infrastructure, resources or costs on their balance sheets."[2]

NIST researchers Peter Mell and Tim Grance observe that this approach to computing is really the third generation of "cloud" models:

- Networking (TCP/IP abstraction and "agnosticism" to the transmission system)

- Documents (World Wide Web and HTML WWW data abstraction)

- The emerging cloud abstracts infrastructure complexities of servers, applications, data, and heterogeneous platforms

They address security concerns by describing cloud computing as "Clouds are massively complex systems can be reduced to simple primitives that are replicated thousands of times and common functional units," and describe cloud security as a "tractable problem."[3]

Business positioning

Speaking to a financially oriented community at the Goldman Sachs Technology Conference, a senior vice-president of virtualization and cloud platforms for major software vendor VMware said that 90% of all functions performed by a dedicated traditional server from Dell (Nasdaq: DELL) or IBM (NYSE: IBM) could easily move to a virtual server. "There are workflows that might require specialized hardware devices and so on, which could be more tricky," he said. "But the regular commercial workloads that they are talking about could pretty easily fit in a much smaller platform on a pure technology basis."[4] In the presentation, the emphasis was on replacement rather than new construction; clouds were described as ways to save money. Mell and Grance cited this opportunity both in avoiding routine server underutilization and in avoiding the need, with in-house servers, to provision for peak loads.

Consumers of cloud computing services purchase computing capacity on-demand and are not generally concerned with the underlying technologies used to achieve the increase in server capability. These techniques, however, always include virtualization of processing capability.

There is legitimate criticism that the information technology industry, whose marketing people are always eager to use a new buzzword, calls so many things "clouds" that the term has no meaning. Oracle CEO Larry Ellison somewhat dramatically argued that cloud computing is driven by buzzwords and fashion rather than by actual technical innovation; indeed, many calmer individuals would agree that it is more a change in business models than in technology.

Clouds are water vapor. My objection to cloud computing is... cloud computing is not only the future of computing, it is the present and the entire past of computing is all cloud....Google is now cloud computing. Everyone is cloud computing. My objection to cloud computing is that everyone looks around and "yeah, I've always been doing computing"... everything is cloud. My objection is the absurdity - no, it's not that I don't like the idea - but what is - this nonsense.[5]

Perhaps the greatest risk with clouds, especially if they are defined loosely as no more than marketing buzzwords, is that cloud service buyers will no longer exercise due diligence in information assurance, including both confidentiality and continuity of operations. Cloud service providers, even though they may have multiple data centers, have repeatedly shown that they do not use their own capability to move processing dynamically to a different data center when the infrastructure in one center fails. The Cloud Security Alliance has been formed to address some of these issues.[6]

When mass storage is outsourced to a cloud, the customer must ensure that someone is still responsible for the customary standard of data protection and backup. In a well-publicized outage, hundreds of thousands of T-Mobile cellular phone users lost their address books, calendars, photo albums, and other personal data in the Sidekick application. T-Mobile had outsourced the Sidekick application to a Microsoft subsidiary called Danger, which, in turn, had outsourced its mass storage to Hitachi. When Hitachi converted the data to new storage servers, it failed to make backups before committing to the new servers.[7]

A much clearer definition of clouds is needed to avoid Larry Ellison's complaint:

The interesting thing about cloud computing is that we've redefined cloud computing to include everything that we already do. I can't think of anything that isn't cloud computing with all of these announcements. The computer industry is the only industry that is more fashion-driven than women's fashion. Maybe I'm an idiot, but I have no idea what anyone is talking about. What is it? It's complete gibberish. It's insane. When is this idiocy going to stop?[5]

Rationally defined, a cloud has many more attributes than a computer attached to a network. Rather than being a new technology, it is a new business model of providing services at potentially lower cost.

Defining clouds

Cloud services differ in their

- Delivery model: their user interface

- Deployment model: responsibility and sharing of infrastructure

- Delivery assurance:

Again, it is first a business model.[8] It exploits the fact that most servers are underutilized and, if the servers are geographically distributed among multiple data centers, it can also exploit time-of-day variations as well as the potential for improved failover. Joe Weinman of AT&T coined a "backronym" of "CLOUD" that best applies to public clouds and, to some extent, private clouds.[9]

- Common, Location-independent, Online Utility on Demand

- Common: implies multi-tenancy, not single or isolated tenancy

- Utility: implies pay-for-use pricing

- on Demand: implies infinite, immediate, invisible scalability

Many commercial cloud offerings, however, are really no more than traditional managed hosting being marketed as clouds. While much of the trade press is legitimately concerned with security in the cloud, the issue of disaster recovery may be even more important. Several major cloud providers have had significant outages that proved to be localized to a single physical data center; they had not exploited the inherent failover capabilities of some of the technologies that enable clouds. Being a "cloud" excuses neither the consumer nor the provider from, at the very least, the data protection and failover requirements of managed hosting.

Cloud computing has similarities to a number of network-enabled computing methods, but also some unique properties of its own. The core point is that users, whether end users or programmers, request resources without knowing the location of those resources, and are not obliged to maintain the resources. The resource may be anything from a virtual machine instance for low-level programming, on which the customer writes an application, to Software as a Service (SaaS), where the application is predefined and the customer can parameterize but not program. Services such as Google and Hotmail are free SaaS, while some well-defined business applications, such as customer relationship management as provided by Salesforce.com, are among the most successful paid SaaS applications. PayPal and eBay arguably are SaaS models, paid, at the low end, on a transaction basis.

"What goes on in the cloud manages multiple infrastructures across multiple organizations and consists of one or more frameworks overlaid on top of the infrastructures tying them together. Frameworks provide mechanisms for:

- self-healing

- self monitoring

- resource registration and discovery

- service level agreement definitions

- automatic reconfiguration

The cloud is a virtualization of resources that maintains and manages itself. There are of course people resources to keep hardware, operating systems and networking in proper order. But from the perspective of a user or application developer only the cloud is referenced."[10]

It is by no means a new concept in computing. Bruce Schneier reminds us that it has distinct similarities in the processing model, although not the communications model, with the timesharing services of the 1960s, which were made obsolete by personal computers. "Any IT outsourcing -- network infrastructure, security monitoring, remote hosting -- is a form of cloud computing."

The old timesharing model arose because computers were expensive and hard to maintain. Modern computers and networks are drastically cheaper, but they're still hard to maintain. As networks have become faster, it is again easier to have someone else do the hard work. Computing has become more of a utility; users are more concerned with results than technical details, so the tech fades into the background.[11]

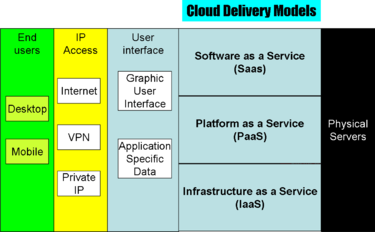

Delivery models

There is no single industry-accepted definition.[12] While the details of the service vary, some common features of sizing apply:

- Separation of application code from physical resources.

- Ability to use external assets to handle peak loads (not having to engineer for highest possible load levels).

- Not having to purchase assets for one-time or infrequent intensive computing tasks.

These definitions are converging, however, on three or four major models, which the Cloud Security Alliance groups into the "SPI" model, based on work at the National Institute of Standards and Technology:[13]

- Software as a Service (SaaS): the user interface is a human interface, usually a GUI, or a structured data exchange using XML or industry-specific file formats

- Platform as a Service (PaaS): customers program the cloud, but at a relatively high level, such as Web Services

- Infrastructure as a Service (IaaS): customers program the cloud, at a low level, either by a guest copy of an operating system on which they program, or, in some cases, at the low level of emulated hardware (e.g., block structured disk drivers)

- Data as a Service (DaaS): The cloud is more a repository than an active programming environment; often considered a subset of IaaS or sometimes of PaaS

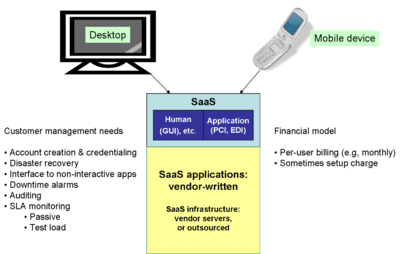

Software as a Service

Software as a Service responsibilities

Software as a Service appears to the user either as a Web-based graphical user interface, or as a data exchange format specific to an application, especially on a mobile device. The most general type of application-specific data would be application-specific transactions, such as Health Level 7, which use a general data representation such as XML. Alternatively, the file format might only be understandable by the vendor's application.
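As a minimal sketch of an application-specific transaction carried in a general representation such as XML, the following Python fragment builds and parses a small message using only the standard library. The `order`, `sku`, and `quantity` element names are hypothetical, chosen purely for illustration; they are not taken from Health Level 7 or from any vendor's format.

```python
import xml.etree.ElementTree as ET


def build_order_message(order_id: str, sku: str, quantity: int) -> bytes:
    """Serialize a hypothetical order transaction as an XML document."""
    root = ET.Element("order", attrib={"id": order_id})
    ET.SubElement(root, "sku").text = sku
    ET.SubElement(root, "quantity").text = str(quantity)
    return ET.tostring(root, encoding="utf-8")


def parse_order_message(payload: bytes) -> dict:
    """Recover the transaction fields from the XML payload."""
    root = ET.fromstring(payload)
    return {
        "id": root.get("id"),
        "sku": root.findtext("sku"),
        "quantity": int(root.findtext("quantity")),
    }


message = build_order_message("A-1001", "WIDGET-7", 3)
order = parse_order_message(message)
print(order)
```

Because both sides agree only on the XML vocabulary, the consumer of such a service needs no knowledge of how or where the provider processes the transaction.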

Free email and search engines are SaaS, with limited customization, and sometimes premium offerings with more functionality. Some, including basic webmail services such as Hotmail and Gmail, use an advertising-supported revenue model. Free customers have no leverage with their cloud providers; there is very little recourse in the event of failures. Some vendors, however, have paid versions of these services, with higher levels of support and customization.

The second type of SaaS has a subscription-based revenue model, as with Salesforce.com. These customers have more leverage, provided they write their service contracts correctly. With both types, the customer depends on the provider for disaster recovery capability, since the applications are available only on the vendor's servers.

Quite a few business services are really SaaS, such as PayPal and eBay; they are hybrid cloud services that facilitate transactions between users. These services may mix subscription-based basic fees with a transaction-based revenue model. The creation of various credit card and check payment features is an example of how SaaS can be customized without programming. Especially in areas where there are significant compliance requirements, such as the Payment Card Industry for credit cards, SaaS providers such as Savvis also assume a professional services revenue model.[14] IaaS provider Rackspace added professional services offerings.[15]

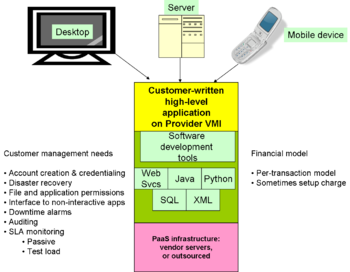

Platform as a Service

PaaS is like SaaS in that low-level programming is not necessary, but it does require customer programming at a middleware level, such as Web services, a Java Virtual Machine, or XML or SQL databases. Offerings range from a set of APIs specific to one business function (e.g., StrikeIron and Xignite) to a wider range of APIs, as in Google Maps, the U.S. Postal Service, Bloomberg, and even conventional credit card processing services. Billing for PaaS is most commonly on a per-transaction model.

Salesforce.com now offers an application-building system under this label, called Force.com, in addition to its original SaaS products.[16] Google App Engine and other products allow use of higher-level abstractions such as Java and Python virtual machines in combination with web services. Mashup-specific products include Yahoo Pipes and Dapper.net.

A Java Virtual Machine is middleware: it sits above the operating systems or virtual processors of IaaS but below SaaS applications. Java is used for both non-interactive and interactive applications. Nikita Ivanov describes two basic approaches, which are not mutually exclusive, although in a given product one or the other tends to dominate.[17] The first is much like the way a traditional data center is organized, where the developers have little control over infrastructure. "The second approach is something new and evolving as we speak. It aims to dissolve the boundaries between a local workstation and the cloud (internal or external) by providing relative location transparency so that developers write their code, build and run it in exact the same way whether it is done on a local workstation or on the cloud thousands miles away or on both."

Google App Engine uses the second model, encouraging testing and development on a local workstation, and then uploading the production version to Google's cloud. Its first version used a Python virtual machine, but it has since been extended to Java, both with web tools.

Elastic Grid adds value to a Java virtual machine platform with what they call a "Cloud Management Fabric" and a "Cloud Virtualization Layer".[18] The latter provides Elastic Grid customers with an overlay onto IaaS providers such as Amazon EC2 and Rackspace. The former allows the customer to "dynamically instantiate, monitor & manage application components. The deployment provides context on service requirements, dependencies, associations and operational parameters."

Messaging

Messaging services are inherently distributed, and now extend to services beyond email, such as short message services (texting) and pager services. When sent in a corporate context, for example, instant messages may seem less formal to the user than email, but still need to be archived for possible litigation or law enforcement discovery.

Outsourced services that are commercially available meet different sets of customer needs for the same basic messaging functions. Archiving is a basic function, but a public company under Sarbanes-Oxley Act regulation will need more rigorous archiving than a small business.[19]

Especially with a distributed workforce, it may be wise to have a messaging service that will continue to operate if a customer-owned server fails, or even if the data center is put out of service by a disaster. Various commercial services provide backup email services with standard protocols such as Simple Mail Transfer Protocol, Post Office Protocol, and Internet Message Access Protocol; proprietary servers with mixed proprietary and standard protocols such as Microsoft Exchange; and end user access such as webmail, Blackberry or other personal devices, and text-to-speech. This may be done purely for disaster recovery, but it can also be done for legal reasons of compliance or discovery, and for cheaper storage of old data.

Data specialization

Some PaaS vendors specialize in handling specific kinds of data. StrikeIron, for example, might be considered a computer-to-computer mashup, integrating external data bases with enterprise data and combining them within common business functions such as call centers, customer relationship management and eCommerce. It accepts U.S. Postal Service data.[20] Xignite is more specific to the financial industry, retrieving data such as stock quotes, financial reference data, currency exchange rates, etc.

Audio and video information are normally accessed with applications rather than at the filesystem level, so PaaS is an appropriate interface level.

Cloud interconnection

- See also: #hybrid clouds

If the interchange among clouds consists of well-defined data passed among hosts, it is far less difficult than actually passing virtual machines among clouds, which may not always be necessary. If, however, virtual machines must pass, a Gartner Group analyst, Cameron Haight, says that capability is really several years away, with issues such as how "one cloud provider can consume the metadata associated with a virtual machine from another vendor," the metadata describing the service requirements of that virtual machine. There is a controversy over the business approach taken by VMware, whose management tools will support only its own hypervisor, as opposed to the more general approach of Citrix, Microsoft[21] and Red Hat. Red Hat Linux uses an open source project called DeltaSource.org to facilitate private-public cloud integration.[22]

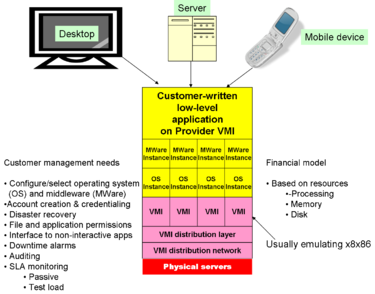

Infrastructure as a Service

In Infrastructure as a Service, the provider offers the customer the ability to provision processing, storage, networks, and other fundamental computing resources where the consumer is able to deploy and run arbitrary software, which can include operating systems and applications. The consumer does not manage or control the underlying cloud infrastructure but has control over operating systems, storage, deployed applications, and possibly limited control of select networking components (e.g., host firewalls).[23]

One variable is whether the cloud is built on open source technology such as the Xen hypervisor, or uses proprietary VMware. Amazon, for example, is principally open source with a do-it-yourself assumption about the customer, while AT&T and Savvis use VMware and plan to provide professional services.

Vendors often call each instance of an operating system a virtual appliance. Each of the four stacks, below the common application in the graphic above, is a virtual appliance. Virtual appliances, regardless of their physical location, can be grouped into virtual data centers with management consoles, logging, and other facilities characteristic of data center operations. Such detailed facilities are the responsibility of SaaS and PaaS vendors.

The charging model is based on the use of resources, such as processing time, memory, and mass storage. There are, however, different ways to offer these resources. Some IaaS vendors are targeting SaaS and PaaS cloud providers, allowing them to dynamically configure systems of physical servers, as required by their customer demand, but not requiring the customer to construct a data center.
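The resource-based charging model can be illustrated with a short Python sketch. The `Rates` fields and every price below are hypothetical values invented for the example; they do not reflect any provider's actual tariff, and real providers typically meter additional items such as network transfer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Hypothetical unit prices in dollars; real providers publish their own."""
    per_cpu_hour: float
    per_gb_ram_hour: float
    per_gb_storage_month: float


def monthly_charge(rates: Rates, cpu_hours: float, gb_ram_hours: float,
                   gb_storage: float) -> float:
    """Charge for one month as the sum of metered use times unit price."""
    return (rates.per_cpu_hour * cpu_hours
            + rates.per_gb_ram_hour * gb_ram_hours
            + rates.per_gb_storage_month * gb_storage)


# Illustrative prices and usage only
rates = Rates(per_cpu_hour=0.10, per_gb_ram_hour=0.02, per_gb_storage_month=0.15)
bill = monthly_charge(rates, cpu_hours=200, gb_ram_hours=400, gb_storage=50)
print(f"Monthly charge: ${bill:.2f}")
```

The point of the sketch is that the bill tracks metered consumption: a month with no processing incurs only the storage term, which is what ties operating expense to actual use.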

With a start of operations in March 2006, Amazon Elastic Compute Cloud (Amazon EC2) was the first to market. It allows virtual appliances to be built from choices among operating systems, data bases, web servers, etc. Amazon offers a variety of preconfigured stacks called Amazon Machine Images (AMI).[24]

In early 2009, a customer could organize 1500 virtual appliances into a data center. A number of PaaS and SaaS clouds are known to run over it. Some of the AMIs are customer-contributed and the security of their configurations is not guaranteed by Amazon.

Competition began about a year after EC2's launch, principally from existing managed hosting providers:[25]

- Rackspace’s Mosso in February 2008

- Terremark’s cloud in June 2008

- AT&T’s cloud in August 2008

- An IBM supported service on EC2 in February 2009

- Savvis in February 2009

Some cloud computing services specialize in compute-intensive supercomputer applications using highly distributed parallel processing. [26] Terremark differentiates its approach from grid computing, which is intended for compute-intensive but non-interactive services. "Grid is different. In that, you're paying for minutes (of computing power) and you're on a shared infrastructure in which your grid job is typically not designed to execute at any given instance in time. Grid is great for scientific applications, supercomputing applications, where you need a lot of power but don't need a split-second response time."[27]

While not necessarily marketed as clouds, certain infrastructure services, such as Domain Name System, are available as on-demand services. Messaging services that may be outsourced are broader than email, especially when there are regulatory requirements for archiving, audit or security. IaaS applies when the programming interface is low-level, while access at the application programming level is PaaS.

Data as a Service

Data as a Service is a subset of IaaS, offering virtual disk storage, sometimes with options such as encryption, file sharing, incremental backup, etc. It is not a distributed data base; an SQL or XML interface is at a higher level of abstraction, so that cloud data bases would be PaaS.[28] Another way to differentiate DaaS and PaaS: filesystem calls are DaaS; database manager calls are PaaS. DaaS extends to consumer services for the small and home office (SOHO).
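The distinction between filesystem calls and database manager calls can be sketched in Python. This is only an analogy run on local stand-ins: a temporary directory plays the role of virtual disk storage, and SQLite plays the role of a cloud data base. The file names and the `notes` table are hypothetical.

```python
import os
import sqlite3
import tempfile

with tempfile.TemporaryDirectory() as workdir:
    # DaaS-style access: plain filesystem calls against (virtual) disk storage.
    # The storage layer sees only bytes; it knows nothing about their meaning.
    path = os.path.join(workdir, "notes.txt")
    with open(path, "w", encoding="utf-8") as handle:
        handle.write("quarterly report draft\n")
    with open(path, encoding="utf-8") as handle:
        file_contents = handle.read()

    # PaaS-style access: calls to a database manager, which understands
    # tables, columns, and queries at a higher level of abstraction.
    db = sqlite3.connect(os.path.join(workdir, "notes.db"))
    db.execute("CREATE TABLE notes (id INTEGER PRIMARY KEY, body TEXT)")
    db.execute("INSERT INTO notes (body) VALUES (?)", ("quarterly report draft",))
    db.commit()
    rows = db.execute("SELECT body FROM notes").fetchall()
    db.close()

print(repr(file_contents))
print(rows)
```

In the first half the customer decides how to interpret the stored bytes; in the second, the service itself interprets structure and queries, which is why the article places cloud data bases at the PaaS level.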

Underlying architecture

Internally, cloud computing almost always uses several kinds of virtualization. The application software will run on virtual machines, which can migrate among colocated or networked physical processors.

The main kinds of virtualization software are:

- Desktop platforms (IaaS level)

- Server platforms (IaaS level)

- Cloud management functions

- Higher-level software development and display (Web Services, Java and Python virtual machines)

Most of the products expect certain hardware support, which is present in most processors: extensions to the processor instruction set that make virtual context switching more efficient.

Interoperability

For reasons of commercial reliability, however, the resources will rarely be consumer-grade PCs, either from a machine resource or form factor viewpoint. Disks, for example, are apt to use Redundant Array of Inexpensive Disks technology for fault protection. Blade servers, or at least rack-mounted server chassis, will be used to reduce data center floor space and, often, the complexity of cooling and power distribution. These details are hidden from the application user.

The cloud provider can place infrastructure in geographic areas that have reduced costs of land, electricity, and cooling. While Google's developers may be in Silicon Valley, the data centers are in rural areas further north, in cooler climates.

For full generality, Citrix CTO Simon Crosby said customers shouldn't have "to ask the cloud vendor whose virtual infrastructure platform was used to build the cloud." Citrix supports Xen, the main nonproprietary hypervisor used in cloud computing; Amazon and Rackspace use Xen. Network World does suggest that Citrix, with its smaller market share, must support other hypervisors, including those of VMware and Microsoft, while the reverse is not true.[21]

From the provider perspective, some of the advantages of offering cloud, rather than more conventional services, include:

- Sharing of peak-load capacity among a large pool of users, improving overall utilization.

- Separation of infrastructure maintenance duties from domain-specific application development.

- Ability to scale to meet changing user demands quickly, usually within minutes
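The first advantage, sharing peak-load capacity, can be made concrete with a small Python sketch. The tenant names and demand figures are invented for illustration, and the model assumes demand is measured in whole server-equivalents per time slot.

```python
from typing import Dict, List


def dedicated_capacity(loads: Dict[str, List[int]]) -> int:
    """Servers needed if every tenant provisions for its own peak."""
    return sum(max(load) for load in loads.values())


def pooled_capacity(loads: Dict[str, List[int]]) -> int:
    """Servers needed if all tenants share one pool sized for the combined peak."""
    return max(sum(slot) for slot in zip(*loads.values()))


# Hypothetical demand per time slot; each tenant peaks at a different time
loads = {
    "tenant_a": [10, 2, 2, 3],
    "tenant_b": [2, 10, 3, 2],
    "tenant_c": [3, 2, 10, 2],
}

print("dedicated:", dedicated_capacity(loads))
print("pooled:   ", pooled_capacity(loads))
```

With these figures the pool needs half the servers that separate provisioning would, because the peaks do not coincide. If all tenants peaked in the same slot, the two numbers would be equal and pooling would bring no capacity saving.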

Virtual versus physical security

A Gartner Group report said virtual servers are less secure than the physical servers they replace, although this situation should improve after 2012.[29]

Some of the known issues of security include:

- Virtual machine images, on a given physical server, are rarely encrypted. A miscreant's VMI, co-resident on the same machine, may be able to copy or modify sensitive information such as passwords or session keys.

- While routinely encrypting VM files is wise, it is only a start. One countermeasure is to keep the authentication and credentialing in a separate server (e.g., Kerberos or RADIUS), so a loose VM image still has no access to the most sensitive information. Still, unless explicitly designed for the role, network-based security devices are blind to communications between virtual machines within a single [physical] host.

- The hypervisor becomes the most sensitive access security target in the data center and should, like a kernel, be given minimal function but be closely monitored.

- "workloads of different trust levels are consolidated onto single hosts without sufficient separation; virtualization technologies do not provide adequate control of administrative access to the hypervisor and virtual machine layer"

- "when physical servers are combined into a single machine, there is risk that [virtual] system administrators and users could gain access to data or functions for which they have not been granted access"

Dark clouds

While conceptual clouds move virtual machine instances among multiple data centers, the reality is that a number of cloud providers, even those that have multiple data centers, have had significant outages due to single data center malfunctions and a failure to move the VMIs to another data center. Rackspace, for example, is paying several million dollars to customers after a power failure in its Dallas, Texas facility.[30]

Clouds have been affected by other failures. A distributed denial of service attack on Neustar, a specialized infrastructure provider of Domain Name System (DNS) services, was reported, by a Neustar competitor, to have "knocked offline" Amazon and Salesforce.[31] Without independent log analysis, this is hard to assess. Just as a cloud should not be dependent on a single data center, even a single data center of any appreciable size should not depend on a single DNS provider. SaaS providers should have internal DNS for their own and customer machines, and would typically need only infrequent access to external DNS, although IaaS and PaaS would have more DNS dependency. With two independent DNS providers, degradation but not outage could be expected on the data center side. Customers and users with only one DNS provider might be unable to find the cloud. This is another example where the truth is hard to find.
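The argument for two independent DNS providers amounts to a simple failover rule, sketched below in Python. To keep the example self-contained and free of network access, the providers are modeled as plain functions; `provider_a_down` and `provider_b_up` are hypothetical stand-ins, and the address comes from the range reserved for documentation.

```python
from typing import Callable, Sequence

Resolver = Callable[[str], str]  # maps a hostname to an IP address string


class ResolutionError(Exception):
    """Raised when every configured DNS provider has failed."""


def resolve_with_failover(hostname: str, resolvers: Sequence[Resolver]) -> str:
    """Try each provider in order and return the first successful answer."""
    for resolver in resolvers:
        try:
            return resolver(hostname)
        except Exception:
            continue  # degraded, not down: fall through to the next provider
    raise ResolutionError(
        f"all {len(resolvers)} DNS providers failed for {hostname}")


def provider_a_down(hostname: str) -> str:
    """Stand-in for a provider that is under attack and not answering."""
    raise TimeoutError("no response")


def provider_b_up(hostname: str) -> str:
    """Stand-in for a healthy second provider."""
    return "192.0.2.10"


print(resolve_with_failover("app.example.com", [provider_a_down, provider_b_up]))
```

With both providers configured, the lookup succeeds after one failed attempt, which corresponds to degradation rather than outage. With only `provider_a_down` in the list, the function raises `ResolutionError`, the situation of a user with a single DNS provider.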

Disappearing clouds

Coghead, a Platform-as-a-Service (PaaS) vendor, had financial problems, and had its technology, but not its customers or cloud, bought by application software vendor SAP. In February 2009, Coghead customers were told they had until April to move their data. Unfortunately, while they could retrieve XML versions of the data, the applications they had written to process it were dependent on Coghead technology and could not move.[32]

At least in the Coghead case, the users had XML available. Had their vendor been IaaS, it would have been much simpler to move, because their lower-level programming would be more portable to, for example, any other machine or cloud that supported LAMP, Microsoft Server stacks, or other utilities. SaaS users, by contrast, might or might not be able to get their data in a machine-readable format at all, and would have a longer road to rebuild applications.

Deployment models

Deployment models, at their most basic, deal with whether a physical server can be shared among multiple customers. This can be challenging when the cloud provider actually uses another cloud for infrastructure; the first provider must make verifiable contract arrangements with the second to ensure server separation.

Guidance from the Cloud Security Alliance suggests analyzing the delivery in terms of:[33]

- "The types of assets, resources, and information being managed

- Who manages them and how

- Which controls are selected and how they are integrated

- Compliance issues

For example a LAMP stack deployed on Amazon’s AWS EC2 would be classified as a public, off-premise, third-party managed-IaaS solution; even if the instances and applications/data contained within them were managed by the consumer or a third party. A custom application stack serving multiple business units; deployed on Eucalyptus under a corporation’s control, management, and ownership; could be described as a private, on-premise, self-managed SaaS solution."

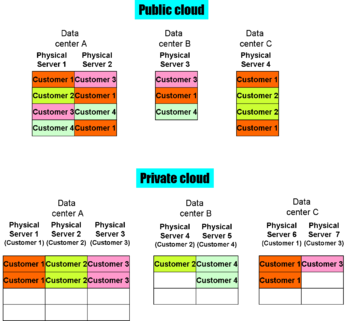

Public cloud

In public clouds, access is over the Internet, although it may use per-session host-to-host security and sometimes on-demand VPNs. The physical servers are shared among multiple customers of the cloud.

Public clouds offer the greatest economy of scale. They are more efficient from the service provider standpoint, giving better resource utilization and potentially more opportunities for disaster recovery, but they present additional security concerns. Sometimes, there may simply be a regulatory requirement, such as HIPAA or PCI, which requires the application data to be on a server completely under the control, at least contractually, of the data owner. In other cases, there are technical security concerns, such as the potential ability of a virtual machine instance to snoop on a paused virtual machine instance of another customer, the paused image residing on the same physical server disk.

Public clouds have also suffered from availability problems, when compared to enterprise networks built for reliability.

Private cloud

Private clouds dedicate servers to specific customers or customer groups. Access may be by secure Internet session or over a virtual private network.

Purely from a server standpoint, they cannot offer the same economy of scale as a public cloud. With good capacity planning for a sufficiently large number of server instances, however, they can still be cheaper than in-house server farms because the data center infrastructure is shared.

The servers in a private cloud could actually be located on the customer's premises, but managed by a third party.

Community cloud

Community clouds are variants of private clouds. They are run by a customer manager, such as the General Services Administration of the U.S. government or a banking cooperative, and have multiple trusted but separately administered user organizations as tenants. Where a single-user private cloud is more like an outsourced intranet, a community cloud is more like an outsourced extranet.

The Department of Defense community cloud, RACE, initially committed to 99.999 percent uptime, while Google offers 99.9 percent availability. Over a year, that translates to roughly 5 versus 526 minutes of downtime.
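The downtime figures follow directly from the availability percentages. The short sketch below reproduces the arithmetic; it assumes a 365-day year, and the function name is illustrative rather than taken from any source.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes; leap years are ignored


def annual_downtime_minutes(availability_percent: float) -> float:
    """Return the yearly downtime, in minutes, permitted by an availability target."""
    return MINUTES_PER_YEAR * (100.0 - availability_percent) / 100.0


for label, percent in (("RACE, 99.999 percent", 99.999), ("Google, 99.9 percent", 99.9)):
    print(f"{label}: {annual_downtime_minutes(percent):.1f} minutes per year")
```

The two results, about 5.3 and 525.6 minutes, round to the 5 and 526 minutes quoted above.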

Hybrid cloud

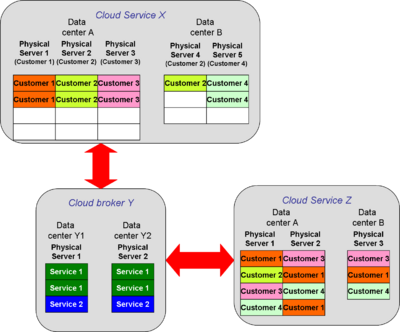

Hybrid clouds are made up of interconnected private or public clouds. Interconnection may run through a third, mutually trusted cloud.

Information brokerage

In the illustration, assume the private cloud is a set of banks, which may, in fact, run their own clouds. The public cloud might serve e-commerce providers, hosting their websites.

The broker, Y, is a payment clearinghouse, trusted by both sides. Payment clearinghouses run multiple services, such as credit card authorization, and reconciliation of bank payments to merchants. Some, such as PayPal, may also have end user interfaces, or be purely business-to-business.

Alternatively, the broker could be a wholesaler for private content providers, such as pay-for-view entertainment.

In a hybrid cloud, the private networks can maintain their own security policies. If they have a compliance overseer, that organization can audit the broker much more easily than auditing all the possible untrusted-to-trusted relationships.

There are no particular connectivity assumptions about hybrid clouds, although all links to the broker are apt to be secure, and the links to the private clouds are more likely to be on secure VPNs or even private IP networks.

Cooperating clouds

Several cloud vendors have established frameworks that let customers or third-party software developers build applications involving multiple clouds. As described by Daniel Burton, Senior Vice President for Global Public Policy at Salesforce.com, three such frameworks are:[34]

- Between Salesforce and Amazon:

  - Bring Amazon Web Services to Force.com applications

  - Develop in Java, Ruby on Rails, LAMP stack

  - Access mega storage from Amazon S3

  - Burst a Force.com app to Amazon EC2

- Between Google App Engine and Salesforce:

  - Python library and test harness

  - Access Force.com Web Services API from within Google App Engine applications

- Between Facebook and Salesforce:

  - Force.com developers build social apps for the enterprise

  - Facebook users access apps on Force.com Sites

Delivery assurance

One of the great challenges of clouds is that interfaces and boundaries, previously under the control of the owning organization, may now be in unknown or changing places. Moving to a cloud, especially in environments that have regulatory compliance requirements, does not relieve the data owner of responsibility.

Mell and Grance do observe that there are potential security advantages of clouds, but these require the cloud to implement a variety of functions not always available in commercial services. For example, distributed, replicated storage with no single point of failure is certainly possible in a storage cloud, but the Sidekick failure demonstrated that a service provider might not even implement single-point backup.

Security, which the Federal Information Security Management Act of 2002 defines as including availability, confidentiality and integrity, may well be the greatest obstacle to deploying cloud technology. It simply may not be possible for the user to audit and control certain security mechanisms in the cloud. There is a spectrum of risk and benefit: few would worry about a read-only webcam that shows a view of the nearby harbor being on any cloud; few would accept a military system that controls the use of nuclear weapons being on anything other than a highly isolated network.

In other words, it is probably realistic to say that clouds, as of early 2010, are secure enough for some missions but not others. The security implementations for some purposes simply are not sufficiently mature. Trust and audit, however, are broader issues than security controls alone.

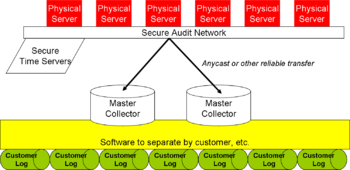

A particularly challenging problem is logging that meets audit requirements. When a virtual machine instance (VMI) produces events that should be logged, and that VMI moves among different physical servers, the log disks on any one hosting server can no longer hold a complete record of its processing. Instead, log events need to be sent to independent logging servers.

Security: a mixed blessing

Security, including security with third-party add-ons, is a critical part of cloud solutions, but experience shows the solution can itself be a problem. Bill Brenner, a senior editor for CSO (Chief Security Officer), tells of the five mistakes made by one such third-party security vendor. The mistakes were compounded when a buggy update was deployed with neither adequate warning nor recovery mechanisms:[35]

- Updating the SaaS product [with the security update] without telling customers or letting them opt out. The update affected endpoint security, so it was visible to surprised users.

- Not offering a rollback to the last prior version

- Not offering customers a choice to select timing of an upgrade or allowing a period of testing

- New versions ignore prior configurations or settings, which creates instability in the customer environment... it disregarded whitelist and firewall settings programmed into the previous version, causing computers to suddenly bog down with pop-up warnings for a variety of commonly-used applications, including those built and maintained in-house. "The client now doesn't trust itself and blocks everything. Integrity between a cloud and an endpoint is essential, and this sort of disconnect could be exploited for denial-of-service attacks and the like. Vendors need to be thinking about this."

- Not offering a safety valve

Auditing

Trust is not only a cloud issue. Alan Murphy points out that to get the benefits of clouds, one has to trust the providers for certain things, but doing so continues a trend in information technology. "I have to modify my level of trust, and apply new and stronger safeguards to the rest of my workflow processes (personal and professional) to make sure I’m able to recover if/when there is a massive breach that’s beyond my control. My recovery is something I can control, and I definitely trust myself." In his early work, he did detailed on-site audits of traditional physical data centers.

What I took from that multi-year experience: It’s extremely expensive to conduct these types of audits, and at some point the liability baton is passed to the people actually implementing the technology, away from those who designed it. I could interview people all day, and spend weeks walking through their network, but once I left the premises and filed my report, it was up to them to stick to those procedures. We had to trust (in our case legally) that what I saw remained in place...in the cloud model, we have to trust so many new components in the stack. Of course we can have safeguards (SSL) and checks and balances (pen-tests, people who responsibly disclose security flaws) but at a minimum, those require near unfettered access to systems that are no longer in our control and require knowledgeable people to address them. In my auditing days I had unfettered access, during a specific window of time. Once I was done my access went away.[36]

Clouds will need different audit models than do traditional data centers. The illustration shows physical servers onto which the virtual machine instances (VMI) may map. As each VMI generates a loggable event, typically using calls to syslog or SNMP, the physical server inserts a timestamp from a trusted (i.e., cryptographically signed) Network Time Protocol server, and then transmits multiple copies of the log event to distributed master logs within the provider infrastructure. At those locations, software and servers sort the log events by VMI and customer, and create viewable, secure logs that the customers can audit. This design is a synthesis of multiple techniques, although some ideas came from an informal presentation by Peter Mell and Tim Grance of NIST.[37]
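The flow described above can be sketched in a few lines of Python. This is a minimal in-memory model under stated assumptions, not a description of any provider's implementation: the class names (`LogEvent`, `MasterLog`, `PhysicalHost`) are hypothetical, the "trusted clock" is a simple counter standing in for a signed time source, and real transport such as syslog or SNMP is omitted.

```python
import itertools
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class LogEvent:
    customer: str     # tenant that owns the VMI
    vmi_id: str       # virtual machine instance that raised the event
    timestamp: float  # stamped by the physical host, not by the VMI
    message: str


@dataclass
class MasterLog:
    """One of several independent log collectors inside the provider."""
    events: List[LogEvent] = field(default_factory=list)

    def receive(self, event: LogEvent) -> None:
        self.events.append(event)

    def view_for(self, customer: str) -> DefaultDict[str, List[LogEvent]]:
        """Return only this customer's events, grouped by VMI, in time order."""
        by_vmi: DefaultDict[str, List[LogEvent]] = defaultdict(list)
        for event in sorted(self.events, key=lambda e: e.timestamp):
            if event.customer == customer:
                by_vmi[event.vmi_id].append(event)
        return by_vmi


@dataclass
class PhysicalHost:
    """Hosting server: stamps each event and sends a copy to every master log."""
    trusted_clock: Callable[[], float]  # stand-in for a trusted time source
    masters: List[MasterLog]

    def log(self, customer: str, vmi_id: str, message: str) -> LogEvent:
        event = LogEvent(customer, vmi_id, self.trusted_clock(), message)
        for master in self.masters:
            master.receive(event)
        return event


# Usage: a VMI migrates from host A to host B, yet its log stays complete.
ticks = itertools.count(1)
clock = lambda: float(next(ticks))
masters = [MasterLog(), MasterLog()]
host_a = PhysicalHost(clock, masters)
host_b = PhysicalHost(clock, masters)

host_a.log("acme", "vmi-1", "started")
host_b.log("acme", "vmi-1", "resumed after migration")
host_b.log("globex", "vmi-9", "started")

acme_view = masters[0].view_for("acme")
```

Because every host forwards to the same set of master logs, the customer's view of `vmi-1` contains both events even though they were raised on different physical servers, and another tenant's events never appear in it.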

Alternatively, in a community cloud, independent auditors can apply suitable tests to the customer data extractors and certify them, perhaps for a given digitally signed version of the code. This design approach does assume that the VMI-hypervisor is trusted; there have been some experimental side channel attacks from one VMI to another. This is an area, especially when there are legal requirements to demonstrate due diligence, in which recognized expert help may be needed.

Unexpected consequences

In a white paper, Riverbed, a maker of WAN acceleration devices, pointed out that if computing services that previously ran on LAN-connected servers in an enterprise headquarters move to a cloud, the headquarters users, accustomed to LAN speed, now become remote workers just as are people in branch offices and mobile workers. All need to go through a WAN.

As long as WAN speeds are adequate, there should be no impact. If, however, speed or distance-related latency has a noticeable impact on performance, there could be substantial dissatisfaction at headquarters. Public clouds, in which the customer has little or no control over the server locations, may be more likely to be affected.[38] Riverbed encourages use of its WAN accelerators, but the idea of changed performance for previously LAN-attached users is a legitimate concern.
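A rough model shows why latency, rather than bandwidth alone, can dominate for previously LAN-attached users. The sketch below assumes response time is approximately the number of application round trips times the round-trip time, plus the transfer time for the payload. All figures are illustrative assumptions, not measurements from the Riverbed paper.

```python
def response_time_seconds(round_trips: int, rtt_ms: float,
                          payload_bytes: int, bandwidth_mbps: float) -> float:
    """Estimate response time as a latency component plus a transfer component."""
    latency_part = round_trips * rtt_ms / 1000.0
    transfer_part = payload_bytes * 8 / (bandwidth_mbps * 1_000_000)
    return latency_part + transfer_part


# Hypothetical "chatty" application: 200 round trips and 2 MB of data per operation.
lan = response_time_seconds(200, rtt_ms=0.5, payload_bytes=2_000_000, bandwidth_mbps=1000.0)
wan = response_time_seconds(200, rtt_ms=40.0, payload_bytes=2_000_000, bandwidth_mbps=50.0)

print(f"LAN: {lan:.3f} s, WAN: {wan:.3f} s")
```

Under these assumed numbers, almost all of the WAN response time comes from the round trips rather than from the data transfer, so adding bandwidth alone would not restore LAN-like behavior.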

Acceptable use

If the application or data is controversial, it may be appropriate to operate on parallel clouds, possibly in different countries. Amazon terminated the Amazon Elastic Compute Cloud (EC2) Infrastructure as a Service contract of WikiLeaks, saying

When companies or people go about securing and storing large quantities of data that isn’t rightfully theirs, and publishing this data without ensuring it won’t injure others, it’s a violation of our terms of service, and folks need to go operate elsewhere[39]

The decision led to questions about the pure business risk of putting applications on cloud computing, since a cloud provider might abruptly terminate service for an acceptable use policy violation, although this can also happen with hosted servers.[40] The Electronic Frontier Foundation observed that "online free speech is only as strong as the weakest intermediary"; rights under the First Amendment to the U.S. Constitution do not apply to private contracts. "...a web hosting company isn't the government. It's a private actor and it certainly can choose what to publish and what not to publish. Indeed, Amazon has its own First Amendment right to do so."[41] An online publisher or hosting service may yield to informal government pressure, or simply decide to sever a relationship that brings bad publicity.

Compliance concerns

Various sectors and industries have compliance requirements, such as the Payment Card Industry Data Security Standard (PCI DSS), HIPAA in health care, and FISMA in the U.S. Government. There is no general answer as to whether cloud computing can be trusted for compliance, but analysis may show some customer-cloud combinations where it can, and some where it cannot.

PCI DSS

Several vendors have said they either are PCI DSS compliant, or, like Amazon, “in the process of, and will continue our efforts to obtain the strictest of industry certifications in order to verify our commitment to provide a secure, world-class cloud computing environment.” [42] Savvis describes PCI compliance in some detail; [43] Terremark states it is compliant but does not go into detail.

HIPAA

HIPAA requires that there be protection against physical theft of servers containing Protected Health Information. Google, for some customers that have a requirement for physical control of the data, has responded with an architecture that splits files across geographically separated servers, so no one theft can capture an entire file.
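The general idea of splitting a file so that no single site holds all of it can be illustrated with simple round-robin striping. This is only a sketch of the concept; the source does not describe how Google's architecture actually works. The site names, chunk size, and function names are hypothetical.

```python
from typing import Dict, List


def stripe(data: bytes, sites: List[str], chunk_size: int = 4) -> Dict[str, List[bytes]]:
    """Distribute fixed-size chunks of data across sites in round-robin order."""
    if len(sites) < 2:
        raise ValueError("need at least two sites to split a file")
    if chunk_size < 1:
        raise ValueError("chunk_size must be positive")
    placement: Dict[str, List[bytes]] = {site: [] for site in sites}
    for index, start in enumerate(range(0, len(data), chunk_size)):
        placement[sites[index % len(sites)]].append(data[start:start + chunk_size])
    return placement


def reassemble(placement: Dict[str, List[bytes]], sites: List[str]) -> bytes:
    """Rebuild the original data by reading chunks back in round-robin order."""
    per_site = [placement[site] for site in sites]
    chunks: List[bytes] = []
    for round_index in range(max(len(site_chunks) for site_chunks in per_site)):
        for site_chunks in per_site:
            if round_index < len(site_chunks):
                chunks.append(site_chunks[round_index])
    return b"".join(chunks)


record = b"patient-record-0001"
sites = ["site-east", "site-west", "site-central"]  # hypothetical locations
placement = stripe(record, sites)
restored = reassemble(placement, sites)
```

Plain striping like this still leaves readable fragments at each site, so a production design would presumably combine it with encryption; the sketch shows only that the theft of one site's servers does not yield the whole file.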

Sarbanes-Oxley Act

Section 302 of the Sarbanes-Oxley Act makes the Chief Executive Officer and Chief Financial Officer personally responsible for internal controls. If some of the internal controls are implemented with clouds, is the cloud provider a reliable agent? For such control to exist, the cloud provider must adequately disclose its audit mechanisms and provide the customer with adequate visibility at the boundaries. The analogy has been made that telecommunications logs, such as telephone billing records, are frequently outsourced.

Sarbanes-Oxley auditors may require penetration testing of the computing service, but many cloud vendors, such as Amazon EC2, contractually forbid their customers to do penetration testing.

Legal discovery

Rule 34 of the Federal Rules of Civil Procedure states "a party may serve on any other party a request .... to produce and permit the requesting party or its representative to inspect, copy, test, or sample the ... items in the responding party's possession, custody, or control." In this context, "control" is ill-defined. Does the data owner or the cloud operator have control? If the provider, is it the customer account, spread over multiple physical servers, which is the target of discovery? Alternatively, in a public network, might the discovery request return the data of all customers on those servers?

Many new technologies separate identity from physical location. The USA PATRIOT Act updated the concept of warrants for telephone surveillance by letting a warrant be directed at a subscriber, dealing with the problem of "throwaway" cellular phones and ever-changing phone numbers. Earlier, the Communications Assistance for Law Enforcement Act (CALEA) dealt with the problem that a warrant for tapping a physical line made no sense when thousands of calls were multiplexed into switches.

Licensing

Richard Stallman, founder of the GNU project and the Free Software Foundation, has stated that cloud computing is a 'trap' being hyped by proprietary software vendors: "It's stupidity. It's worse than stupidity: it's a marketing hype campaign".[44] The rise in popularity of online applications and cloud computing has prompted the FSF to offer an alternative licence more suited to online software, the Affero GPL. This combines the GPL with an extra restriction that essentially makes offering an online service count as equivalent to distribution, so the operator of a hosted online service must release any custom modifications made to the codebase for the hosted version.

References

1. Peter Mell, Tim Grance (7 October 2009), Effectively and Securely Using the Cloud Computing Paradigm, NIST Information Technology Laboratory, pp. 76-79

2. Carl Brooks (16 Nov 2009), SaaS and the future of cloud rosy, says Ariba marketing chief, SearchCloudComputing.com

3. Mell and Grance, October 2009, p. 8, 17-18

4. Anders Bylund (25 February 2010), "How Big Is the Cloud Computing Opportunity?", Motley Fool

5. Why Larry Ellison Hates Cloud Computing on YouTube, excerpted from a conversation with Ed Zander, former CEO of Motorola, at the Commonwealth Club in California.

6. Cloud Security Alliance

7. Chris Ziegler (11 October 2009), "Sidekick failure rumors point fingers at outsourcing, lack of backups", Engadget

8. V. Bertocci (April 2008), Cloud Computing and Identity, MSDN

9. Joe Weinman, Vice President of Solutions Sales, AT&T, 3 Nov. 2008, cited by Burton

10. Kevin Hartig (15 April 2009), "What is Cloud Computing?", Cloud Computing Journal

11. "Cloud Computing", Schneier on Security, June 4, 2009

12. Eric Knorr, Galen Gruman (7 April 2008), What cloud computing really means

13. Security Guidance for Critical Areas of Focus in Cloud Computing (V2.1 ed.), Cloud Security Alliance, December 2009, pp. 14-18

14. SaaS: Seizing Opportunities, Meeting Challenges, Mitigating Risks, Savvis

15. Robert Collazzo (10 March 2010), Introducing ProServe In The Cloud, Rackspace

16. Force.com Platform, Salesforce.com

17. Nikita Ivanov, Java Cloud Computing - Two Approaches, GridGain Computing Platform

18. Elastic Grid, Products

19. White paper: Solving On-Premise Email Management Services with On-Demand Services, Dell Modular Services, 2009

20. Solutions, StrikeIron Data as a Service

21. Jon Brodkin (31 August 2009), "VMware cloud initiative raises vendor lock-in issue", Network World, p. 1, 19

22. John Fontana (31 August 2009), "Red Hat targets heavyweights in virtualization, cloud computing", Network World, p. 1, 24

23. CSA Guide, p. 15

24. Amazon Machine Images, Amazon.com

25. "How credible is EC2’s competition?", ShareVM Blog, 8 March 2009

26. Aaron Ricadela (16 November 2007), "Computing Heads for Clouds", Business Week

27. Chris Preimesberger (4 June 2008), "Terremark Launches 'Enterprise Cloud'", eWeek

28. Lucas Mearian (13 July 2009), "Consumers find rich array of cloud storage options: Which online service is right for you?", Computerworld

29. Jon Brodkin (15 March 2009), "60% of virtual servers less secure than physical machines, Gartner says: As virtualization adoption grows, so do security risks", Network World

30. Jon Brodkin (6 July 2009), "Rackspace to issue as much as $3.5M in customer credits after outage", Network World

31. Andrew Nusca (1 April 2009), DDoS attack on UltraDNS affects Amazon.com, SalesForce.com, Petco.com, ZDNet

32. Scott Wilson (24 February 2009), "Further failures cast cloud into question", CIO Blog

33. CSA Guidance, p. 21

34. Daniel Burton (23 March 2009), Salesforce.com Presentation at Cloud Computing Interoperability Workshop, Object Management Group

35. Bill Brenner (30 September 2009), "5 Mistakes a Security Vendor Made in the Cloud: Here's the cautionary tale of how one security vendor went astray in the computing cloud, and what customers can learn from it. (Part 3 in a series)", CSO

36. Alan Murphy, "Cloud Computing: a New Level of Trust", Virtual Data Center

37. Peter Mell and Tim Grance, Centralizing security monitoring in a cloud architecture, Information Technology Laboratory, NIST

38. Unleashing Cloud Performance: Making the promise of the cloud a reality, Riverbed, 2009

39. Ravi Somaiya and J. David Goodman (3 December 2010), "WikiLeaks Struggles to Stay Online After Attacks", New York Times

40. Keir Thomas (2 December 2010), "Amazon's Wikileaks Rejection Raises Cloud Trust Concerns", PCWorld

41. Rainey Reitman and Marcia Hofmann, Amazon and WikiLeaks - Online Speech is Only as Strong as the Weakest Intermediary, Electronic Frontier Foundation

42. Rich Miller (2 January 2009), "Can Cloud Computing Handle Compliance?", Data Center Knowledge

43. Payment Card Industry (PCI) Data Assessment Solutions, Savvis

44. Bobbie Johnson, Cloud computing is a trap, warns GNU founder Richard Stallman, The Guardian, 29 September 2008
