Artificial intelligence

Topic: Engineering

From HandWiki - Reading time: 65 min



Artificial intelligence (AI) is intelligence demonstrated by machines, as opposed to intelligence displayed by humans or by other animals. "Intelligence" encompasses the ability to learn and to reason, to generalize, and to infer meaning.[1] Example tasks that call for such intelligence include speech recognition, computer vision, translation between natural languages, and other mappings of inputs.[2]

AI applications include advanced web search engines (e.g., Google Search), recommendation systems (used by YouTube, Amazon, and Netflix), understanding human speech (such as Siri and Alexa), self-driving cars (e.g., Waymo), generative or creative tools (ChatGPT and AI art), automated decision-making, and competing at the highest level in strategic game systems (such as chess and Go).[3]

As machines become increasingly capable, tasks considered to require "intelligence" are often removed from the definition of AI, a phenomenon known as the AI effect.[4] For instance, optical character recognition is frequently excluded from things considered to be AI, having become a routine technology.[5]

Artificial intelligence was founded as an academic discipline in 1956, and in the years since it has experienced several waves of optimism,[6][7] followed by disappointment and the loss of funding (known as an "AI winter"),[8][9] followed by new approaches, success, and renewed funding.[7][10] AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge, and imitating animal behavior. In the first decades of the 21st century, highly mathematical and statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.[10][11]

The various sub-fields of AI research are centered around particular goals and the use of particular tools. The traditional goals of AI research include reasoning, knowledge representation, planning, learning, natural language processing, perception, and the ability to move and manipulate objects.[lower-alpha 1] General intelligence (the ability to solve an arbitrary problem) is among the field's long-term goals.[12] To solve these problems, AI researchers have adapted and integrated a wide range of problem-solving techniques, including search and mathematical optimization, formal logic, artificial neural networks, and methods based on statistics, probability, and economics. AI also draws upon computer science, psychology, linguistics, philosophy, and many other fields.

The field was founded on the assumption that human intelligence "can be so precisely described that a machine can be made to simulate it".[lower-alpha 2] This raised philosophical arguments about the mind and the ethical consequences of creating artificial beings endowed with human-like intelligence; these issues have been explored by myth, fiction, and philosophy since antiquity.[14] Computer scientists and philosophers have since suggested that AI may become an existential risk to humanity if its rational capacities are not steered towards goals beneficial to humankind.[lower-alpha 3] Economists have frequently highlighted the risks of redundancies from AI, and speculated about unemployment if there is no adequate social policy for full employment.[15] The term artificial intelligence has also been criticized for overhyping AI's true technological capabilities.[16][17][18]


References

- ↑ "Artificial intelligence (AI)" (in en). 16 June 2023. https://www.britannica.com/technology/artificial-intelligence. "The term is frequently applied to the project of developing systems endowed with the intellectual processes characteristic of humans, such as the ability to reason, discover meaning, generalize, or learn from past experience."

- ↑ Winston, P H (1984). Artificial intelligence. Second edition. United States. https://dl.acm.org/doi/abs/10.5555/30424.

- ↑ Google (2016).

- ↑ McCorduck (2004), p. 204.

- ↑ Schank (1991), p. 38.

- ↑ Crevier (1993), p. 109.

- ↑ 7.0 7.1 Cite error: no text was provided for the reference named "AI in the 80s".

- ↑ Cite error: no text was provided for the reference named "First AI winter".

- ↑ Cite error: no text was provided for the reference named "Second AI winter".

- ↑ 10.0 10.1 Clark (2015b).

- ↑ Cite error: no text was provided for the reference named "AI widely used 1990s".

- ↑ Cite error: no text was provided for the reference named "Artificial General Intelligence".

- ↑ McCarthy et al. (1955).

- ↑ Newquist (1994), pp. 45–53.

- ↑ E McGaughey, 'Will Robots Automate Your Job Away? Full Employment, Basic Income, and Economic Democracy' (2022) 51(3) Industrial Law Journal 511–559

- ↑ "Is AI Overhyped in 2022? Getting the Truth About the True Power". Analytics Insight. 21 March 2022. https://www.analyticsinsight.net/is-ai-overhyped-in-2022-getting-the-truth-about-the-true-power/. Retrieved 11 March 2023.

- ↑ Giles, Martin (13 September 2018). "Artificial intelligence is often overhyped—and here's why that's dangerous". MIT Technology Review. https://www.technologyreview.com/2018/09/13/240156/artificial-intelligence-is-often-overhypedand-heres-why-thats-dangerous/. Retrieved 11 March 2023.

- ↑ Basen, Ira (21 February 2020). "Is AI overhyped? Researchers weigh in on technology's promise and problems". Canadian Broadcasting Corporation. https://www.cbc.ca/radio/sunday/the-sunday-edition-for-february-23-2020-1.5468283/is-ai-overhyped-researchers-weigh-in-on-technology-s-promise-and-problems-1.5468289. Retrieved 11 March 2023.


History

Artificial beings with intelligence appeared as storytelling devices in antiquity,[1] and have been common in fiction, as in Mary Shelley's Frankenstein or Karel Čapek's R.U.R.[2] These characters and their fates raised many of the same issues now discussed in the ethics of artificial intelligence.[3]

The study of mechanical or "formal" reasoning began with philosophers and mathematicians in antiquity. The study of mathematical logic led directly to Alan Turing's theory of computation, which suggested that a machine, by shuffling symbols as simple as "0" and "1", could simulate any conceivable act of mathematical deduction. This insight that digital computers can simulate any process of formal reasoning is known as the Church–Turing thesis.[4] This, along with concurrent discoveries in neurobiology, information theory and cybernetics, led researchers to consider the possibility of building an electronic brain.[5] The first work that is now generally recognized as AI was McCulloch and Pitts' 1943 formal design for Turing-complete "artificial neurons".[6]

By the 1950s, two visions for how to achieve machine intelligence emerged. One vision, known as Symbolic AI or GOFAI, was to use computers to create a symbolic representation of the world and systems that could reason about the world. Proponents included Allen Newell, Herbert A. Simon, and Marvin Minsky. Closely associated with this approach was the "heuristic search" approach, which likened intelligence to a problem of exploring a space of possibilities for answers.

The second vision, known as the connectionist approach, sought to achieve intelligence through learning. Proponents of this approach, most prominently Frank Rosenblatt, sought to connect perceptrons in ways inspired by the connections between neurons.[7] James Manyika and others have compared the two approaches to the mind (Symbolic AI) and the brain (connectionist). Manyika argues that symbolic approaches dominated the push for artificial intelligence in this period, due in part to their connection to the intellectual traditions of Descartes, Boole, Gottlob Frege, Bertrand Russell, and others. Connectionist approaches based on cybernetics or artificial neural networks were pushed to the background but have gained new prominence in recent decades.[8]

The field of AI research was born at a workshop at Dartmouth College in 1956.[lower-alpha 4][11] The attendees became the founders and leaders of AI research.[lower-alpha 5] They and their students produced programs that the press described as "astonishing":[lower-alpha 6] computers were learning checkers strategies, solving word problems in algebra, proving logical theorems and speaking English.[lower-alpha 7][13]

By the middle of the 1960s, research in the U.S. was heavily funded by the Department of Defense[14] and laboratories had been established around the world.[15]

Researchers in the 1960s and the 1970s were convinced that symbolic approaches would eventually succeed in creating a machine with artificial general intelligence and considered this the goal of their field.[16] Herbert Simon predicted, "machines will be capable, within twenty years, of doing any work a man can do".[17] Marvin Minsky agreed, writing, "within a generation ... the problem of creating 'artificial intelligence' will substantially be solved".[18]

They had failed to recognize the difficulty of some of the remaining tasks. Progress slowed and in 1974, in response to the criticism of Sir James Lighthill[19] and ongoing pressure from the US Congress to fund more productive projects, both the U.S. and British governments cut off exploratory research in AI. The next few years would later be called an "AI winter", a period when obtaining funding for AI projects was difficult.[20]

In the early 1980s, AI research was revived by the commercial success of expert systems,[21] a form of AI program that simulated the knowledge and analytical skills of human experts. By 1985, the market for AI had reached over a billion dollars. At the same time, Japan's fifth generation computer project inspired the U.S. and British governments to restore funding for academic research.[22] However, beginning with the collapse of the Lisp Machine market in 1987, AI once again fell into disrepute, and a second, longer-lasting winter began.[23]

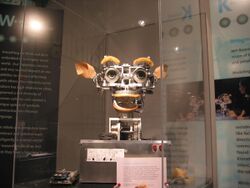

Many researchers began to doubt that the symbolic approach would be able to imitate all the processes of human cognition, especially perception, robotics, learning and pattern recognition. A number of researchers began to look into "sub-symbolic" approaches to specific AI problems.[24] Robotics researchers, such as Rodney Brooks, rejected symbolic AI and focused on the basic engineering problems that would allow robots to move, survive, and learn their environment.[lower-alpha 8]

Interest in neural networks and "connectionism" was revived by Geoffrey Hinton, David Rumelhart and others in the middle of the 1980s.[29] Soft computing tools were developed in the 1980s, such as neural networks, fuzzy systems, Grey system theory, evolutionary computation and many tools drawn from statistics or mathematical optimization.

AI gradually restored its reputation in the late 1990s and early 21st century by finding specific solutions to specific problems. The narrow focus allowed researchers to produce verifiable results, exploit more mathematical methods, and collaborate with other fields (such as statistics, economics and mathematics).[30] By 2000, solutions developed by AI researchers were being widely used, although in the 1990s they were rarely described as "artificial intelligence".[31]

Faster computers, algorithmic improvements and access to large amounts of data enabled advances in machine learning and perception; data-hungry deep learning methods started to dominate accuracy benchmarks around 2012.[32] According to Bloomberg's Jack Clark, 2015 was a landmark year for artificial intelligence, with the number of software projects that use AI within Google increasing from "sporadic usage" in 2012 to more than 2,700 projects.[lower-alpha 9] He attributed this to an increase in affordable neural networks, due to a rise in cloud computing infrastructure and to an increase in research tools and datasets.[33]

In a 2017 survey, one in five companies reported they had "incorporated AI in some offerings or processes".[34] The amount of research into AI (measured by total publications) increased by 50% in the years 2015–2019.[35] According to AI Impacts at Stanford, around 2022 about $50 billion annually is invested in artificial intelligence in the US, and about 20% of new US Computer Science PhD graduates have specialized in artificial intelligence;[36] about 800,000 AI-related US job openings existed in 2022.[37]

Numerous academic researchers became concerned that AI was no longer pursuing the original goal of creating versatile, fully intelligent machines. Much of current research involves statistical AI, which is overwhelmingly used to solve specific problems, even highly successful techniques such as deep learning. This concern has led to the subfield of artificial general intelligence (or "AGI"), which had several well-funded institutions by the 2010s.[38]

Goals

The general problem of simulating (or creating) intelligence has been broken down into sub-problems. These consist of particular traits or capabilities that researchers expect an intelligent system to display. The traits described below have received the most attention.[lower-alpha 1]

Reasoning, problem-solving

Early researchers developed algorithms that imitated step-by-step reasoning that humans use when they solve puzzles or make logical deductions.[39] By the late 1980s and 1990s, AI research had developed methods for dealing with uncertain or incomplete information, employing concepts from probability and economics.[40]

Many of these algorithms proved to be insufficient for solving large reasoning problems because they experienced a "combinatorial explosion": they became exponentially slower as the problems grew larger.[41] Even humans rarely use the step-by-step deduction that early AI research could model. They solve most of their problems using fast, intuitive judgments.[42]

Knowledge representation

Knowledge representation and knowledge engineering[43] allow AI programs to answer questions intelligently and make deductions about real-world facts.

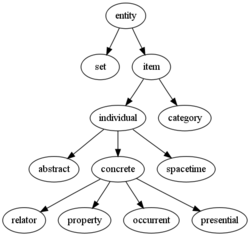

A representation of "what exists" is an ontology: the set of objects, relations, concepts, and properties formally described so that software agents can interpret them.[44] The most general ontologies are called upper ontologies, which attempt to provide a foundation for all other knowledge and act as mediators between domain ontologies that cover specific knowledge about a particular knowledge domain (field of interest or area of concern). A truly intelligent program would also need access to commonsense knowledge, the set of facts that an average person knows. The semantics of an ontology is typically represented in description logic, such as the Web Ontology Language.[45]

AI research has developed tools to represent specific domains, such as objects, properties, categories and relations between objects;[45] situations, events, states and time;[46] causes and effects;[47] knowledge about knowledge (what we know about what other people know);[48] and default reasoning (things that humans assume are true until they are told differently and will remain true even when other facts are changing).[49] Among the most difficult problems in AI are the breadth of commonsense knowledge (the number of atomic facts that the average person knows is enormous)[50] and the sub-symbolic form of most commonsense knowledge (much of what people know is not represented as "facts" or "statements" that they could express verbally).[42]

Formal knowledge representations are used in content-based indexing and retrieval,[51] scene interpretation,[52] clinical decision support,[53] knowledge discovery (mining "interesting" and actionable inferences from large databases),[54] and other areas.[55]

Learning

Machine learning (ML), a fundamental concept of AI research since the field's inception,[lower-alpha 10] is the study of computer algorithms that improve automatically through experience.[lower-alpha 11]

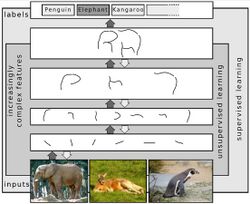

Unsupervised learning finds patterns in a stream of input.

Supervised learning requires a human to label the input data first, and comes in two main varieties: classification and numerical regression. Classification is used to determine what category something belongs in – the program sees a number of examples of things from several categories and will learn to classify new inputs. Regression is the attempt to produce a function that describes the relationship between inputs and outputs and predicts how the outputs should change as the inputs change. Both classifiers and regression learners can be viewed as "function approximators" trying to learn an unknown (possibly implicit) function; for example, a spam classifier can be viewed as learning a function that maps from the text of an email to one of two categories, "spam" or "not spam".[59]
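The "function approximator" view of regression can be illustrated with a minimal sketch. It assumes the simplest possible model, a straight line fitted by ordinary least squares; the function name `fit_line` and the sample data are hypothetical and chosen only for illustration.

```python
def fit_line(xs, ys):
    """Fit y ~ slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of the inputs, and co-variation of inputs with outputs
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical labeled examples, generated by the "unknown" function y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)


def predict(x):
    """Apply the learned function to a new, unlabeled input."""
    return slope * x + intercept
```

The learner never sees the rule that generated the data; it recovers an approximation of it from the labeled examples alone and can then predict outputs for unseen inputs.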

In reinforcement learning the agent is rewarded for good responses and punished for bad ones. The agent classifies its responses to form a strategy for operating in its problem space.[60]
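As a rough sketch of reward and punishment shaping a strategy, the example below uses tabular Q-learning, one common reinforcement learning algorithm (the text above does not name a specific one). The corridor environment, the reward values, and the constants `GAMMA` and `ALPHA` are all assumptions made for illustration.

```python
import random

N_STATES = 5          # states 0..4; state 0 is a pit, state 4 is the goal
ACTIONS = (-1, +1)    # move left or move right
GAMMA = 0.9           # discount factor (assumed)
ALPHA = 0.5           # learning rate (assumed)


def step(state, action):
    """Return (next_state, reward, done) for the toy corridor."""
    nxt = state + action
    if nxt == N_STATES - 1:
        return nxt, 1.0, True      # reward for reaching the goal
    if nxt == 0:
        return nxt, -1.0, True     # punishment for falling into the pit
    return nxt, 0.0, False


def train(episodes=2000, seed=0):
    """Learn action values by Q-learning while exploring uniformly at random."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 2, False
        while not done:
            action = rng.choice(ACTIONS)
            nxt, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            target = reward + GAMMA * best_next
            q[(state, action)] += ALPHA * (target - q[(state, action)])
            state = nxt
    return q


q = train()
# The learned strategy: in each non-terminal state, pick the highest-valued action
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in (1, 2, 3)}
```

After training, the greedy policy moves right (towards the goal) from every interior state, even though the agent was never told which direction is correct.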

Transfer learning is when the knowledge gained from one problem is applied to a new problem.[61]

Computational learning theory can assess learners by computational complexity, by sample complexity (how much data is required), or by other notions of optimization.[62]

Natural language processing

Natural language processing (NLP)[63] allows machines to read and understand human language. A sufficiently powerful natural language processing system would enable natural-language user interfaces and the acquisition of knowledge directly from human-written sources, such as newswire texts. Some straightforward applications of NLP include information retrieval, question answering and machine translation.[64]

Symbolic AI used formal syntax to translate the deep structure of sentences into logic. This failed to produce useful applications, due to the intractability of logic[41] and the breadth of commonsense knowledge.[50] Modern statistical techniques include co-occurrence frequencies (how often one word appears near another), keyword spotting (searching for a particular word to retrieve information), transformer-based deep learning (which finds patterns in text), and others.[65] They have achieved acceptable accuracy at the page or paragraph level, and, by 2019, could generate coherent text.[66]

Perception

Machine perception[67] is the ability to use input from sensors (such as cameras, microphones, wireless signals, and active lidar, sonar, radar, and tactile sensors) to deduce aspects of the world. Applications include speech recognition,[68] facial recognition, and object recognition.[69] Computer vision is the ability to analyze visual input.[70]

Social intelligence

Affective computing is an interdisciplinary umbrella that comprises systems that recognize, interpret, process or simulate human feeling, emotion and mood.[72] For example, some virtual assistants are programmed to speak conversationally or even to banter humorously; this makes them appear more sensitive to the emotional dynamics of human interaction, or otherwise facilitates human–computer interaction. However, this tends to give naïve users an unrealistic conception of how intelligent existing computer agents actually are.[73] Moderate successes related to affective computing include textual sentiment analysis and, more recently, multimodal sentiment analysis, wherein AI classifies the affects displayed by a videotaped subject.[74]

General intelligence

A machine with general intelligence can solve a wide variety of problems with breadth and versatility similar to human intelligence. There are several competing ideas about how to develop artificial general intelligence. Hans Moravec and Marvin Minsky argue that work in different individual domains can be incorporated into an advanced multi-agent system or cognitive architecture with general intelligence.[75] Pedro Domingos hopes that there is a conceptually straightforward, but mathematically difficult, "master algorithm" that could lead to AGI.[76] Others believe that anthropomorphic features like an artificial brain[77] or simulated child development[lower-alpha 12] will someday reach a critical point where general intelligence emerges.

Tools

Search and optimization

AI can solve many problems by intelligently searching through many possible solutions.[78] Reasoning can be reduced to performing a search. For example, logical proof can be viewed as searching for a path that leads from premises to conclusions, where each step is the application of an inference rule.[79] Planning algorithms search through trees of goals and subgoals, attempting to find a path to a target goal, a process called means-ends analysis.[80] Robotics algorithms for moving limbs and grasping objects use local searches in configuration space.[81]

Simple exhaustive searches[82] are rarely sufficient for most real-world problems: the search space (the number of places to search) quickly grows to astronomical numbers. The result is a search that is too slow or never completes. The solution, for many problems, is to use "heuristics" or "rules of thumb" that prioritize choices in favor of those more likely to reach a goal and to do so in fewer steps. In some search methodologies, heuristics can also serve to eliminate some choices unlikely to lead to a goal (called "pruning the search tree"). Heuristics supply the program with a "best guess" for the path on which the solution lies.[83] Heuristics limit the search for solutions to a smaller sample size.[84]
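A heuristic that prioritizes promising choices can be sketched with the A* algorithm, a well-known heuristic search method chosen here for illustration (the text above does not single it out). The grid layout, the function name `a_star`, and the use of Manhattan distance as the "best guess" are assumptions.

```python
import heapq


def a_star(grid, start, goal):
    """Return the length of a shortest path on a 4-connected grid, or None.

    Cells containing 0 are free and cells containing 1 are walls.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates the remaining cost here
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    # Each queue entry is (estimated total cost, cost so far, cell)
    frontier = [(heuristic(start), 0, start)]
    best_cost = {start: 0}
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            return cost
        if cost > best_cost[cell]:
            continue  # stale entry: a cheaper route to this cell was found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_cost
                    estimate = new_cost + heuristic((nr, nc))
                    heapq.heappush(frontier, (estimate, new_cost, (nr, nc)))
    return None


GRID = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
path_length = a_star(GRID, (0, 0), (3, 0))
```

The priority queue always expands the cell whose estimated total cost is lowest, so cells that the heuristic judges unpromising may never be examined at all.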

A very different kind of search came to prominence in the 1990s, based on the mathematical theory of optimization. For many problems, it is possible to begin the search with some form of a guess and then refine the guess incrementally until no more refinements can be made. These algorithms can be visualized as blind hill climbing: we begin the search at a random point on the landscape, and then, by jumps or steps, we keep moving our guess uphill, until we reach the top. Other related optimization algorithms include random optimization, beam search and metaheuristics like simulated annealing.[85] Evolutionary computation uses a form of optimization search. For example, an evolutionary algorithm may begin with a population of organisms (the guesses) and then allow them to mutate and recombine, selecting only the fittest to survive each generation (refining the guesses). Classic evolutionary algorithms include genetic algorithms, gene expression programming, and genetic programming.[86] Alternatively, distributed search processes can coordinate via swarm intelligence algorithms. Two popular swarm algorithms used in search are particle swarm optimization (inspired by bird flocking) and ant colony optimization (inspired by ant trails).[87]
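The hill-climbing picture can be made concrete with a minimal one-dimensional sketch. The `landscape` function, the fixed step size, and the starting point are hypothetical; real problems typically have many dimensions and many local peaks.

```python
def hill_climb(score, start, step=0.01, max_iters=100_000):
    """Move the guess uphill in fixed steps until no neighbor is better."""
    current = start
    for _ in range(max_iters):
        best_neighbor = max((current - step, current + step), key=score)
        if score(best_neighbor) <= score(current):
            break  # no refinement improves the guess: a (local) peak
        current = best_neighbor
    return current


def landscape(x):
    """A hypothetical landscape with a single peak at x = 3."""
    return -(x - 3.0) ** 2


best = hill_climb(landscape, start=-5.0)
```

Because the search only ever looks at immediate neighbors, it stops at the first peak it reaches. On a landscape with several peaks this may be a local rather than a global optimum, which is one motivation for methods such as simulated annealing.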

Logic

Logic[88] is used for knowledge representation and problem-solving, but it can be applied to other problems as well. For example, the satplan algorithm uses logic for planning[89] and inductive logic programming is a method for learning.[90]

Several different forms of logic are used in AI research. Propositional logic[91] involves truth functions such as "or" and "not". First-order logic[92] adds quantifiers and predicates and can express facts about objects, their properties, and their relations with each other. Fuzzy logic assigns a "degree of truth" (between 0 and 1) to vague statements such as "Alice is old" (or rich, or tall, or hungry), that are too linguistically imprecise to be completely true or false.[93] Default logics, non-monotonic logics and circumscription are forms of logic designed to help with default reasoning and the qualification problem.[49] Several extensions of logic have been designed to handle specific domains of knowledge, such as description logics;[45] situation calculus, event calculus and fluent calculus (for representing events and time);[46] causal calculus;[47] belief calculus (belief revision); and modal logics.[48] Logics to model contradictory or inconsistent statements arising in multi-agent systems have also been designed, such as paraconsistent logics.[94]
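For propositional logic, a conclusion follows from a set of premises when every assignment of truth values that satisfies the premises also satisfies the conclusion. The sketch below checks this by enumerating a truth table; the function name `entails` and the "rain"/"wet" symbols are hypothetical, and practical systems use far more efficient methods.

```python
from itertools import product


def entails(premises, conclusion, symbols):
    """True if every model of the premises is also a model of the conclusion."""
    for values in product([False, True], repeat=len(symbols)):
        model = dict(zip(symbols, values))
        if all(p(model) for p in premises) and not conclusion(model):
            return False  # found a counterexample
    return True


SYMBOLS = ["rain", "wet"]

# "rain implies wet", written with the truth functions "or" and "not"
rain_implies_wet = lambda m: (not m["rain"]) or m["wet"]
it_rains = lambda m: m["rain"]
it_is_wet = lambda m: m["wet"]

modus_ponens_holds = entails([rain_implies_wet, it_rains], it_is_wet, SYMBOLS)
implication_alone = entails([rain_implies_wet], it_is_wet, SYMBOLS)
```

The loop visits 2^n assignments for n symbols, so the running time doubles with each added symbol.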

Probabilistic methods for uncertain reasoning

Many problems in AI (including in reasoning, planning, learning, perception, and robotics) require the agent to operate with incomplete or uncertain information. AI researchers have devised a number of tools to solve these problems using methods from probability theory and economics.[95] Bayesian networks[96] are a very general tool that can be used for various problems, including reasoning (using the Bayesian inference algorithm),[lower-alpha 13][98] learning (using the expectation-maximization algorithm),[lower-alpha 14][100] planning (using decision networks)[101] and perception (using dynamic Bayesian networks).[102] Probabilistic algorithms can also be used for filtering, prediction, smoothing and finding explanations for streams of data, helping perception systems to analyze processes that occur over time (e.g., hidden Markov models or Kalman filters).[102]
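The basic step underlying Bayesian inference is Bayes' rule, which updates the probability of a hypothesis after observing evidence. The sketch below applies it once for a binary hypothesis; the function name `posterior` and all of the probabilities are hypothetical, and a full Bayesian network chains many such updates over a graph of variables.

```python
def posterior(prior, likelihood, false_positive_rate):
    """P(hypothesis | evidence) by Bayes' rule for a binary hypothesis.

    prior               = P(hypothesis)
    likelihood          = P(evidence | hypothesis)
    false_positive_rate = P(evidence | not hypothesis)
    """
    evidence = likelihood * prior + false_positive_rate * (1.0 - prior)
    return likelihood * prior / evidence


# Hypothetical numbers: a rare condition and an imperfect detector
p = posterior(prior=0.01, likelihood=0.9, false_positive_rate=0.05)
```

With these assumed numbers the posterior is roughly 0.15: a positive detection raises the probability well above the 1% prior, but the hypothesis remains unlikely because false positives outnumber true positives.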

A key concept from the science of economics is "utility", a measure of how valuable something is to an intelligent agent. Precise mathematical tools have been developed that analyze how an agent can make choices and plan, using decision theory, decision analysis,[103] and information value theory.[104] These tools include models such as Markov decision processes,[105] dynamic decision networks,[102] game theory and mechanism design.[106]
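In decision theory, a standard way to use utility is to choose the action whose expected utility is highest. The following sketch assumes a single decision with two possible outcomes; the actions, probabilities, and utility values are hypothetical.

```python
def expected_utility(outcomes):
    """Sum of probability-weighted utilities over an action's outcomes."""
    return sum(probability * utility for probability, utility in outcomes)


# Each action maps to (probability, utility) pairs for "rain" and "no rain"
ACTION_OUTCOMES = {
    "carry umbrella": [(0.4, 8.0), (0.6, 6.0)],
    "leave umbrella": [(0.4, 0.0), (0.6, 10.0)],
}

best_action = max(
    ACTION_OUTCOMES, key=lambda action: expected_utility(ACTION_OUTCOMES[action])
)
```

Models such as Markov decision processes extend this idea from a single choice to sequences of choices whose outcomes affect later states.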

Classifiers and statistical learning methods

The simplest AI applications can be divided into two types: classifiers ("if shiny then diamond") and controllers ("if diamond then pick up"). Controllers do, however, also classify conditions before inferring actions, and therefore classification forms a central part of many AI systems. Classifiers are functions that use pattern matching to determine the closest match. They can be tuned according to examples, making them very attractive for use in AI. These examples are known as observations or patterns. In supervised learning, each pattern belongs to a certain predefined class. A class is a decision that has to be made. All the observations combined with their class labels are known as a data set. When a new observation is received, that observation is classified based on previous experience.[107]

A classifier can be trained in various ways; there are many statistical and machine learning approaches. The decision tree is the simplest and most widely used symbolic machine learning algorithm.[108] K-nearest neighbor algorithm was the most widely used analogical AI until the mid-1990s.[109] Kernel methods such as the support vector machine (SVM) displaced k-nearest neighbor in the 1990s.[110] The naive Bayes classifier is reportedly the "most widely used learner"[111] at Google, due in part to its scalability.[112] Neural networks are also used for classification.[113]
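A k-nearest neighbor classifier is simple enough to sketch in a few lines: a new observation receives the majority label of the closest labeled observations. The features (shininess and hardness), the labels, and the data set below are hypothetical, echoing the "if shiny then diamond" example.

```python
import math
from collections import Counter


def knn_classify(training, query, k=3):
    """Label `query` by majority vote among its k nearest training observations.

    `training` is a list of (feature_vector, class_label) pairs.
    """
    nearest = sorted(training, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical data set: (shininess, hardness) -> class label
TRAINING = [
    ((0.90, 0.80), "diamond"),
    ((0.80, 0.90), "diamond"),
    ((0.95, 0.70), "diamond"),
    ((0.20, 0.10), "pebble"),
    ((0.10, 0.30), "pebble"),
    ((0.30, 0.20), "pebble"),
]

label = knn_classify(TRAINING, (0.85, 0.85))
```

No explicit model is built: the data set itself acts as the classifier, so every prediction requires comparing the query against the stored observations.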

Classifier performance depends greatly on the characteristics of the data to be classified, such as the dataset size, distribution of samples across classes, dimensionality, and the level of noise. Model-based classifiers perform well if the assumed model is an extremely good fit for the actual data. Otherwise, if no matching model is available, and if accuracy (rather than speed or scalability) is the sole concern, conventional wisdom is that discriminative classifiers (especially SVM) tend to be more accurate than model-based classifiers such as "naive Bayes" on most practical data sets.[114]

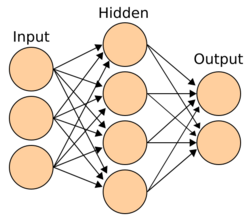

Artificial neural networks

Neural networks[113] were inspired by the architecture of neurons in the human brain. A simple "neuron" N accepts input from other neurons, each of which, when activated (or "fired"), casts a weighted "vote" for or against whether neuron N should itself activate. Learning requires an algorithm to adjust these weights based on the training data; one simple algorithm (dubbed "fire together, wire together") is to increase the weight between two connected neurons when the activation of one triggers the successful activation of another. Neurons have a continuous spectrum of activation; in addition, neurons can process inputs in a nonlinear way rather than weighing straightforward votes.
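The weighted "vote" and the "fire together, wire together" rule can be sketched as follows. This is a simplified threshold neuron with a basic Hebbian update; the weights, threshold, and learning rate are hypothetical values.

```python
def neuron_fires(inputs, weights, threshold):
    """Neuron N activates when the weighted votes reach the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return total >= threshold


def hebbian_update(weights, inputs, fired, rate=0.1):
    """Strengthen the connections from inputs that were active when N fired."""
    if not fired:
        return list(weights)
    return [w + rate * x for w, x in zip(weights, inputs)]


WEIGHTS = [0.6, 0.6, -0.4]   # positive votes for activation, negative against
THRESHOLD = 1.0

inputs = [1, 1, 0]
fired = neuron_fires(inputs, WEIGHTS, THRESHOLD)
new_weights = hebbian_update(WEIGHTS, inputs, fired)
```

Here the first two inputs vote for activation and the third votes against it. After the neuron fires, only the weights of the inputs that were active are increased.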

Modern neural networks model complex relationships between inputs and outputs and find patterns in data. They can learn continuous functions and even digital logical operations. Neural networks can be viewed as a type of mathematical optimization – they perform gradient descent on a multi-dimensional topology that was created by training the network. The most common training technique is the backpropagation algorithm.[115] Other learning techniques for neural networks are Hebbian learning ("fire together, wire together"), GMDH or competitive learning.[116]
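Training by gradient descent can be illustrated on the smallest possible network: a single sigmoid neuron learning the logical OR operation. With only one layer, the gradient can be written down directly; backpropagation applies the same chain-rule computation across many layers. The learning rate, epoch count, and cross-entropy loss are assumptions of this sketch.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Truth table for logical OR: ((x1, x2), target)
DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def train(data, rate=0.5, epochs=2000):
    """Fit weights w1, w2 and bias b by full-batch gradient descent."""
    w1 = w2 = b = 0.0
    for _ in range(epochs):
        g1 = g2 = gb = 0.0
        for (x1, x2), target in data:
            # For cross-entropy loss, d(loss)/d(pre-activation) = output - target
            error = sigmoid(w1 * x1 + w2 * x2 + b) - target
            g1 += error * x1
            g2 += error * x2
            gb += error
        # Step against the gradient to reduce the loss
        w1 -= rate * g1
        w2 -= rate * g2
        b -= rate * gb
    return w1, w2, b


def predict(params, x1, x2):
    w1, w2, b = params
    return sigmoid(w1 * x1 + w2 * x2 + b) >= 0.5


params = train(DATA)
```

After training, thresholding the neuron's output at 0.5 reproduces the OR truth table.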

The main categories of networks are acyclic or feedforward neural networks (where the signal passes in only one direction) and recurrent neural networks (which allow feedback and short-term memories of previous input events). Among the most popular feedforward networks are perceptrons, multi-layer perceptrons and radial basis networks.[117]

Deep learning

Deep learning[119] uses several layers of neurons between the network's inputs and outputs. The multiple layers can progressively extract higher-level features from the raw input. For example, in image processing, lower layers may identify edges, while higher layers may identify the concepts relevant to a human such as digits or letters or faces.[120] Deep learning has drastically improved the performance of programs in many important subfields of artificial intelligence, including computer vision, speech recognition, image classification[121] and others.

Deep learning often uses convolutional neural networks for many or all of its layers. In a convolutional layer, each neuron receives input from only a restricted area of the previous layer called the neuron's receptive field. This can substantially reduce the number of weighted connections between neurons,[122] and creates a hierarchy similar to the organization of the animal visual cortex.[123]
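The idea of a receptive field can be sketched in one dimension: each output value is computed from only a small window of the input, using the same small set of weights at every position. The signal and the two-element kernel below are hypothetical; image models use two-dimensional kernels with learned weights.

```python
def conv1d(signal, kernel):
    """Slide `kernel` over `signal`; each output sees only len(kernel) inputs.

    Strictly this is cross-correlation, which is what deep learning
    libraries usually implement under the name "convolution".
    """
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


signal = [0, 0, 0, 1, 1, 1, 0, 0]
edge_kernel = [-1, 1]   # responds where the signal changes

edges = conv1d(signal, edge_kernel)
# edges == [0, 0, 1, 0, 0, -1, 0]
```

Connecting all 8 inputs to all 7 outputs would require 56 weights, whereas this layer uses 2. The nonzero outputs mark the rising and falling edges of the signal, similar to how lower layers in an image network may identify edges.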

In a recurrent neural network (RNN) the signal will propagate through a layer more than once;[124] thus, an RNN is an example of deep learning.[125] RNNs can be trained by gradient descent;[126] however, long-term gradients that are back-propagated can "vanish" (that is, tend to zero) or "explode" (that is, tend to infinity), known respectively as the vanishing gradient problem and the exploding gradient problem.[127] The long short-term memory (LSTM) technique can prevent this in most cases.[128]
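Why long-term gradients vanish or explode can be seen from simple arithmetic: back-propagating through many time steps multiplies the gradient by a factor at each step. The sketch below assumes, for simplicity, that this factor is the same constant at every step; in a real RNN it varies with the weights and activations.

```python
def backpropagated_factor(per_step_factor, steps):
    """Size of a unit gradient after being scaled once per time step."""
    grad = 1.0
    for _ in range(steps):
        grad *= per_step_factor
    return grad


vanishing = backpropagated_factor(0.5, 50)   # shrinks towards zero
exploding = backpropagated_factor(1.5, 50)   # grows without bound
```

After only 50 steps, a factor of 0.5 leaves a gradient below 1e-14, while a factor of 1.5 yields one above 1e8. Neither is useful for adjusting weights that depend on inputs from many steps earlier.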

Specialized languages and hardware

Specialized languages and software frameworks for artificial intelligence have been developed, such as Lisp, Prolog, TensorFlow and many others. Hardware developed for AI includes AI accelerators and neuromorphic computing. By 2019, graphics processing units (GPUs), often with AI-specific enhancements, had displaced central processing units (CPUs) as the dominant means to train large-scale commercial cloud AI.[129]

Applications

AI is relevant to any intellectual task.[130] Modern artificial intelligence techniques are pervasive and are too numerous to list here.[131] Frequently, when a technique reaches mainstream use, it is no longer considered artificial intelligence; this phenomenon is described as the AI effect.[132]

In the 2010s, AI applications were at the heart of the most commercially successful areas of computing, and have become a ubiquitous feature of daily life. AI is used in search engines (such as Google Search), targeting online advertisements,[133] recommendation systems (offered by Netflix, YouTube or Amazon), driving internet traffic,[134][135] targeted advertising (AdSense, Facebook), virtual assistants (such as Siri or Alexa),[136] autonomous vehicles (including drones, ADAS and self-driving cars), automatic language translation (Microsoft Translator, Google Translate), facial recognition (Apple's Face ID or Microsoft's DeepFace), image labeling (used by Facebook, Apple's iPhoto and TikTok), spam filtering and chatbots (such as ChatGPT).

There are also thousands of successful AI applications used to solve problems for specific industries or institutions. A few examples are energy storage,[137] deepfakes,[138] medical diagnosis, military logistics, foreign policy,[139] and supply chain management.

Game playing has been a test of AI's strength since the 1950s. Deep Blue became the first computer chess-playing system to beat a reigning world chess champion, Garry Kasparov, on 11 May 1997.[140] In 2011, in a Jeopardy! quiz show exhibition match, IBM's question answering system, Watson, defeated the two greatest Jeopardy! champions, Brad Rutter and Ken Jennings, by a significant margin.[141] In March 2016, AlphaGo won 4 out of 5 games of Go in a match with Go champion Lee Sedol, becoming the first computer Go-playing system to beat a professional Go player without handicaps.[142] Other programs handle imperfect-information games such as poker at a superhuman level; examples include Pluribus[lower-alpha 15] and Cepheus.[144] In the 2010s, DeepMind developed a "generalized artificial intelligence" that could learn many diverse Atari games on its own.[145]

DeepMind's AlphaFold 2 (2020) demonstrated the ability to approximate, in hours rather than months, the 3D structure of a protein.[146] Other applications predict the result of judicial decisions,[147] create art (such as poetry or painting) and prove mathematical theorems.

Generative AI gained widespread prominence in the 2020s. "Large language model" systems such as GPT-3 (2020), with 175 billion parameters, matched human performance on pre-existing benchmarks, albeit without the system attaining a commonsense understanding of the contents of the benchmarks.[148] A Pew Research poll conducted in March 2023 found that 14% of American adults had tried ChatGPT, a large language model fine-tuned using reinforcement learning from human feedback.[149] While estimates varied wildly, Goldman Sachs suggested in 2023 that generative language AI could increase global GDP by 7% in the next ten years.[150][151]

In 2023, the increasing realism and ease-of-use of AI-based text-to-image generators such as Midjourney, DALL-E, and Stable Diffusion[152][153] sparked a trend of viral AI-generated photos. Widespread attention was gained by a fake photo of Pope Francis wearing a white puffer coat,[154] the fictional arrest of Donald Trump,[155] and a hoax of an attack on the Pentagon,[156] as well as the usage in professional creative arts.[157][158]

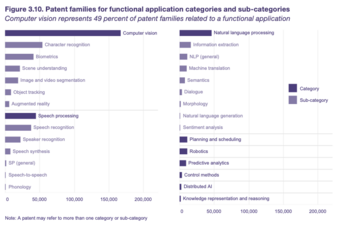

Intellectual property

In 2019, WIPO reported that AI was the most prolific emerging technology in terms of the number of patent applications and granted patents, while the Internet of things was estimated to be the largest in terms of market size. It was followed, again in market size, by big data technologies, robotics, AI, 3D printing and the fifth generation of mobile services (5G).[159] Since AI emerged in the 1950s, innovators have filed 340,000 AI-related patent applications and researchers have published 1.6 million scientific papers, with the majority of all AI-related patent filings published since 2013. Companies represent 26 out of the top 30 AI patent applicants, with universities or public research organizations accounting for the remaining four.[160] The ratio of scientific papers to inventions decreased significantly from 8:1 in 2010 to 3:1 in 2016, which is taken to indicate a shift from theoretical research to the use of AI technologies in commercial products and services.

Machine learning is the dominant AI technique disclosed in patents and is included in more than one-third of all identified inventions (134,777 machine learning patents filed for a total of 167,038 AI patents filed in 2016), with computer vision being the most popular functional application. AI-related patents not only disclose AI techniques and applications, but often also refer to an application field or industry. Twenty application fields were identified in 2016 and included, in order of magnitude: telecommunications (15 percent), transportation (15 percent), life and medical sciences (12 percent), and personal devices, computing and human–computer interaction (11 percent). Other sectors included banking, entertainment, security, industry and manufacturing, agriculture, and networks (including social networks, smart cities and the Internet of things).

IBM has the largest portfolio of AI patents with 8,290 patent applications, followed by Microsoft with 5,930 patent applications.[160]

Philosophy

Defining artificial intelligence

Alan Turing wrote in 1950 "I propose to consider the question 'can machines think'?"[161] He advised changing the question from whether a machine "thinks", to "whether or not it is possible for machinery to show intelligent behaviour".[161] He devised the Turing test, which measures the ability of a machine to simulate human conversation.[162] Since we can only observe the behavior of the machine, it does not matter if it is "actually" thinking or literally has a "mind". Turing notes that we cannot determine these things about other people[lower-alpha 16] but "it is usual to have a polite convention that everyone thinks".[163]

Russell and Norvig agree with Turing that AI must be defined in terms of "acting" and not "thinking".[164] However, they are critical that the test compares machines to people. "Aeronautical engineering texts," they wrote, "do not define the goal of their field as making 'machines that fly so exactly like pigeons that they can fool other pigeons.'"[165] AI founder John McCarthy agreed, writing that "Artificial intelligence is not, by definition, simulation of human intelligence".[166]

McCarthy defines intelligence as "the computational part of the ability to achieve goals in the world."[167] Another AI founder, Marvin Minsky, similarly defines it as "the ability to solve hard problems".[168] These definitions view intelligence in terms of well-defined problems with well-defined solutions, where both the difficulty of the problem and the performance of the program are direct measures of the "intelligence" of the machine; on this view, no further philosophical discussion is required, and it may not even be possible.

Another definition has been adopted by Google,[169] a major practitioner in the field of AI. This definition stipulates the ability of systems to synthesize information as the manifestation of intelligence, similar to the way it is defined in biological intelligence.

Evaluating approaches to AI

No established unifying theory or paradigm has guided AI research for most of its history.[lower-alpha 17] The unprecedented success of statistical machine learning in the 2010s eclipsed all other approaches (so much so that some sources, especially in the business world, use the term "artificial intelligence" to mean "machine learning with neural networks"). This approach is mostly sub-symbolic, neat, soft and narrow (see below). Critics argue that these questions may have to be revisited by future generations of AI researchers.

Symbolic AI and its limits

Symbolic AI (or "GOFAI")[171] simulated the high-level conscious reasoning that people use when they solve puzzles, express legal reasoning and do mathematics. Symbolic programs were highly successful at "intelligent" tasks such as algebra or IQ tests. In the 1960s, Newell and Simon proposed the physical symbol systems hypothesis: "A physical symbol system has the necessary and sufficient means of general intelligent action."[172]

However, the symbolic approach failed on many tasks that humans solve easily, such as learning, recognizing an object or commonsense reasoning. Moravec's paradox is the discovery that high-level "intelligent" tasks were easy for AI, but low-level "instinctive" tasks were extremely difficult.[173] Philosopher Hubert Dreyfus had argued since the 1960s that human expertise depends on unconscious instinct rather than conscious symbol manipulation, and on having a "feel" for the situation, rather than explicit symbolic knowledge.[174] Although his arguments had been ridiculed and ignored when they were first presented, AI research eventually came to agree.[lower-alpha 18][42]

The issue is not resolved: sub-symbolic reasoning can make many of the same inscrutable mistakes that human intuition does, such as algorithmic bias. Critics such as Noam Chomsky argue continuing research into symbolic AI will still be necessary to attain general intelligence,[176][177] in part because sub-symbolic AI is a move away from explainable AI: it can be difficult or impossible to understand why a modern statistical AI program made a particular decision. The emerging field of neuro-symbolic artificial intelligence attempts to bridge the two approaches.

Neat vs. scruffy

"Neats" hope that intelligent behavior is described using simple, elegant principles (such as logic, optimization, or neural networks). "Scruffies" expect that it necessarily requires solving a large number of unrelated problems (especially in areas like common sense reasoning). This issue was actively discussed in the 70s and 80s,[178] but in the 1990s mathematical methods and solid scientific standards became the norm, a transition that Russell and Norvig termed "the victory of the neats".[179]

Soft vs. hard computing

Finding a provably correct or optimal solution is intractable for many important problems.[41] Soft computing is a set of techniques, including genetic algorithms, fuzzy logic and neural networks, that are tolerant of imprecision, uncertainty, partial truth and approximation. Soft computing was introduced in the late 80s and most successful AI programs in the 21st century are examples of soft computing with neural networks.
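As an illustration of "partial truth", fuzzy logic assigns each statement a degree of truth between 0 and 1 rather than a strict true or false. The following is a minimal sketch under stated assumptions: the triangular membership function, the temperature and humidity ranges, and all names are hypothetical choices for illustration, and the AND/OR/NOT operators are the common min/max/complement definitions rather than the only possible ones.

```python
def triangular(x: float, left: float, peak: float, right: float) -> float:
    """Degree (0..1) to which x belongs to a fuzzy set shaped like a triangle."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)


def fuzzy_and(a: float, b: float) -> float:
    """Conjunction of two truth degrees (min operator)."""
    return min(a, b)


def fuzzy_or(a: float, b: float) -> float:
    """Disjunction of two truth degrees (max operator)."""
    return max(a, b)


def fuzzy_not(a: float) -> float:
    """Complement of a truth degree."""
    return 1.0 - a


if __name__ == "__main__":
    # Hypothetical fuzzy sets: "warm" peaks at 25 degrees, "humid" at 70 percent.
    warm = triangular(20.0, 15.0, 25.0, 35.0)
    humid = triangular(70.0, 40.0, 70.0, 100.0)
    print(warm)                    # 0.5
    print(humid)                   # 1.0
    print(fuzzy_and(warm, humid))  # 0.5
```

A rule such as "if warm and humid then run the fan" can then fire to a degree, rather than switching abruptly at a threshold.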

Narrow vs. general AI

AI researchers are divided as to whether to pursue the goals of artificial general intelligence and superintelligence (general AI) directly or to solve as many specific problems as possible (narrow AI) in hopes these solutions will lead indirectly to the field's long-term goals.[180][181] General intelligence is difficult to define and difficult to measure, and modern AI has had more verifiable successes by focusing on specific problems with specific solutions. The experimental sub-field of artificial general intelligence studies this area exclusively.

Machine consciousness, sentience and mind

Philosophers of mind have not settled whether a machine can have a mind, consciousness and mental states in the same sense that human beings do. This issue considers the internal experiences of the machine, rather than its external behavior. Mainstream AI research considers this issue irrelevant because it does not affect the goals of the field. Stuart Russell and Peter Norvig observe that most AI researchers "don't care about the [philosophy of AI] – as long as the program works, they don't care whether you call it a simulation of intelligence or real intelligence."[182] However, the question has become central to the philosophy of mind. It is also typically the central question at issue in artificial intelligence in fiction.

Consciousness

David Chalmers identified two problems in understanding the mind, which he named the "hard" and "easy" problems of consciousness.[183] The easy problem is understanding how the brain processes signals, makes plans and controls behavior. The hard problem is explaining how this feels or why it should feel like anything at all, assuming we are right in thinking that it truly does feel like something (Dennett's consciousness illusionism says this is an illusion). Human information processing is easy to explain; human subjective experience, however, is difficult to explain. For example, it is easy to imagine a color-blind person who has learned to identify which objects in their field of view are red, but it is not clear what would be required for the person to know what red looks like.[184]

Computationalism and functionalism

Computationalism is the position in the philosophy of mind that the human mind is an information processing system and that thinking is a form of computing. Computationalism argues that the relationship between mind and body is similar or identical to the relationship between software and hardware and thus may be a solution to the mind-body problem. This philosophical position was inspired by the work of AI researchers and cognitive scientists in the 1960s and was originally proposed by philosophers Jerry Fodor and Hilary Putnam.[185]

Philosopher John Searle characterized this position as "strong AI": "The appropriately programmed computer with the right inputs and outputs would thereby have a mind in exactly the same sense human beings have minds."[lower-alpha 19] Searle counters this assertion with his Chinese room argument, which attempts to show that, even if a machine perfectly simulates human behavior, there is still no reason to suppose it also has a mind.[188]

Robot rights

If a machine has a mind and subjective experience, then it may also have sentience (the ability to feel), and if so it could also suffer; it has been argued that this could entitle it to certain rights.[189] Any hypothetical robot rights would lie on a spectrum with animal rights and human rights.[190] This issue has been considered in fiction for centuries,[191] and is now being considered by, for example, California's Institute for the Future; however, critics argue that the discussion is premature.[192]

Future

Superintelligence

A superintelligence is a hypothetical agent that would possess intelligence far surpassing that of the brightest and most gifted human mind.[181]

If research into artificial general intelligence produced sufficiently intelligent software, it might be able to reprogram and improve itself. The improved software would be even better at improving itself, leading to recursive self-improvement.[193] Its intelligence would increase exponentially in an intelligence explosion and could dramatically surpass humans. Science fiction writer Vernor Vinge named this scenario the "singularity".[194] Because it is difficult or impossible to know the limits of intelligence or the capabilities of superintelligent machines, the technological singularity is an occurrence beyond which events are unpredictable or even unfathomable.[195]

Robot designer Hans Moravec, cyberneticist Kevin Warwick, and inventor Ray Kurzweil have predicted that humans and machines will merge in the future into cyborgs that are more capable and powerful than either. This idea, called transhumanism, has roots in Aldous Huxley and Robert Ettinger.[196]

Edward Fredkin argues that "artificial intelligence is the next stage in evolution", an idea first proposed by Samuel Butler's "Darwin among the Machines" as far back as 1863, and expanded upon by George Dyson in his book of the same name in 1998.[197]

Risks

Technological unemployment

In the past, technology has tended to increase rather than reduce total employment, but economists acknowledge that "we're in uncharted territory" with AI.[198] A survey of economists showed disagreement about whether the increasing use of robots and AI will cause a substantial increase in long-term unemployment, but they generally agree that it could be a net benefit if productivity gains are redistributed.[199] Risk estimates vary; for example, in the 2010s Michael Osborne and Carl Benedikt Frey estimated 47% of U.S. jobs are at "high risk" of potential automation, while an OECD report classified only 9% of U.S. jobs as "high risk".[lower-alpha 20][201] The methodology of speculating about future employment levels has been criticised as lacking evidential foundation, and for implying that technology (rather than social policy) creates unemployment (as opposed to redundancies).[202]

Unlike previous waves of automation, many middle-class jobs may be eliminated by artificial intelligence; The Economist stated in 2015 that "the worry that AI could do to white-collar jobs what steam power did to blue-collar ones during the Industrial Revolution" is "worth taking seriously".[203] Jobs at extreme risk range from paralegals to fast food cooks, while job demand is likely to increase for care-related professions ranging from personal healthcare to the clergy.[204]

Bad actors and weaponized AI

AI provides a number of tools that are particularly useful for authoritarian governments: smart spyware, face recognition and voice recognition allow widespread surveillance; such surveillance allows machine learning to classify potential enemies of the state and can prevent them from hiding; recommendation systems can precisely target propaganda and misinformation for maximum effect; deepfakes aid in producing misinformation; advanced AI can make centralized decision making more competitive with liberal and decentralized systems such as markets.[205]

Terrorists, criminals and rogue states may use other forms of weaponized AI such as advanced digital warfare and lethal autonomous weapons. By 2015, over fifty countries were reported to be researching battlefield robots.[206]

Machine-learning AI is also able to design tens of thousands of toxic molecules in a matter of hours.[207]

Algorithmic bias

AI programs can become biased after learning from real-world data. The bias is not typically introduced by the system designers but is learned by the program, and thus the programmers are often unaware that it exists.[208] Bias can be inadvertently introduced by the way training data is selected.[209] It can also emerge from correlations: AI is used to classify individuals into groups and then make predictions assuming that the individual will resemble other members of the group. In some cases, this assumption may be unfair.[210] An example of this is COMPAS, a commercial program widely used by U.S. courts to assess the likelihood of a defendant becoming a recidivist. ProPublica claims that the COMPAS-assigned recidivism risk level of black defendants is far more likely to be overestimated than that of white defendants, despite the fact that the program was not told the races of the defendants.[211]
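One way such a disparity can be checked is to compare error rates between groups after the fact. The sketch below is only an illustration: the records are made-up toy values, not COMPAS data, the function name is hypothetical, and it does not reproduce ProPublica's methodology. It computes, for each group, the share of people who did not reoffend but were nevertheless predicted to be high risk (the false positive rate).

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: iterable of (group, predicted_high_risk, reoffended).
    Returns {group: false positives / actual negatives}."""
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high_risk:
                false_positives[group] += 1
    return {g: false_positives[g] / negatives[g] for g in negatives}


if __name__ == "__main__":
    # Toy, invented records; the group label is used only for auditing,
    # not as an input to the (hypothetical) predictor.
    toy_records = [
        ("A", True, False), ("A", True, False),
        ("A", False, False), ("A", False, False), ("A", True, True),
        ("B", True, False), ("B", False, False),
        ("B", False, False), ("B", False, False), ("B", True, True),
    ]
    print(false_positive_rate_by_group(toy_records))  # {'A': 0.5, 'B': 0.25}
```

A gap like the one in this toy output can arise even when group membership is never given to the predictor, because other inputs may correlate with it.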

Health equity issues may also be exacerbated when many-to-many mapping is done without taking steps to ensure equity for populations at risk for bias. At this time, equity-focused tools and regulations are not in place to ensure equitable application, representation and usage.[212] Other examples where algorithmic bias can lead to unfair outcomes are when AI is used for credit rating or hiring.

At its 2022 Conference on Fairness, Accountability, and Transparency (ACM FAccT 2022) in Seoul, South Korea, the Association for Computing Machinery presented and published findings recommending that, until AI and robotics systems are demonstrated to be free of bias mistakes, they be considered unsafe, and that the use of self-learning neural networks trained on vast, unregulated sources of flawed internet data be curtailed.[213]

Existential risk

Superintelligent AI may be able to improve itself to the point that humans could not control it. This could, as physicist Stephen Hawking puts it, "spell the end of the human race".[214] Philosopher Nick Bostrom argues that sufficiently intelligent AI, if it chooses actions based on achieving some goal, will exhibit convergent behavior such as acquiring resources or protecting itself from being shut down. If this AI's goals do not fully reflect humanity's, it might need to harm humanity to acquire more resources or prevent itself from being shut down, ultimately to better achieve its goal. He concludes that AI poses a risk to mankind, however humble or "friendly" its stated goals might be.[215] Political scientist Charles T. Rubin argues that "any sufficiently advanced benevolence may be indistinguishable from malevolence." Humans should not assume machines or robots would treat us favorably because there is no a priori reason to believe that they would share our system of morality.[216]

The opinion of experts and industry insiders is mixed, with sizable fractions both concerned and unconcerned by risk from eventual superhumanly-capable AI.[217] Physicist Stephen Hawking, Microsoft founder Bill Gates, and SpaceX founder Elon Musk have expressed concern about existential risk from AI.[218] In 2023 AI pioneers Geoffrey Hinton, Yoshua Bengio, Demis Hassabis, and Sam Altman joined others to sign an open letter stating "Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war"; others such as Yann LeCun dismissed such concerns as unfounded.[219] Mark Zuckerberg (CEO, Facebook) has said that artificial intelligence is helpful in its current form and will continue to assist humans.[220] Other experts argue that the risks are far enough in the future to not be worth researching, or that humans will be valuable from the perspective of a superintelligent machine.[221] Rodney Brooks, in particular, has said that "malevolent" AI is still centuries away.[lower-alpha 21]

Copyright

In order to leverage as large a dataset as is feasible, generative AI is often trained on unlicensed copyrighted works, including in domains such as images or computer code; the output is then used under a rationale of "fair use". Experts disagree about how well, and under what circumstances, this rationale will hold up in courts of law; relevant factors may include "the purpose and character of the use of the copyrighted work" and "the effect upon the potential market for the copyrighted work".[223]

Ethical machines

Friendly AI are machines that have been designed from the beginning to minimize risks and to make choices that benefit humans. Eliezer Yudkowsky, who coined the term, argues that developing friendly AI should be a higher research priority: it may require a large investment and it must be completed before AI becomes an existential risk.[224]

Machines with intelligence have the potential to use their intelligence to make ethical decisions. The field of machine ethics provides machines with ethical principles and procedures for resolving ethical dilemmas.[225] The field of machine ethics is also called computational morality,[225] and was founded at an AAAI symposium in 2005.[226]

Other approaches include Wendell Wallach's "artificial moral agents"[227] and Stuart J. Russell's three principles for developing provably beneficial machines.[228]

Regulation

The regulation of artificial intelligence is the development of public sector policies and laws for promoting and regulating artificial intelligence (AI); it is therefore related to the broader regulation of algorithms.[229] The regulatory and policy landscape for AI is an emerging issue in jurisdictions globally.[230] According to AI Index at Stanford, the annual number of AI-related laws passed in the 127 surveyed countries jumped from one in 2016 to 37 in 2022 alone.[231][232] Between 2016 and 2020, more than 30 countries adopted dedicated strategies for AI.[35] Most EU member states had released national AI strategies, as had Canada, China, India, Japan, Mauritius, the Russian Federation, Saudi Arabia, United Arab Emirates, US and Vietnam. Others were in the process of elaborating their own AI strategy, including Bangladesh, Malaysia and Tunisia.[35] The Global Partnership on Artificial Intelligence was launched in June 2020, stating a need for AI to be developed in accordance with human rights and democratic values, to ensure public confidence and trust in the technology.[35] Henry Kissinger, Eric Schmidt, and Daniel Huttenlocher published a joint statement in November 2021 calling for a government commission to regulate AI.[233] In 2023, OpenAI leaders published recommendations for the governance of superintelligence, which they believe may happen in less than 10 years.[234]

In a 2022 Ipsos survey, attitudes towards AI varied greatly by country; 78% of Chinese citizens, but only 35% of Americans, agreed that "products and services using AI have more benefits than drawbacks".[231] A 2023 Reuters/Ipsos poll found that 61% of Americans agree, and 22% disagree, that AI poses risks to humanity.[235] In a 2023 Fox News poll, 35% of Americans thought it "very important", and an additional 41% thought it "somewhat important", for the federal government to regulate AI, versus 13% responding "not very important" and 8% responding "not at all important".[236][237]

In fiction

Thought-capable artificial beings have appeared as storytelling devices since antiquity,[1] and have been a persistent theme in science fiction.[3]

A common trope in these works began with Mary Shelley's Frankenstein, where a human creation becomes a threat to its masters. This includes such works as Arthur C. Clarke's and Stanley Kubrick's 2001: A Space Odyssey (both 1968), with HAL 9000, the murderous computer in charge of the Discovery One spaceship, as well as The Terminator (1984) and The Matrix (1999). In contrast, the rare loyal robots such as Gort from The Day the Earth Stood Still (1951) and Bishop from Aliens (1986) are less prominent in popular culture.[238]

Isaac Asimov introduced the Three Laws of Robotics in many books and stories, most notably the "Multivac" series about a super-intelligent computer of the same name. Asimov's laws are often brought up during lay discussions of machine ethics;[239] while almost all artificial intelligence researchers are familiar with Asimov's laws through popular culture, they generally consider the laws useless for many reasons, one of which is their ambiguity.[240]

Several works use AI to force us to confront the fundamental question of what makes us human, showing us artificial beings that have the ability to feel, and thus to suffer. This appears in Karel Čapek's R.U.R., the films A.I. Artificial Intelligence and Ex Machina, as well as the novel Do Androids Dream of Electric Sheep?, by Philip K. Dick. Dick considers the idea that our understanding of human subjectivity is altered by technology created with artificial intelligence.[241]

See also

- AI safety – Artificial intelligence field of study

- AI alignment – AI conformance to the intended objective

- Artificial intelligence in healthcare – Machine-learning algorithms and software in the analysis, presentation, and comprehension of complex medical and health care data

- Artificial intelligence arms race

- Behavior selection algorithm – Algorithm that selects actions for intelligent agents

- Business process automation – Technology-enabled automation of complex business processes

- Case-based reasoning – Process of solving new problems based on the solutions of similar past problems

- Emergent algorithm – Algorithm exhibiting emergent behavior

- Female gendering of AI technologies – Design of digital assistants as female

- Glossary of artificial intelligence – List of concepts in artificial intelligence

- Operations research – Discipline concerning the application of advanced analytical methods

- Robotic process automation – Form of business process automation technology

- Synthetic intelligence – Alternate term for or form of artificial intelligence

- Universal basic income

- Weak artificial intelligence – Form of artificial intelligence

- Data sources – The list of data sources for study and research

Explanatory notes

- ↑ 1.0 1.1 This list of intelligent traits is based on the topics covered by the major AI textbooks, including: (Russell Norvig), (Luger Stubblefield), (Poole Mackworth) and (Nilsson 1998)

- ↑ This statement comes from the proposal for the Dartmouth workshop of 1956, which reads: "Every aspect of learning or any other feature of intelligence can be so precisely described that a machine can be made to simulate it."[13]

- ↑ Russell and Norvig note in the textbook Artificial Intelligence: A Modern Approach (4th ed.), section 1.5: "In the longer term, we face the difficult problem of controlling superintelligent AI systems that may evolve in unpredictable ways." while referring to computer scientists, philosophers, and technologists.

- ↑ Daniel Crevier wrote, "the conference is generally recognized as the official birthdate of the new science."[9] Russell and Norvig call the conference "the birth of artificial intelligence."[10]

- ↑ Russell and Norvig wrote "for the next 20 years the field would be dominated by these people and their students."[10]

- ↑ Russell and Norvig wrote "it was astonishing whenever a computer did anything kind of smartish".[12]

- ↑ The programs described are Arthur Samuel's checkers program for the IBM 701, Daniel Bobrow's STUDENT, Newell and Simon's Logic Theorist and Terry Winograd's SHRDLU.

- ↑ Embodied approaches to AI[25] were championed by Hans Moravec[26] and Rodney Brooks[27] and went by many names: Nouvelle AI,[27] Developmental robotics,[28] situated AI, behavior-based AI as well as others. A similar movement in cognitive science was the embodied mind thesis.

- ↑ Clark wrote: "After a half-decade of quiet breakthroughs in artificial intelligence, 2015 has been a landmark year. Computers are smarter and learning faster than ever."[33]

- ↑ Alan Turing discussed the centrality of learning as early as 1950, in his classic paper "Computing Machinery and Intelligence".[56] In 1956, at the original Dartmouth AI summer conference, Ray Solomonoff wrote a report on unsupervised probabilistic machine learning: "An Inductive Inference Machine".[57]

- ↑ This is a form of Tom Mitchell's widely quoted definition of machine learning: "A computer program is said to learn from an experience E with respect to some task T and some performance measure P if its performance on T as measured by P improves with experience E."[58]

- ↑ Alan Turing suggested in "Computing Machinery and Intelligence" that a "thinking machine" would need to be educated like a child.[56] Developmental robotics is a modern version of the idea.[28]

- ↑ Compared with symbolic logic, formal Bayesian inference is computationally expensive. For inference to be tractable, most observations must be conditionally independent of one another. AdSense uses a Bayesian network with over 300 million edges to learn which ads to serve.[97]

- ↑ Expectation-maximization, one of the most popular algorithms in machine learning, allows clustering in the presence of unknown latent variables.[99]

- ↑ The Smithsonian reports: "Pluribus has bested poker pros in a series of six-player no-limit Texas Hold'em games, reaching a milestone in artificial intelligence research. It is the first bot to beat humans in a complex multiplayer competition."[143]

- ↑ See Problem of other minds

- ↑ Nils Nilsson wrote in 1983: "Simply put, there is wide disagreement in the field about what AI is all about."[170]

- ↑ Daniel Crevier wrote that "time has proven the accuracy and perceptiveness of some of Dreyfus's comments. Had he formulated them less aggressively, constructive actions they suggested might have been taken much earlier."[175]

- ↑ Searle presented this definition of "Strong AI" in 1999.[186] Searle's original formulation was "The appropriately programmed computer really is a mind, in the sense that computers given the right programs can be literally said to understand and have other cognitive states."[187] Strong AI is defined similarly by Russell and Norvig: "The assertion that machines could possibly act intelligently (or, perhaps better, act as if they were intelligent) is called the 'weak AI' hypothesis by philosophers, and the assertion that machines that do so are actually thinking (as opposed to simulating thinking) is called the 'strong AI' hypothesis."[182]

- ↑ See table 4; 9% is both the OECD average and the US average.[200]

- ↑ Rodney Brooks writes, "I think it is a mistake to be worrying about us developing malevolent AI anytime in the next few hundred years. I think the worry stems from a fundamental error in not distinguishing the difference between the very real recent advances in a particular aspect of AI and the enormity and complexity of building sentient volitional intelligence."[222]

References

- ↑ 1.0 1.1

AI in myth:

- (McCorduck 2004)

- (Russell Norvig)

- ↑ McCorduck (2004), pp. 17–25.

- ↑ 3.0 3.1 McCorduck (2004), pp. 340–400.

- ↑ Berlinski (2000).

- ↑

AI's immediate precursors:

- (McCorduck 2004)

- (Crevier 1993)

- (Russell Norvig)

- (Moravec 1988)

- ↑ Russell & Norvig (2009), p. 16.

- ↑ Manyika (2022), p. 9.

- ↑ Manyika (2022), p. 10.

- ↑ Crevier (1993), pp. 47–49.

- ↑ 10.0 10.1 Russell & Norvig (2003), p. 17.

- ↑

Dartmouth workshop:

- (Russell Norvig)

- (McCorduck 2004)

- (NRC 1999)

- (McCarthy Minsky)

- ↑ Russell & Norvig (2003), p. 18.

- ↑

Successful Symbolic AI programs:

- (McCorduck 2004)

- (Crevier 1993)

- (Moravec 1988)

- (Russell Norvig)

- ↑

AI heavily funded in the 1960s:

- (McCorduck 2004)

- (Crevier 1993)

- (NRC 1999)

- ↑ Howe (1994).

- ↑ Newquist (1994), p. 86.

- ↑ (Simon 1965) quoted in (Crevier 1993)

- ↑ (Minsky 1967) quoted in (Crevier 1993)

- ↑ Lighthill (1973).

- ↑

First AI Winter, Lighthill report, Mansfield Amendment:

- (Crevier 1993)

- (Russell Norvig)

- (NRC 1999)

- (Howe 1994)

- (Newquist 1994)

- ↑

Expert systems:

- (Russell Norvig)

- (Luger Stubblefield)

- (Nilsson 1998)

- (McCorduck 2004)

- (Crevier 1993)

- (Newquist 1994)

- ↑

Funding initiatives in the early 1980s: Fifth Generation Project (Japan), Alvey (UK), Microelectronics and Computer Technology Corporation (US), Strategic Computing Initiative (US):

- (McCorduck 2004)

- (Crevier 1993)

- (Russell Norvig)

- (NRC 1999)

- (Newquist 1994)

- ↑

Second AI Winter:

- (McCorduck 2004)

- (Crevier 1993)

- (NRC 1999)

- (Newquist 1994)

- ↑ Nilsson (1998), p. 7.

- ↑ McCorduck (2004), pp. 454–462.

- ↑ Moravec (1988).

- ↑ 27.0 27.1 Brooks (1990).

- ↑ 28.0 28.1

Developmental robotics:

- (Weng McClelland)

- (Lungarella Metta)

- (Asada Hosoda)

- (Oudeyer 2010)

- ↑

Revival of connectionism:

- (Crevier 1993)

- (Russell Norvig)

- ↑

Formal and narrow methods adopted in the 1990s:

- (Russell Norvig)

- (McCorduck 2004)

- ↑

AI widely used in the late 1990s:

- (Russell Norvig)

- (Kurzweil 2005)

- (NRC 1999)

- (Newquist 1994)

- ↑ McKinsey (2018).

- ↑ 33.0 33.1 Clark (2015b).

- ↑ (MIT Sloan Management Review 2018); (Lorica 2017)

- ↑ 35.0 35.1 35.2 35.3 UNESCO (2021).

- ↑ DiFeliciantonio, Chase (3 April 2023). "AI has already changed the world. This report shows how". San Francisco Chronicle. https://www.sfchronicle.com/tech/article/ai-artificial-intelligence-report-stanford-17869558.php.

- ↑ Goswami, Rohan (5 April 2023). "Here's where the A.I. jobs are". CNBC. https://www.cnbc.com/2023/04/05/ai-jobs-see-the-state-by-state-data-from-a-stanford-study.html.

- ↑ (Pennachin Goertzel); (Roberts 2016)

- ↑

Problem solving, puzzle solving, game playing and deduction:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Uncertain reasoning:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ 41.0 41.1 41.2

Intractability and efficiency and the combinatorial explosion:

- (Russell Norvig)

- ↑ 42.0 42.1 42.2

Psychological evidence of the prevalence of sub-symbolic reasoning and knowledge:

- (Kahneman 2011)

- (Wason Shapiro)

- (Kahneman Slovic)

- (Dreyfus Dreyfus)

- ↑

Knowledge representation and knowledge engineering:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ Russell & Norvig (2003), pp. 320–328.

- ↑ 45.0 45.1 45.2

Representing categories and relations: Semantic networks, description logics, inheritance (including frames and scripts):

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ 46.0 46.1 Representing events and time: Situation calculus, event calculus, fluent calculus (including solving the frame problem):

- (Russell Norvig)

- (Poole Mackworth)

- (Nilsson 1998)

- ↑ 47.0 47.1

Causal calculus:

- (Poole Mackworth)

- ↑ 48.0 48.1

Representing knowledge about knowledge: Belief calculus, modal logics:

- (Russell Norvig)

- (Poole Mackworth)

- ↑ 49.0 49.1

Default reasoning, Frame problem, default logic, non-monotonic logics, circumscription, closed world assumption, abduction:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ 50.0 50.1

Breadth of commonsense knowledge:

- (Russell Norvig)

- (Crevier 1993)

- (Moravec 1988)

- (Lenat Guha)

- ↑ Smoliar & Zhang (1994).

- ↑ Neumann & Möller (2008).

- ↑ Kuperman, Reichley & Bailey (2006).

- ↑ McGarry (2005).

- ↑ Bertini, Del Bimbo & Torniai (2006).

- ↑ 56.0 56.1 Turing (1950).

- ↑ Solomonoff (1956).

- ↑ Russell & Norvig (2003), pp. 649–788.

- ↑

Learning:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Reinforcement learning:

- (Russell Norvig)

- (Luger Stubblefield)

- ↑ The Economist (2016).

- ↑ Jordan & Mitchell (2015).

- ↑

Natural language processing (NLP):

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- ↑

Applications of NLP:

- (Russell Norvig)

- (Luger Stubblefield)

- ↑ Modern statistical approaches to NLP:

- (Cambria White)

- ↑ Vincent (2019).

- ↑

Machine perception:

- (Russell Norvig)

- (Nilsson 1998)

- ↑

Speech recognition:

- (Russell Norvig)

- ↑

Object recognition:

- (Russell Norvig)

- ↑

Computer vision:

- (Russell Norvig)

- (Nilsson 1998)

- ↑ MIT AIL (2014).

- ↑

Affective computing:

- (Thro 1993)

- (Edelson 1991)

- (Tao Tan)

- (Scassellati 2002)

- ↑ Waddell (2018).

- ↑ Poria et al. (2017).

- ↑

The Society of Mind:

- (Minsky 1986)

- (Moravec 1988)

- (Russell Norvig)

- (Nilsson 1998)

- ↑ Domingos (2015), chapter 9.

- ↑

Artificial brain as an approach to AGI:

- (Russell Norvig)

- (Crevier 1993)

- (Goertzel et al. 2010)

- (Moravec 1988)

- (Kurzweil 2005)

- (Hawkins Blakeslee)

- ↑

Search algorithms:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ Forward chaining, backward chaining, Horn clauses, and logical deduction as search:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

State space search and planning:

- (Russell Norvig)

- (Poole Mackworth)

- (Nilsson 1998)

- ↑ Moving and configuration space:

- (Russell Norvig)

- ↑ Uninformed searches (breadth-first search, depth-first search and general state space search):

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Heuristic or informed searches (e.g., greedy best-first and A*):

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- ↑ Tecuci (2012).

- ↑ Optimization searches:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- ↑

Genetic programming and genetic algorithms:

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Artificial life and society-based learning:

- (Luger Stubblefield)

- (Merkle Middendorf)

- ↑

Logic:

- (Russell Norvig)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Satplan:

- (Russell Norvig)

- (Poole Mackworth)

- (Nilsson 1998)

- ↑

Explanation-based learning, relevance-based learning, inductive logic programming, case-based reasoning:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Propositional logic:

- (Russell Norvig)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ First-order logic and features such as equality:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Fuzzy logic:

- (Russell Norvig)

- (Scientific American 1999)

- ↑ Abe, Jair Minoro; Nakamatsu, Kazumi (2009). "Multi-agent Systems and Paraconsistent Knowledge". Knowledge Processing and Decision Making in Agent-Based Systems. Studies in Computational Intelligence. 170. Springer Berlin Heidelberg. pp. 101–121. doi:10.1007/978-3-540-88049-3_5. ISBN 978-3-540-88048-6. https://link.springer.com/chapter/10.1007/978-3-540-88049-3_5. Retrieved 2 August 2022.

- ↑

Stochastic methods for uncertain reasoning:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑

Bayesian networks:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ Domingos (2015), chapter 6.

- ↑

Bayesian inference algorithm:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- ↑ Domingos (2015), p. 210.

- ↑

Bayesian learning and the expectation-maximization algorithm:

- (Russell Norvig)

- (Poole Mackworth)

- (Nilsson 1998)

- (Domingos 2015)

- ↑ Bayesian decision theory and Bayesian decision networks:

- (Russell Norvig)

- ↑ 102.0 102.1 102.2 Stochastic temporal models:

- (Russell Norvig)

- ↑

Decision theory and decision analysis:

- (Russell Norvig)

- (Poole Mackworth)

- ↑

Information value theory:

- (Russell Norvig)

- ↑ Markov decision processes and dynamic decision networks:

- (Russell Norvig)

- ↑ Game theory and mechanism design:

- (Russell Norvig)

- ↑

Statistical learning methods and classifiers:

- (Russell Norvig)

- (Luger Stubblefield)

- ↑

Decision tree:

- (Domingos 2015)

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- ↑

K-nearest neighbor algorithm:

- (Domingos 2015)

- (Russell Norvig)

- ↑

Kernel methods such as the support vector machine:

- (Domingos 2015)

- (Russell Norvig)

- ↑ Domingos (2015), p. 152.

- ↑

Naive Bayes classifier:

- (Domingos 2015)

- (Russell Norvig)

- ↑ 113.0 113.1

Neural networks:

- (Russell Norvig)

- (Poole Mackworth)

- (Luger Stubblefield)

- (Nilsson 1998)

- (Domingos 2015)

- ↑

Classifier performance:

- (van der Walt Bernard)

- (Russell Norvig)

- ↑

Backpropagation:

- (Russell Norvig)

- (Luger Stubblefield)

- (Nilsson 1998)

- (Werbos 1974); (Werbos 1982)

- (Linnainmaa 1970); (Griewank 2012)

- ↑

Competitive learning, Hebbian coincidence learning, Hopfield networks and attractor networks:

- (Luger Stubblefield)

- ↑

Feedforward neural networks, perceptrons and radial basis networks:

- (Russell Norvig)

- (Luger Stubblefield)

- ↑ Schulz & Behnke (2012).

- ↑

Deep learning:

- (Goodfellow Bengio)

- (Hinton et al. 2016)

- (Schmidhuber 2015)

- ↑ Deng & Yu (2014), pp. 199–200.

- ↑ Ciresan, Meier & Schmidhuber (2012).

- ↑ Habibi (2017).

- ↑ Fukushima (2007).

- ↑

Recurrent neural networks, Hopfield nets:

- (Russell Norvig)

- (Luger Stubblefield)

- (Schmidhuber 2015)

- ↑ Schmidhuber (2015).

- ↑ (Werbos 1988); (Robinson Fallside); (Williams Zipser)

- ↑ (Goodfellow Bengio); (Hochreiter 1991)

- ↑ (Hochreiter Schmidhuber); (Gers Schraudolph)

- ↑ Kobielus, James (27 November 2019). "GPUs Continue to Dominate the AI Accelerator Market for Now". InformationWeek. https://www.informationweek.com/ai-or-machine-learning/gpus-continue-to-dominate-the-ai-accelerator-market-for-now.

- ↑ Russell & Norvig (2009), p. 1.

- ↑ European Commission (2020), p. 1.

- ↑ CNN (2006).

- ↑

Targeted advertising:

- (Russell Norvig)

- (Economist 2016)

- (Lohr 2016)

- ↑ Lohr (2016).

- ↑ Smith (2016).

- ↑ Rowinski (2013).

- ↑ Frangoul (2019).

- ↑ Brown (2019).

- ↑ "Artificial intelligence, immune to fear or favour, is helping to make China's foreign policy". South China Morning Post. 2023-03-25. https://www.scmp.com/news/china/society/article/2157223/artificial-intelligence-immune-fear-or-favour-helping-make.

- ↑ McCorduck (2004), pp. 480–483.

- ↑ Markoff (2011).

- ↑ (Google 2016); (BBC 2016)

- ↑ Solly (2019).

- ↑ Bowling et al. (2015).

- ↑ Sample (2017).

- ↑ Heath (2020).

- ↑ Aletras et al. (2016).

- ↑ Anadiotis (2020).

- ↑ Vogels, Emily A. (24 May 2023). "A majority of Americans have heard of ChatGPT, but few have tried it themselves". Pew Research Center. https://www.pewresearch.org/short-reads/2023/05/24/a-majority-of-americans-have-heard-of-chatgpt-but-few-have-tried-it-themselves/.