Technological unemployment

Topic: Engineering

From HandWiki - Reading time: 58 min

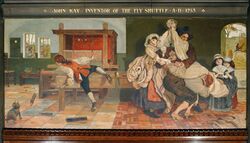

Technological unemployment is the loss of jobs due to technological change.[1][2][3] It is a key type of structural unemployment. Technological change typically includes the introduction of labour-saving "mechanical-muscle" machines or more efficient "mechanical-mind" processes (automation), which minimize humans' role in these processes.[4] Historical examples include artisan weavers losing work due to the introduction of mechanized looms, leading to protests by the Luddites. A contemporary example of technological unemployment is the displacement of retail cashiers by self-service tills and cashierless stores.

Technological change can cause short-term job losses in a specific profession or industry. Whether it leads to lasting increases in unemployment has been debated. The phrase "technological unemployment" was popularised by John Maynard Keynes in the 1930s, who said it was "only a temporary phase of maladjustment".[5]

Advances in artificial intelligence (AI) have reignited debates about the possibility of mass unemployment, or even the end of employment altogether. Some experts, such as Geoffrey Hinton, believe that the development of artificial general intelligence and advanced robotics will eventually enable the automation of all intellectual and physical tasks, suggesting the need for a basic income for non-workers to subsist.[6][7] Others, like Daron Acemoğlu, argue that humans will remain necessary for certain tasks, or complementary to AI, disrupting the labor market without necessarily causing mass unemployment.[8][9] The World Bank's 2019 World Development Report argues that while automation displaces workers, technological innovation creates more new industries and jobs on balance.[10]

History

Classical era

The issue of machines displacing human labour has been discussed since at least Aristotle's time.[11][12]

According to author Gregory Woirol, the phenomenon of technological unemployment is likely to have existed since at least the invention of the wheel.[13] Ancient societies had various methods for relieving the poverty of those unable to support themselves with their own labour. Ancient China and ancient Egypt may have had various centrally run relief programmes in response to technological unemployment dating back to at least the second millennium BC.[14] Ancient Hebrews and adherents of the ancient Vedic religion had decentralised responses where aiding the poor was encouraged by their faiths.[14] In ancient Greece, free labourers could find themselves unemployed due both to the effects of ancient labour-saving technology and to competition from slaves ("machines of flesh and blood"[15]). Sometimes these unemployed workers starved to death or were forced into slavery themselves, although in other cases they were supported by handouts. Pericles responded to perceived technological unemployment by launching public works programmes to provide paid work to the jobless. Pericles' programmes were criticized for wasting public money, but these criticisms were defeated.[16]

Perhaps the earliest example of a scholar discussing the phenomenon of technological unemployment occurs with Aristotle, who speculated in Book One of Politics that if machines could become sufficiently advanced, there would be no more need for human labour.[17] Similar to the Greeks, ancient Romans responded to the problem of technological unemployment by relieving poverty with handouts (such as the Cura Annonae). Several hundred thousand families were sometimes supported like this at once.[14] Less often, jobs were directly created with public works programmes, such as those launched by the Gracchi. Various emperors even went as far as to refuse or ban labour saving innovations.[18][19] In one instance, the introduction of a labor-saving invention was blocked, when Emperor Vespasian refused to allow a new method of low-cost transportation of heavy goods, saying "You must allow my poor hauliers to earn their bread."[20] Labour shortages began to develop in the Roman empire towards the end of the second century AD, and from this point mass unemployment in Europe appears to have largely receded for over a millennium.[21]

Post-classical era

The medieval and early renaissance period saw the widespread adoption of newly invented technologies, as well as older ones which had been conceived yet barely used in the Classical era.[22] Some were invented in Europe while others were invented in more Eastern countries like China, India, Arabia and Persia. The Black Death left fewer workers across Europe. Mass unemployment began to reappear in Europe, especially in Western, Central and Southern Europe in the 15th century, partly as a result of population growth, and partly due to changes in the availability of land for subsistence farming caused by early enclosures.[23] As a result of the threat of unemployment, there was less tolerance for disruptive new technologies. European authorities would often side with groups representing subsections of the working population, such as Guilds, banning new technologies and sometimes even executing those who tried to promote or trade in them.[24]

16th to 18th century

In Great Britain, the ruling elite began to take a less restrictive approach to innovation somewhat earlier than in much of continental Europe, which has been cited as a possible reason for Britain's early lead in driving the Industrial Revolution.[25] Yet concern over the impact of innovation on employment remained strong through the 16th and early 17th century. A famous example of new technology being refused occurred when the inventor William Lee invited Queen Elizabeth I to view a labour saving knitting machine. The Queen declined to issue a patent on the grounds that the technology might cause unemployment among textile workers. After moving to France and also failing to achieve success in promoting his invention, Lee returned to England but was again refused by Elizabeth's successor James I for the same reason.[26]

After the Glorious Revolution, authorities became less sympathetic to workers' concerns about losing their jobs due to innovation. An increasingly influential strand of Mercantilist thought held that introducing labour-saving technology would actually reduce unemployment, as it would allow British firms to increase their market share against foreign competition. From the early 18th century workers could no longer rely on support from the authorities against the perceived threat of technological unemployment. They would sometimes take direct action, such as machine breaking, in attempts to protect themselves from disruptive innovation. Joseph Schumpeter notes that as the 18th century progressed, thinkers would raise the alarm about technological unemployment with increasing frequency, with von Justi being a prominent example.[27] Yet Schumpeter also notes that the prevailing view among the elite solidified on the position that technological unemployment would not be a long-term problem.[26][23]

19th century

It was only in the 19th century that debates over technological unemployment became intense, especially in Great Britain where many economic thinkers of the time were concentrated. Building on the work of Dean Tucker and Adam Smith, political economists began to create what would become the modern discipline of economics.[note 1] While rejecting much of mercantilism, members of the new discipline largely agreed that technological unemployment would not be an enduring problem. In the first few decades of the 19th century, several prominent political economists did, however, argue against the optimistic view, claiming that innovation could cause long-term unemployment. These included Sismondi,[28] Malthus, J S Mill, and from 1821, David Ricardo himself.[29] As arguably the most respected political economist of his age, Ricardo's view was challenging to others in the discipline. The first major economist to respond was Jean-Baptiste Say, who argued that no one would introduce machinery if they were going to reduce the amount of product,[note 2] and that as Say's law states that supply creates its own demand, any displaced workers would automatically find work elsewhere once the market had had time to adjust.[30] Ramsey McCulloch expanded and formalised Say's optimistic views on technological unemployment, and was supported by others such as Charles Babbage, Nassau Senior and many other lesser known political economists. Towards the middle of the 19th century, Karl Marx joined the debates. Building on the work of Ricardo and Mill, Marx went much further, presenting a deeply pessimistic view of technological unemployment; his views attracted many followers and founded an enduring school of thought but mainstream economics was not dramatically changed. By the 1870s, at least in Great Britain, technological unemployment faded both as a popular concern and as an issue for academic debate. 
It had become increasingly apparent that innovation was increasing prosperity for all sections of British society, including the working class. As the classical school of thought gave way to neoclassical economics, mainstream thinking was tightened to take into account and refute the pessimistic arguments of Mill and Ricardo.[31]

20th century

For the first two decades of the 20th century, mass unemployment was not the major problem it had been in the first half of the 19th. While the Marxist school and a few other thinkers continued to challenge the optimistic view, technological unemployment was not a significant concern for mainstream economic thinking until the mid to late 1920s. In the 1920s mass unemployment re-emerged as a pressing issue within Europe. At this time the U.S. was generally more prosperous, but even there urban unemployment had begun to increase from 1927. Rural American workers had been suffering job losses from the start of the 1920s; many had been displaced by improved agricultural technology, such as the tractor. The centre of gravity for economic debates had by this time moved from Great Britain to the United States, and it was here that the 20th century's two great periods of debate over technological unemployment largely occurred.[32]

The peak periods for the two debates were in the 1930s and the 1960s. According to economic historian Gregory R Woirol, the two episodes share several similarities.[33] In both cases academic debates were preceded by an outbreak of popular concern, sparked by recent rises in unemployment. In both cases the debates were not conclusively settled, but faded away as unemployment was reduced by an outbreak of war – World War II for the debate of the 1930s, and the Vietnam War for the 1960s episodes. In both cases, the debates were conducted within the prevailing paradigm at the time, with little reference to earlier thought. In the 1930s, optimists based their arguments largely on neo-classical beliefs in the self-correcting power of markets to reduce any short-term unemployment via compensation effects. In the 1960s, belief in compensation effects was less strong, but the mainstream Keynesian economists of the time largely believed government intervention would be able to counter any persistent technological unemployment that was not cleared by market forces. Another similarity was the publication of a major Federal study towards the end of each episode, which broadly found that long-term technological unemployment was not occurring (though the studies did agree innovation was a major factor in the short term displacement of workers, and advised government action to provide assistance).[note 3][33]

As the golden age of capitalism came to a close in the 1970s, unemployment once again rose, and this time generally remained relatively high for the rest of the century, across most advanced economies. Several economists once again argued that this may be due to innovation, with perhaps the most prominent being Paul Samuelson.[34] Overall, the closing decades of the 20th century saw most concern expressed over technological unemployment in Europe, though there were several examples in the U.S.[35] A number of popular works warning of technological unemployment were also published. These included James S. Albus's 1976 book titled Peoples' Capitalism: The Economics of the Robot Revolution;[36][37] David F. Noble with works published in 1984[38] and 1993;[39] Jeremy Rifkin and his 1995 book The End of Work;[40] and the 1996 book The Global Trap.[41] Yet for the most part, other than during the periods of intense debate in the 1930s and 60s, the consensus in the 20th century among both professional economists and the general public remained that technology does not cause long-term joblessness.[42]

21st century

Opinions

Prof. Mark MacCarthy (2014)[43]

The general consensus that innovation does not cause long-term unemployment held strong for the first decade of the 21st century although it continued to be challenged by a number of academic works,[44][45] and by popular works such as Marshall Brain's Robotic Nation[46] and Martin Ford's The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future.[47]

Since the publication of their 2011 book Race Against the Machine, MIT professors Andrew McAfee and Erik Brynjolfsson have been prominent among those raising concern about technological unemployment. The two professors remain relatively optimistic, however, stating "the key to winning the race is not to compete against machines but to compete with machines".[48][49][50][51][52][53][54]

Concern about technological unemployment grew in 2013 due in part to a number of studies predicting substantially increased technological unemployment in forthcoming decades and empirical evidence that, in certain sectors, employment is falling worldwide despite rising output, thus discounting globalization and offshoring as the only causes of increasing unemployment.[55][26][56]

In 2013, professor Nick Bloom of Stanford University stated there had recently been a major change of heart concerning technological unemployment among his fellow economists.[57] In 2014 the Financial Times reported that the impact of innovation on jobs has been a dominant theme in recent economic discussion.[58] According to the academic and former politician Michael Ignatieff writing in 2014, questions concerning the effects of technological change have been "haunting democratic politics everywhere".[59] Concerns have included evidence showing worldwide falls in employment across sectors such as manufacturing; falls in pay for low and medium skilled workers stretching back several decades even as productivity continues to rise; the increase in often precarious platform mediated employment; and the occurrence of "jobless recoveries" after recent recessions. The 21st century has seen a variety of skilled tasks partially taken over by machines, including translation, legal research and even low level journalism. Care work, entertainment, and other tasks requiring empathy, previously thought safe from automation, have also begun to be performed by robots.[55][26][60][61][62][63]

Former U.S. Treasury Secretary and Professor of Economics at Harvard University Lawrence Summers stated in 2014 that he no longer believed automation would always create new jobs and that "This isn't some hypothetical future possibility. This is something that's emerging before us right now." Summers noted that already, more labor sectors were losing jobs than creating new ones.[note 4][64][65][66][67] While himself doubtful about technological unemployment, professor Mark MacCarthy stated in the fall of 2014 that it is now the "prevailing opinion" that the era of technological unemployment has arrived.[43]

At the 2014 Davos meeting, Thomas Friedman reported that the link between technology and unemployment seemed to have been the dominant theme of that year's discussions. A survey at Davos 2014 found that 80% of 147 respondents agreed that technology was driving jobless growth.[68] At the 2015 Davos meeting, Gillian Tett found that almost all delegates attending a discussion on inequality and technology expected an increase in inequality over the next five years, and gave the reason for this as the technological displacement of jobs.[69] The year 2015 also saw Martin Ford win the Financial Times and McKinsey Business Book of the Year Award for his Rise of the Robots: Technology and the Threat of a Jobless Future, as well as the first world summit on technological unemployment, held in New York. In late 2015, further warnings of potential worsening for technological unemployment came from Andy Haldane, the Bank of England's chief economist, and from Ignazio Visco, the governor of the Bank of Italy.[70][71] In an October 2016 interview, US President Barack Obama said that due to the growth of artificial intelligence, society would be debating "unconditional free money for everyone" within 10 to 20 years.[72] In 2019, computer scientist and artificial intelligence expert Stuart J. Russell stated that "in the long run nearly all current jobs will go away, so we need fairly radical policy changes to prepare for a very different future economy." In a book he authored, Russell claims that "One rapidly emerging picture is that of an economy where far fewer people work because work is unnecessary." However, he predicted that employment in healthcare, home care, and construction would increase.[73]

Other economists have argued that long-term technological unemployment is unlikely. In 2014, Pew Research canvassed 1,896 technology professionals and economists and found a split of opinion: 48% of respondents believed that new technologies would displace more jobs than they would create by the year 2025, while 52% maintained that they would not.[74] Economics professor Bruce Chapman from Australian National University has advised that studies such as Frey and Osborne's tend to overstate the probability of future job losses, as they do not account for new employment likely to be created, due to technology, in what are currently unknown areas.[75] Small and mid-sized businesses have created a large number of new jobs around the world, giving entrepreneurs and investors the freedom to create and grow businesses as new technologies emerge.[76] On this view, the workers needed to staff these new businesses would improve the world's employment situation, replacing jobs that were previously lost.

General public surveys have often found an expectation that automation would impact jobs widely, but not the jobs held by those particular people surveyed.[77]

In the early 2020s, generative AI and automation reignited debate over systemic job displacement versus emergent roles in AI-enabled services and new digital industries. Economic analyses point to increased worker transitions into AI supervision and creative task domains.[78] In July 2025, Ford CEO Jim Farley predicted that "artificial intelligence is going to replace literally half of all white-collar workers in the U.S."[79] In October 2025, U.S. Senator Bernie Sanders raised concerns about job displacement due to artificial intelligence, citing a report that estimated potential job losses of up to 100 million over the next decade.[80] He proposed a "robot tax" that would protect workers from the impacts of artificial intelligence.[81]

Studies

A number of studies have predicted that automation will take a large proportion of jobs in the future, but estimates of the level of unemployment this will cause vary. Research by Carl Benedikt Frey and Michael Osborne of the Oxford Martin School showed that employees engaged in "tasks following well-defined procedures that can easily be performed by sophisticated algorithms" are at risk of displacement. The study, published in 2013, shows that automation can affect both skilled and unskilled work and both high and low-paying occupations; however, low-paid physical occupations are most at risk. It estimated that 47% of US jobs were at high risk of automation.[26] In 2014, the economic think tank Bruegel released a study, based on the Frey and Osborne approach, claiming that across the European Union's 28 member states, 54% of jobs were at risk of automation. The countries where jobs were least vulnerable to automation were Sweden, with 46.69% of jobs vulnerable, the UK at 47.17%, the Netherlands at 49.50%, and France and Denmark, both at 49.54%. The countries where jobs were found to be most vulnerable were Romania at 61.93%, Portugal at 58.94%, Croatia at 57.9%, and Bulgaria at 56.56%.[82][83] A 2015 report by the Taub Center found that 41% of jobs in Israel were at risk of being automated within the next two decades.[84] In January 2016, a joint study by the Oxford Martin School and Citibank, based on previous studies on automation and data from the World Bank, found that the risk of automation in developing countries was much higher than in developed countries. It found that 77% of jobs in China, 69% of jobs in India, 85% of jobs in Ethiopia, and 55% of jobs in Uzbekistan were at risk of automation.[85] The World Bank similarly employed the methodology of Frey and Osborne. 
A 2016 study by the International Labour Organization found that 74% of salaried electrical and electronics industry positions in Thailand, 75% in Vietnam, 63% in Indonesia, and 81% in the Philippines were at high risk of automation.[86] A 2016 United Nations report stated that 75% of jobs in the developing world were at risk of automation, and predicted that more jobs might be lost when corporations stop outsourcing to developing countries after automation in industrialized countries makes it less lucrative to outsource to countries with lower labor costs.[87]

The Council of Economic Advisers, a US government agency tasked with providing economic research for the White House, used the data from the Frey and Osborne study in the 2016 Economic Report of the President to estimate that 83% of jobs with an hourly wage below $20, 31% of jobs with an hourly wage between $20 and $40, and 4% of jobs with an hourly wage above $40 were at risk of automation.[88] A 2016 study by Ryerson University (now Toronto Metropolitan University) found that 42% of jobs in Canada were at risk of automation, dividing them into two categories: "high risk" jobs and "low risk" jobs. High risk jobs were mainly lower-income jobs that required lower education levels than average. Low risk jobs were on average more skilled positions. The report found a 70% chance that high risk jobs and a 30% chance that low risk jobs would be affected by automation in the next 10–20 years.[89] A 2017 study by PricewaterhouseCoopers found that up to 38% of jobs in the US, 35% of jobs in Germany, 30% of jobs in the UK, and 21% of jobs in Japan were at high risk of being automated by the early 2030s.[90] A 2017 study by Ball State University found about half of American jobs were at risk of automation, many of them low-income jobs.[91] A September 2017 report by McKinsey & Company found that as of 2015, 478 billion out of 749 billion working hours per year dedicated to manufacturing, or $2.7 trillion out of $5.1 trillion in labor, were already automatable. In low-skill areas, 82% of labor in apparel goods, 80% of agriculture processing, 76% of food manufacturing, and 60% of beverage manufacturing were subject to automation. In mid-skill areas, 72% of basic materials production and 70% of furniture manufacturing were automatable.
In high-skill areas, 52% of aerospace and defense labor and 50% of advanced electronics labor could be automated.[92] In October 2017, a survey of information technology decision makers in the US and UK found that a majority believed that most business processes could be automated by 2022. On average, they said that 59% of business processes were subject to automation.[93] A November 2017 report by the McKinsey Global Institute that analyzed around 800 occupations in 46 countries estimated that between 400 million and 800 million jobs could be lost due to robotic automation by 2030. It estimated that jobs were more at risk in developed countries than developing countries due to a greater availability of capital to invest in automation.[94] Job losses and downward mobility blamed on automation have been cited as one of many factors in the resurgence of nationalist and protectionist politics in the US, UK and France, among other countries.[95][96][97][98][99]
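The McKinsey figures above are quoted as absolute totals; converting them to shares makes them easier to compare with the percentage estimates from other studies. The following is only an illustrative arithmetic sketch in Python: the helper name `share_pct` is hypothetical, and the inputs are simply the numbers quoted in the text, not additional data.

```python
def share_pct(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`."""
    return 100.0 * part / whole

# Figures as quoted from the September 2017 McKinsey report (2015 data).
hours_share = share_pct(478, 749)   # billions of manufacturing working hours per year
value_share = share_pct(2.7, 5.1)   # trillions of US dollars in labor

print(f"Automatable share of working hours: {hours_share:.1f}%")
print(f"Automatable share of labor value:   {value_share:.1f}%")
```

On these quoted totals, roughly 64% of manufacturing hours but only about 53% of the corresponding labor value would be automatable, which is consistent with the report's finding that lower-skill manufacturing work is more exposed.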

However, not all recent empirical studies have found evidence to support the idea that automation will cause widespread unemployment. A study released in 2015, examining the impact of industrial robots in 17 countries between 1993 and 2007, found that the robots caused no overall reduction in employment, and that there was a slight increase in overall wages.[100] According to a study published in McKinsey Quarterly[101] in 2015, the impact of computerization in most cases is not replacement of employees but automation of portions of the tasks they perform.[102] A 2016 OECD study found that among the 21 OECD countries surveyed, on average only 9% of jobs were in foreseeable danger of automation, but this varied greatly among countries: for example, in South Korea the share of at-risk jobs was 6%, while in Austria it was 12%.[103] In contrast to other studies, the OECD study does not base its assessment solely on the tasks that a job entails, but also includes demographic variables, including sex, education and age. In 2017, Forrester estimated that automation would result in a net loss of about 7% of jobs in the US by 2027, replacing 17% of jobs while creating new jobs equivalent to 10% of the workforce.[104] Another study argued that the risk to US jobs from automation had been overestimated because factors such as the heterogeneity of tasks within occupations and the adaptability of jobs had been neglected. The study found that once this was taken into account, the share of occupations at risk of automation in the US drops, ceteris paribus, from 38% to 9%.[105] A 2017 study on the effect of automation on Germany found no evidence that automation caused total job losses, but found that it did affect the jobs people were employed in; losses in the industrial sector due to automation were offset by gains in the service sector.
Manufacturing workers were also not at risk from automation and were in fact more likely to remain employed, though not necessarily doing the same tasks. However, automation did result in a decrease in labour's income share as it raised productivity but not wages.[106]
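The distinction between gross displacement and net job change, which underlies the Forrester estimate quoted above, can be made explicit with a short calculation. This is a minimal sketch rather than Forrester's methodology: the function name `net_change_pct` is hypothetical, and it assumes that both percentages refer to the same workforce base.

```python
def net_change_pct(displaced_pct: float, created_pct: float) -> float:
    """Net change in jobs as a percentage of the workforce.

    Assumes both inputs are expressed against the same workforce base.
    A negative result is a net loss.
    """
    return created_pct - displaced_pct

# Forrester's 2017 estimate for the US by 2027, as quoted in the text.
forrester_net = net_change_pct(displaced_pct=17, created_pct=10)
print(f"Net change: {forrester_net}% of jobs")
```

The result is a net change of -7%, matching the "net loss of about 7%" in the text. Studies that report only the displaced share, without an offsetting estimate of job creation, are not directly comparable with net figures of this kind.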

A 2018 Brookings Institution study that analyzed 28 industries in 18 OECD countries from 1970 to 2018 found that automation was responsible for holding down wages. Although it concluded that automation did not reduce the overall number of jobs available and even increased them, it found that from the 1970s to the 2010s, it had reduced the share of human labor in the value added to the work, and thus had helped to slow wage growth.[107] In April 2018, Adair Turner, former Chairman of the Financial Services Authority and head of the Institute for New Economic Thinking, stated that it would already be possible to automate 50% of jobs with current technology, and that it will be possible to automate all jobs by 2060.[108]

Premature deindustrialization

Premature deindustrialization occurs when developing nations deindustrialize without first becoming rich, as happened with the advanced economies. The concept was popularized by Dani Rodrik in 2013, who went on to publish several papers showing the growing empirical evidence for the phenomenon. Premature deindustrialization adds to concern over technological unemployment for developing countries, because the traditional compensation effects that advanced economy workers enjoyed, such as being able to get well paid work in the service sector after losing their factory jobs, may not be available.[109][110]

Some commentators, such as Carl Benedikt Frey, argue that with the right responses, the negative effects of further automation on workers in developing economies can still be avoided.[111]

Artificial intelligence

Since about 2017, a new wave of concern over technological unemployment has become prominent, this time over the effects of artificial intelligence (AI).[112] Commentators including Calum Chace and Daniel Hulme have warned that if unchecked, AI threatens to cause an "economic singularity", with job churn too rapid for humans to adapt to, leading to widespread technological unemployment. However, they also advise that with the right responses by business leaders, policy makers and society, the impact of AI could be a net positive for workers.[113][114]

Morgan R. Frank et al. caution that there are several barriers preventing researchers from making accurate predictions of the effects AI will have on future job markets.[115] Marian Krakovsky has argued that the jobs most likely to be completely replaced by AI are in middle-class areas, such as professional services. Often, the practical solution is to find another job, but workers may not have the qualifications for high-level jobs and so must drop to lower-level jobs. However, Krakovsky (2018) predicts that AI will largely take the route of "complementing people" rather than "replicating people", suggesting that the goal of people implementing AI is to improve the life of workers, not replace them.[116] Studies have also shown that, rather than solely destroying jobs, AI can also create work, albeit low-skill jobs training AI in low-income countries.[117]

Following Russian president Vladimir Putin's 2017 statement that whichever country first achieves mastery in AI "will become the ruler of the world", various national and supranational governments have announced AI strategies. Concerns on not falling behind in the AI arms race have been more prominent than worries over AI's potential to cause unemployment. Several strategies suggest that achieving a leading role in AI should help their citizens get more rewarding jobs. Finland has aimed to help the citizens of other EU nations acquire the skills they need to compete in the post-AI jobs market, making a free course on "The Elements of AI" available in multiple European languages.[118][119][120] Oracle CEO Mark Hurd predicted that AI "will actually create more jobs, not less jobs" as humans will be needed to manage AI systems.[121]

Martin Ford argues that many jobs are routine, repetitive and (to an AI) predictable; Ford warns that these jobs may be automated in the next couple of decades, and that many of the new jobs may not be "accessible to people with average capability", even with retraining.[122]

Certain digital technologies are predicted to result in more job losses than others. For example, in recent years the adoption of modern robotics has led to net employment growth. However, many businesses anticipate that automation, or employing robots, will result in job losses in the future. This is especially true for companies in Central and Eastern Europe.[123][124][125]

Other digital technologies, such as platforms or big data, are projected to have a more neutral impact on employment.[123][125]

Entry-level job offers in the U.S. and U.K. have dropped by 33%. Unemployment among university graduates hit a record high, rising above the general unemployment rate for the first time.[126] In the US, the unemployment rate for college graduates was about 5.8% in 2025, a jump of about 30% since 2022.[127] In a survey of more than 850 business leaders across the UK, US, France, Germany, Australia, China and Japan, 41% of bosses reported that AI was allowing them to cut staffing at their firms.[128] In Australia, 49% of larger firms and 37% of small and medium enterprises have cut roles as a result of AI.[129]

In 2024, the right-wing think tank American Enterprise Institute argued that the "jobpocalypse" did not exist.[130]

Issues within the debates

Long-term effects on employment

Lawrence Summers[64]

Participants in the technological unemployment debates agree that temporary job losses can result from technological innovation. Similarly, there is no dispute that innovation sometimes has positive effects on workers. Disagreement focuses on whether it is possible for innovation to have a lasting negative impact on overall employment. Levels of persistent unemployment can be quantified empirically, but the causes are subject to debate. Optimists accept that short-term unemployment may be caused by innovation, yet claim that after a while, compensation effects will always create at least as many jobs as were originally destroyed. While this optimistic view has been continually challenged, it was dominant among mainstream economists for most of the 19th and 20th centuries.[131][132] For example, labor economists Jacob Mincer and Stephan Danninger conducted an empirical study using data from the Panel Study of Income Dynamics and found that although technological progress seems to have unclear effects on aggregate unemployment in the short run, it reduces unemployment in the long run. When they included a 5-year lag, however, the evidence supporting a short-run employment effect of technology seemed to disappear as well, suggesting that technological unemployment "appears to be a myth".[133] Other studies, on the other hand, suggest that the labour-market effects of technologies such as industrial robots strongly depend on domestic institutional context.[134]

The concept of structural unemployment, a lasting level of joblessness that does not disappear even at the high point of the business cycle, became popular in the 1960s. For pessimists, technological unemployment is one of the factors driving the wider phenomenon of structural unemployment. Since the 1980s, even optimistic economists have increasingly accepted that structural unemployment has indeed risen in advanced economies, but they have tended to attribute this to globalisation and offshoring rather than technological change. Others claim a chief cause of the lasting increase in unemployment has been the reluctance of governments to pursue expansionary policies since the displacement of Keynesianism that occurred in the 1970s and early 80s.[131][135][44] In the 21st century, and especially since 2013, pessimists have been arguing with increasing frequency that lasting worldwide technological unemployment is a growing threat.[132][55][26][136]

Compensation effects

Compensation effects are labour-friendly consequences of innovation which "compensate" workers for job losses initially caused by new technology. In the 1820s, several compensation effects were described by Jean-Baptiste Say in response to Ricardo's statement that long-term technological unemployment could occur. Soon after, a whole system of effects was developed by John Ramsay McCulloch. The system was labelled "compensation theory" by Karl Marx, who criticized its ideas, arguing that none of the effects were guaranteed to operate. Disagreement over the effectiveness of compensation effects has remained a central part of academic debates on technological unemployment ever since.[44][137]

Compensation effects include:

- By new machines. (The labour needed to build the new equipment that applied innovation requires.)

- By new investments. (Enabled by the cost savings and therefore increased profits from the new technology.)

- By changes in wages. (In cases where unemployment does occur, this can cause a lowering of wages, thus allowing more workers to be re-employed at the now lower cost. On the other hand, sometimes workers will enjoy wage increases as their profitability rises. This leads to increased income and therefore increased spending, which in turn encourages job creation.)

- By lower prices. (Which then lead to more demand, and therefore more employment.) Lower prices can also help offset wage cuts, as cheaper goods will increase workers' buying power.

- By new products. (Where innovation directly creates new jobs.)

The "by new machines" effect is now rarely discussed by economists; it is often accepted that Marx successfully refuted it.[44] Even pessimists often concede that product innovation associated with the "by new products" effect can sometimes have a positive effect on employment. An important distinction can be drawn between 'process' and 'product' innovations.[note 5] Evidence from Latin America seems to suggest that product innovation contributes significantly to employment growth at the firm level, more so than process innovation.[138] The extent to which the other effects are successful in compensating the workforce for job losses has been extensively debated throughout the history of modern economics; the issue is still not resolved.[44][45] One effect that potentially complements the compensation effects is the job multiplier. According to research by Enrico Moretti, with each additional skilled job created in high-tech industries in a given city, more than two jobs are created in the non-tradable sector. His findings suggest that technological growth and the resulting job creation in high-tech industries might have a more significant spillover effect than anticipated.[139] Evidence from Europe also supports such a job multiplier effect, showing that each local high-tech job could create five additional low-tech jobs.[140]
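As a rough illustration of the multiplier arithmetic described above, the following sketch computes the additional local jobs implied by a simple linear multiplier. The function name `implied_local_jobs` and the figure of 1,000 new high-tech jobs are hypothetical; the multipliers of 2 and 5 are round numbers taken from the text ("more than two" and "five"), not estimates reproduced from the underlying studies.

```python
def implied_local_jobs(new_high_tech_jobs: int, multiplier: float) -> float:
    """Return the additional local jobs implied by a simple linear multiplier."""
    if new_high_tech_jobs < 0 or multiplier < 0:
        raise ValueError("inputs must be non-negative")
    return new_high_tech_jobs * multiplier


# Hypothetical city gaining 1,000 high-tech jobs.
# A multiplier of 2.0 is a lower bound for the "more than two" figure in the text.
us_spillover = implied_local_jobs(1000, 2.0)
# A multiplier of 5.0 corresponds to the "five additional" figure for Europe.
europe_spillover = implied_local_jobs(1000, 5.0)

print(us_spillover, europe_spillover)
```

A linear multiplier is the simplest possible reading; it ignores any variation in the effect across cities or over time.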

Many economists pessimistic about technological unemployment accept that compensation effects did largely operate as the optimists claimed through most of the 19th and 20th centuries. Yet they hold that the advent of computerisation means that compensation effects have become less effective. An early example of this argument was made by Wassily Leontief in 1983. He conceded that after some disruption, the advance of mechanization during the Industrial Revolution increased the demand for labour as well as increasing pay due to effects that flow from increased productivity.[141] While early machines lowered the demand for muscle power, they were unintelligent and needed large numbers of human operators to remain productive. Yet since the introduction of computers into the workplace, there is now less need not just for muscle power but also for human brain power. Hence even as productivity continues to rise, the lower demand for human labour may mean less pay and employment.[44][26]

Luddite fallacy

The term "Luddite fallacy" is sometimes used to express the view that those concerned about long-term technological unemployment are committing a fallacy, as they fail to account for compensation effects. People who use the term typically expect that technological progress will have no long-term impact on employment levels, and eventually will raise wages for all workers, because progress helps to increase the overall wealth of society. The term originates from the Luddites, members of an early 19th-century English anti-textile-machinery organisation. During the 20th century and the first decade of the 21st century, the dominant view among economists was that belief in long-term technological unemployment was indeed a fallacy. More recently, there has been increased support for the view that the benefits of automation are not equally distributed.[132][142][143]

There are two different theories for why long-term difficulty could develop.

- The first, traditionally ascribed to the Luddites (accurately or not), holds that there is a finite amount of work available and that if machines do it, there can be none left for humans. Economists may call this the lump of labour fallacy, arguing that in reality no such limitation exists.

- The second holds that a long-term difficulty can arise that has nothing to do with any lump of labour. In this view, the amount of work that can exist is infinite, but:

  - machines can do most of the "easy" work that requires less skill, talent, knowledge, or insight,

  - the definition of what is "easy" expands as information technology progresses, and

  - the work that lies beyond "easy" may require greater brainpower than most people have.

This second view is supported by many modern advocates of the possibility of long-term, systemic technological unemployment.

In his 2018 book Bullshit Jobs, David Graeber addresses why mass unemployment has never materialised despite widespread expectation, why total hours worked and the length of the workweek have not substantially declined since the 1930s, and why overwork is instead considered a pervasive problem. He argues that genuinely necessary jobs lost to automation have been replaced by jobs, typically white-collar, whose relevance to the economy is unclear and which do not respond to any genuine market demand (as opposed especially to essential labour such as care work, which is part of critical infrastructure). Even those employed in these jobs often cannot justify them and find them pointless.[144]

Skill levels and technological unemployment

A frequent view among those discussing the effect of innovation on the labour market has been that it mainly hurts those with low skills, while often benefiting skilled workers. According to scholars such as Lawrence F. Katz, this may have been true for much of the twentieth century, yet in the 19th century, innovations in the workplace largely displaced costly skilled artisans, and generally benefited the low skilled. While 21st century innovation has been replacing some unskilled work, other low skilled occupations remain resistant to automation, while white collar work requiring intermediate skills is increasingly being performed by autonomous computer programs.[145][146][147]

Some recent studies, however, such as a 2015 paper by Georg Graetz and Guy Michaels, found that at least in the area they studied – the impact of industrial robots – innovation is boosting pay for highly skilled workers while having a more negative impact on those with low to medium skills.[100] A 2015 report by Carl Benedikt Frey, Michael Osborne and Citi Research agreed that innovation had been disruptive mostly to middle-skilled jobs, yet predicted that in the next ten years the impact of automation would fall most heavily on those with low skills.[148]

Geoffrey Colvin at Forbes argued that predictions on the kind of work a computer will never be able to do have proven inaccurate. A better approach to anticipating the skills on which humans will provide value would be to identify activities where we will insist that humans remain accountable for important decisions, such as those made by judges, CEOs, bus drivers and government leaders, or where human nature can only be satisfied by deep interpersonal connections, even if those tasks could be automated.[149]

In contrast, others see even skilled human laborers becoming obsolete. Oxford academics Carl Benedikt Frey and Michael A. Osborne have predicted computerization could make nearly half of jobs redundant;[150] of the 702 professions assessed, they found a strong correlation of education and income with susceptibility to automation, with office jobs and service work being some of the more at risk.[151] In 2012, Sun Microsystems co-founder Vinod Khosla predicted that 80% of medical doctors' jobs would be lost in the next two decades to automated machine learning medical diagnostic software.[152] A recent study by researchers at the University of Oxford also shows that certain applications of artificial intelligence, such as machine translation, have significantly reduced the demand for translators and language skills in the US.[153]

The issue of redundant jobs is elaborated in a 2019 paper by Natalya Kozlova, according to which over 50% of workers in Russia perform work that requires low levels of education and can be replaced by digital technologies. Only 13% of those people possess education that exceeds the level of intellectual computer systems present today and expected within the following decade.[154]

Empirical findings

There has been a significant amount of empirical research that attempts to quantify the impact of technological unemployment, mainly at the microeconomic level. Most existing firm-level research has found technological innovations to be labor-friendly. For example, German economists Stefan Lachenmaier and Horst Rottmann find that both product and process innovation have a positive effect on employment. They also find that process innovation has a more significant job creation effect than product innovation.[155] This result is supported by evidence in the United States as well, which shows that manufacturing firm innovations have a positive effect on the total number of jobs, not just limited to firm-specific behavior.[156]

At the industry level, however, researchers have found mixed results with regard to the employment effect of technological changes. A 2017 study on manufacturing and service sectors in 11 European countries suggests that positive employment effects of technological innovations only exist in the medium- and high-tech sectors. There also seems to be a negative correlation between employment and capital formation, which suggests that technological progress could potentially be labor-saving given that process innovation is often incorporated in investment.[157]

Limited macroeconomic analysis has been done to study the relationship between technological shocks and unemployment. The small amount of existing research, however, suggests mixed results. Italian economist Marco Vivarelli finds that the labor-saving effect of process innovation appears to have affected the Italian economy more negatively than that of the United States. On the other hand, the job-creating effect of product innovation could only be observed in the United States, not Italy.[158] Another study in 2013 finds a more transitory, rather than permanent, unemployment effect of technological change.[159]

A 2019 study found it unlikely that new technologies would "cause wages for all workers to fall." The study found that under plausible assumptions, new technologies "will cause average wages to rise if the prices of investment goods fall relative to consumer goods (a condition supported by the data)."[160]

A 2013 study found that the labor market effects of technology tended to be geographically dispersed across the United States, in contrast to the labor market effects of trade which tended to be more concentrated geographically.[161]

Measures of technological innovation

There have been four main approaches that attempt to capture and document technological innovation quantitatively. The first one, proposed by Jordi Gali in 1999 and further developed by Neville Francis and Valerie A. Ramey in 2005, is to use long-run restrictions in a vector autoregression (VAR) to identify technological shocks, assuming that only technology affects long-run productivity.[162][163]
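One common way to impose a long-run restriction of this kind can be sketched as follows. The example assumes a bivariate VAR(1) in productivity growth and hours growth with made-up coefficients; it is a minimal illustration of the identification idea, not the specification used in the cited papers. All names (`A`, `SIGMA`, `long_run_identification`) are hypothetical.

```python
import math

Matrix = list[list[float]]  # 2x2 matrices stored as nested lists


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def transpose(a: Matrix) -> Matrix:
    return [[a[j][i] for j in range(2)] for i in range(2)]


def inverse(a: Matrix) -> Matrix:
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    if det == 0:
        raise ValueError("matrix is singular")
    return [[a[1][1] / det, -a[0][1] / det],
            [-a[1][0] / det, a[0][0] / det]]


def cholesky(s: Matrix) -> Matrix:
    """Lower-triangular factor L of a 2x2 positive definite matrix (s = L L')."""
    l11 = math.sqrt(s[0][0])
    l21 = s[1][0] / l11
    l22 = math.sqrt(s[1][1] - l21 * l21)
    return [[l11, 0.0], [l21, l22]]


def long_run_identification(a: Matrix, sigma: Matrix) -> tuple[Matrix, Matrix]:
    """Identify structural shocks of x_t = a x_{t-1} + u_t, Cov(u) = sigma.

    Returns (impact, long_run), where u_t = impact @ e_t with Cov(e) = I and
    long_run = (I - a)^{-1} @ impact is forced to be lower triangular.
    """
    i_minus_a = [[1 - a[0][0], -a[0][1]], [-a[1][0], 1 - a[1][1]]]
    c1 = inverse(i_minus_a)                      # cumulative reduced-form response
    long_run_cov = matmul(matmul(c1, sigma), transpose(c1))
    long_run = cholesky(long_run_cov)            # zero in the upper-right entry
    impact = matmul(i_minus_a, long_run)
    return impact, long_run


# Illustrative (assumed) reduced-form estimates; variable 0 is productivity growth.
A = [[0.2, 0.1], [0.3, 0.4]]
SIGMA = [[1.0, 0.3], [0.3, 0.5]]
impact, long_run = long_run_identification(A, SIGMA)
print(long_run)
```

With productivity growth ordered first, the zero in the upper-right entry of `long_run` encodes the assumption that the second (non-technology) shock has no long-run effect on productivity, so the first shock is interpreted as the technology shock.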

The second approach is from Susanto Basu, John Fernald and Miles Kimball.[164] They create a measure of aggregate technology change with augmented Solow residuals, controlling for aggregate, non-technological effects such as non-constant returns and imperfect competition.[citation needed]
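For orientation, the plain (unaugmented) Solow residual that such measures start from can be sketched as below. It assumes a Cobb-Douglas technology with constant returns and a known capital share; the corrections for non-constant returns and imperfect competition mentioned above are not reproduced here. The function name and the growth figures are hypothetical.

```python
import math


def solow_residual(y0: float, y1: float, k0: float, k1: float,
                   l0: float, l1: float, capital_share: float) -> float:
    """Log growth in output not explained by growth in capital and labour.

    Assumes Y = A * K**alpha * L**(1 - alpha) with alpha = capital_share.
    """
    if not 0.0 <= capital_share <= 1.0:
        raise ValueError("capital_share must lie between 0 and 1")
    dy = math.log(y1 / y0)
    dk = math.log(k1 / k0)
    dl = math.log(l1 / l0)
    return dy - capital_share * dk - (1.0 - capital_share) * dl


# Assumed example: log growth of 3% in output, 2% in capital, 1% in labour.
residual = solow_residual(
    100.0, 100.0 * math.exp(0.03),
    300.0, 300.0 * math.exp(0.02),
    50.0, 50.0 * math.exp(0.01),
    capital_share=0.3,
)
print(round(residual, 4))
```

In this example the residual is 0.03 - 0.3 × 0.02 - 0.7 × 0.01 = 0.017, that is, about 1.7% of output growth is attributed to technology under the stated assumptions.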

The third method, initially developed by John Shea in 1999, takes a more direct approach and employs observable indicators such as research and development (R&D) spending, and number of patent applications.[165] This measure of technological innovation is widely used in empirical research, since it does not rely on the assumption that only technology affects long-run productivity, and fairly accurately captures output variation based on input variation. However, there are limitations with direct measures such as R&D. For example, since R&D only measures the input in innovation, the output is unlikely to be perfectly correlated with the input. In addition, R&D fails to capture the indeterminate lag between developing a new product or service, and bringing it to market.[166]

The fourth approach, constructed by Michelle Alexopoulos, looks at the number of new titles published in the fields of technology and computer science to reflect technological progress, which she found to be consistent with R&D expenditure data.[167] Compared with R&D, this indicator captures the lag between changes in technology.

Solutions

Preventing net job losses

Banning/refusing innovation

Historically, innovations were sometimes banned due to concerns about their impact on employment. Since the development of modern economics, however, this option has generally not even been considered as a solution, at least not for the advanced economies. Even commentators who are pessimistic about long-term technological unemployment invariably consider innovation to be an overall benefit to society, with J. S. Mill being perhaps the only prominent western political economist to have suggested prohibiting the use of technology as a possible solution to unemployment.[137]

Gandhian economics called for a delay in the uptake of labour-saving machines until unemployment was alleviated; however, this advice was largely rejected by Nehru, who was to become prime minister once India achieved its independence. The policy of slowing the introduction of innovation so as to avoid technological unemployment was, however, implemented in the 20th century within China under Mao's administration.[169][170][171]

Shorter working hours

In 1870, the average American worker clocked up about 75 hours per week. Just prior to World War II working hours had fallen to about 42 per week, and the fall was similar in other advanced economies. According to Wassily Leontief, this was a voluntary increase in technological unemployment. The reduction in working hours helped share out available work, and was favoured by workers who were happy to reduce hours to gain extra leisure, as innovation was at the time generally helping to increase their rates of pay.[141]

Further reductions in working hours have been proposed as a possible solution to unemployment by economists including John R. Commons, Lord Keynes and Luigi Pasinetti. Yet once working hours have reached about 40 hours per week, workers have been less enthusiastic about further reductions, both to prevent loss of income and because many value engaging in work for its own sake. Generally, 20th-century economists argued against further reductions as a solution to unemployment, saying it reflects a lump of labour fallacy.[172] In 2014, Google's co-founder, Larry Page, suggested a four-day workweek, so that, as technology continues to displace jobs, more people can find employment.[65][173][174]

Public works

Programmes of public works have traditionally been used as a way for governments to directly boost employment, though this has often been opposed by some, but not all, conservatives. Jean-Baptiste Say, although generally associated with free market economics, advised that public works could be a solution to technological unemployment.[175] Some commentators, such as professor Mathew Forstater, have advised that public works and guaranteed jobs in the public sector may be the ideal solution to technological unemployment, as unlike welfare or guaranteed income schemes they provide people with the social recognition and meaningful engagement that comes with work.[176][177]

For less developed economies, public works may be an easier solution to administer than universal welfare programmes.[141] A partial exception is for spending on infrastructure, which has been recommended as a solution to technological unemployment even by economists previously associated with a neoliberal agenda, such as Larry Summers.[178]

Education

Improved access to quality education, including skills training for adults, is a solution that in principle at least is not opposed by any side of the political spectrum, and is welcomed even by those who are optimistic about the long-term effect of technology on employment. Improved education paid for by government tends to be especially popular with industry. However, several academics have argued that improved education alone will not be sufficient to solve technological unemployment, pointing to recent declines in the demand for many intermediate skills, and suggesting that not everyone is capable of becoming proficient in the most advanced skills.[145][146][147] Kim Taipale has said that "The era of bell curve distributions that supported a bulging social middle class is over... Education per se is not going to make up the difference."[179] In 2011, Paul Krugman likewise argued that better education would be an insufficient solution to technological unemployment.[180]

A 2026 study in the American Economic Review found that rural young women in Norway, who were displaced as hand-milkers by the widespread adoption of milking machines on Norwegian farms, moved to urban areas where they received more education and subsequently got higher-paying skilled jobs.[181]

Living with technological unemployment

Welfare payments

The use of various forms of subsidies has often been accepted as a solution to technological unemployment even by conservatives and by those who are optimistic about the long-term effect on jobs. Welfare programmes have historically tended to be more durable once established, compared with other solutions to unemployment such as directly creating jobs with public works. Although he was the first person to create a formal system describing compensation effects, John Ramsay McCulloch, like most other classical economists, advocated government aid for those suffering from technological unemployment, as they understood that market adjustment to new technology was not instantaneous and that those displaced by labour-saving technology would not always be able to immediately obtain alternative employment through their own efforts.[137]

Basic income

Several commentators have argued that traditional forms of welfare payment may be inadequate as a response to the future challenges posed by technological unemployment, and have suggested a basic income as an alternative.[182] People advocating some form of basic income as a solution to technological unemployment include Martin Ford,[183] Erik Brynjolfsson,[58] Robert Reich, Andrew Yang, Elon Musk, Zoltan Istvan, and Guy Standing. Reich has gone as far as to say the introduction of a basic income, perhaps implemented as a negative income tax, is "almost inevitable",[184] while Standing has said he considers that a basic income is becoming "politically essential".[185] Since late 2015, new basic income pilots have been announced in Finland, the Netherlands, and Canada. Further recent advocacy for basic income has arisen from a number of technology entrepreneurs, the most prominent being Sam Altman, co-founder of Loopt and CEO of OpenAI.[186][187]

Skepticism about basic income includes both right and left elements, and proposals for different forms of it have come from all segments of the spectrum. For example, while the best-known proposed forms (with taxation and distribution) are usually thought of as left-leaning ideas that right-leaning people try to defend against, other forms have been proposed even by libertarians, such as von Hayek and Friedman. In the United States, President Richard Nixon's Family Assistance Plan (FAP) of 1969, which had much in common with basic income, passed in the House but was defeated in the Senate.[188]

One objection to basic income is that it could be a disincentive to work, but evidence from older pilots in India, Africa, and Canada indicates that this does not happen and that a basic income encourages low-level entrepreneurship and more productive, collaborative work. Another objection is that funding it sustainably is a huge challenge. While new revenue-raising ideas have been proposed such as Martin Ford's wage recapture tax, how to fund a generous basic income remains a debated question, and skeptics have dismissed it as utopian. Even from a progressive viewpoint, there are concerns that a basic income set too low may not help the economically vulnerable, especially if financed largely from cuts to other forms of welfare.[185][189][190][191]

To better address both the funding concerns and concerns about government control, one alternative model is that the cost and control would be distributed across the private sector instead of the public sector. Companies across the economy would be required to employ humans, but the job descriptions would be left to private innovation, and individuals would have to compete to be hired and retained. This would be a for-profit sector analog of basic income, that is, a market-based form of basic income. It differs from a job guarantee in that the government is not the employer (rather, companies are) and there is no aspect of having employees who "cannot be fired", a problem that interferes with economic dynamism. The economic salvation in this model is not that every individual is guaranteed a job, but rather just that enough jobs exist that massive unemployment is avoided and employment is no longer the privilege of only the smartest or most highly trained 20% of the population. Another option for a market-based form of basic income has been proposed by the Center for Economic and Social Justice (CESJ) as part of "a Just Third Way" (a Third Way with greater justice) through widely distributed power and liberty. Called the Capital Homestead Act,[192] it is reminiscent of James S. Albus's Peoples' Capitalism[36][37] in that money creation and securities ownership are widely and directly distributed to individuals rather than flowing through, or being concentrated in, centralized or elite mechanisms.

Broadening the ownership of technological assets

Several solutions have been proposed which do not fall easily into the traditional left-right political spectrum. These include broadening the ownership of robots and other productive capital assets. Enlarging the ownership of technologies has been advocated by people including James S. Albus,[36][193] John Lanchester,[194] Richard B. Freeman,[190] and Noah Smith.[195] Jaron Lanier has proposed a somewhat similar solution: a mechanism where ordinary people receive "nano payments" for the big data they generate by their regular surfing and other aspects of their online presence.[196]

Structural changes towards a post-scarcity economy

The Zeitgeist Movement (TZM), The Venus Project (TVP), and various individuals and organizations propose structural changes towards a form of post-scarcity economy in which people are 'freed' from their automatable, monotonous jobs, instead of 'losing' their jobs. In the system proposed by TZM, all jobs are either automated, abolished for bringing no true value to society (such as ordinary advertising), rationalized by more efficient, sustainable and open processes and collaboration, or carried out based on altruism and social relevance, as opposed to compulsion or monetary gain.[197][198][199][200][201] The movement also speculates that the free time made available to people will permit a renaissance of creativity, invention, community and social capital as well as reducing stress.[197]

Other approaches

The threat of technological unemployment has occasionally been used by free market economists as a justification for supply side reforms, to make it easier for employers to hire and fire workers. Conversely, it has also been used as a reason to justify an increase in employee protection.[135][202]

Economists including Larry Summers have advised that a package of measures may be needed. He advised vigorous cooperative efforts to address the "myriad devices" – such as tax havens, bank secrecy, money laundering, and regulatory arbitrage – which enable the holders of great wealth to avoid paying taxes, and to make it more difficult to accumulate great fortunes without requiring "great social contributions" in return. Summers suggested more vigorous enforcement of anti-monopoly laws; reductions in "excessive" protection for intellectual property; greater encouragement of profit-sharing schemes that may benefit workers and give them a stake in wealth accumulation; strengthening of collective bargaining arrangements; improvements in corporate governance; strengthening of financial regulation to eliminate subsidies to financial activity; easing of land-use restrictions that may cause estates to keep rising in value; better training for young people and retraining for displaced workers; and increased public and private investment in infrastructure development, such as energy production and transportation.[64][65][66][203]

Michael Spence has advised that responding to the future impact of technology will require a detailed understanding of the global forces and flows technology has set in motion. Adapting to them "will require shifts in mindsets, policies, investments (especially in human capital), and quite possibly models of employment and distribution".[note 6][204]

See also

- Autonomous car

- Disruptive innovation

- Emerging technologies

- Fourth Industrial Revolution

- Futures studies

- Fully Automated Luxury Communism

- Historical materialism

- Humans Need Not Apply

- Industrial society

- Lucas Plan

- Lump of labor fallacy

- Parable of the broken window

- Player Piano

- Post-work society

- Robot tax

- Salary inversion

- Technological revolution

- Technological singularity

- Technological transitions

- Technophobia

- The End of Work

- The Future of Work and Death

- The Triple Revolution

- Working time

Notes

- ↑ Smith did not directly address the problem of technological unemployment, but the Dean had, saying in 1757 that in the long term, the introduction of machinery would allow more employment than would have been possible without them.

- ↑ Typically the introduction of machinery would both increase output and lower cost per unit.

- ↑ In the 1930s, this study was Unemployment and technological change (Report no. G-70, 1940) by Corrington Calhoun Gill of the 'National Research Project on Reemployment Opportunities and Recent changes in Industrial Techniques'. Some earlier Federal reports took a pessimistic view of technological unemployment, e.g. Memorandum on Technological Unemployment (1933) by Ewan Clague of the Bureau of Labor Statistics. Some authorities – e.g. Udo Sautter in Chpt 5 of Three Cheers for the Unemployed: Government and Unemployment Before the New Deal (Cambridge University Press, 1991) – say that in the early 1930s there was near consensus among US experts that technological unemployment was a major problem. Others, though, like Bruce Bartlett in Is Industrial Innovation Destroying Jobs (Cato Journal 1984), argue that most economists remained optimistic even during the 1930s. In the 1960s episode, the major Federal study that marked the end of the period of intense debate was Technology and the American economy (1966) by the 'National Commission on Technology, Automation, and Economic Progress' established by president Lyndon Johnson in 1964.

- ↑ Other recent statements by Summers include warnings on the "devastating consequences" for those who perform routine tasks arising from robots, 3-D printing, artificial intelligence, and similar technologies. In his view, "already there are more American men on disability insurance than doing production work in manufacturing. And the trends are all in the wrong direction, particularly for the less skilled, as the capacity of capital embodying artificial intelligence to replace white-collar as well as blue-collar work will increase rapidly in the years ahead." Summers has also said that "[T]here are many reasons to think the software revolution will be even more profound than the agricultural revolution. This time around, change will come faster and affect a much larger share of the economy. [...] [T]here are more sectors losing jobs than creating jobs. And the general-purpose aspect of software technology means that even the industries and jobs that it creates are not forever. [...] If current trends continue, it could well be that a generation from now a quarter of middle-aged men will be out of work at any given moment."

- ↑ Labour-displacing technologies can be classified under the headings of mechanization, automation, and process improvement. The first two fundamentally involve transferring tasks from humans to machines. The third often involves the elimination of tasks altogether. The common theme of all three is that tasks are removed from the workforce, decreasing employment. In practice, the categories often overlap: a process improvement can include an automating or mechanizing achievement. The line between mechanization and automation is also subjective, as sometimes mechanization can involve sufficient control to be viewed as part of automation.

- ↑ Spence also wrote that "Now comes a ... powerful, wave of digital technology that is replacing labor in increasingly complex tasks. This process of labor substitution and disintermediation has been underway for some time in service sectors – think of ATMs, online banking, enterprise resource planning, customer relationship management, mobile payment systems, and much more. This revolution is spreading to the production of goods, where robots and 3D printing are displacing labor." In his view, the vast majority of the cost of digital technologies comes at the start, in the design of hardware (e.g. sensors) and, more important, in creating the software that enables machines to carry out various tasks. "Once this is achieved, the marginal cost of the hardware is relatively low (and declines as scale rises), and the marginal cost of replicating the software is essentially zero. With a huge potential global market to amortize the upfront fixed costs of design and testing, the incentives to invest [in digital technologies] are compelling." Spence believes that, unlike prior digital technologies, which drove firms to deploy underutilized pools of valuable labor around the world, the motivating force in the current wave of digital technologies "is cost reduction via the replacement of labor." For example, as the cost of 3D printing technology declines, it is "easy to imagine" that production may become "extremely" local and customized. Moreover, production may occur in response to actual demand, not anticipated or forecast demand. "Meanwhile, the impact of robotics ... is not confined to production. Though self-driving cars and drones are the most attention-getting examples, the impact on logistics is no less transformative. Computers and robotic cranes that schedule and move containers around and load ships now control the Port of Singapore, one of the most efficient in the world." 
Spence believes that labor, no matter how inexpensive, will become a less important asset for growth and employment expansion, with labor-intensive, process-oriented manufacturing becoming less effective, and that re-localization will appear globally. In his view, production will not disappear, but it will be less labor-intensive, and all countries will eventually need to rebuild their growth models around digital technologies and the human capital supporting their deployment and expansion.

References

Citations

- ↑ Peters, Michael A. (2020). "Beyond technological unemployment: the future of work". Educational Philosophy and Theory 52 (5): 485–491. doi:10.1080/00131857.2019.1608625.

- ↑ Peters, Michael A. (2017). "Technological unemployment: Educating for the fourth industrial revolution". Educational Philosophy and Theory 49 (1): 1–6. doi:10.1080/00131857.2016.1177412.

- ↑ Lima, Yuri; Barbosa, Carlos Eduardo; dos Santos, Herbert Salazar; de Souza, Jano Moreira (2021). "Understanding Technological Unemployment: A Review of Causes, Consequences, and Solutions". Societies 11 (2): 50. doi:10.3390/soc11020050.

- ↑ Chuang, Szufang; Graham, Carroll Marion (3 September 2018). "Embracing the sobering reality of technological influences on jobs, employment and human resource development: A systematic literature review" (in en). European Journal of Training and Development 42 (7/8): 400–416. doi:10.1108/EJTD-03-2018-0030. ISSN 2046-9012.

- ↑ Keynes, John Maynard (1930). Economic Possibilities for our Grandchildren. E McGaughey, 'Will Robots Automate Your Job Away? Full Employment, Basic Income, and Economic Democracy' (2022) 51(3) Industrial Law Journal 511, part 2(2).

- ↑ Varanasi, Lakshmi. "Will AI replace human jobs and make universal basic income necessary? Here's what AI leaders have said about UBI." (in en-US). https://www.businessinsider.com/universal-basic-income-ai.

- ↑ Marr, Bernard. "Will AI Make Universal Basic Income Inevitable?" (in en). https://www.forbes.com/sites/bernardmarr/2024/12/12/will-ai-make-universal-basic-income-inevitable/.

- ↑ "Transcript: Rethinking the AI boom, with Daron Acemoğlu". Financial Times. 2 September 2024. https://www.ft.com/content/780559a8-f699-4c1b-878e-f9899c8e0d8b.

- ↑ "Why AI will not lead to technological unemployment" (in en). 15 August 2024. https://www.weforum.org/stories/2024/08/why-ai-will-not-lead-to-a-world-without-work/.

- ↑ "The Changing Nature of Work". http://www.worldbank.org/en/publication/wdr2019.

- ↑ Bhorat, Ziyaad (2022). "Automation, Slavery, and Work in Aristotle's Politics Book I". Polis: The Journal for Ancient Greek and Roman Political Thought 39 (2): 279–302. doi:10.1163/20512996-12340366.

- ↑ Devecka, Martin (2013). "Did the Greeks Believe in Their Robots?". The Cambridge Classical Journal 59: 52–69. doi:10.1017/S1750270513000079.

- ↑ Woirol 1996, p. 17

- ↑ 14.0 14.1 14.2 "Relief". The San Bernardino County Sun (California). 3 March 1940. https://www.newspapers.com/newspage/48947293/.

- ↑ Forbes 1932, p. 2

- ↑ Forbes 1932, pp. 24–30

- ↑ Campa, Riccardo (Feb 2014). "Technological Growth and Unemployment: A Global Scenario Analysis". Journal of Evolution and Technology. ISSN 1541-0099. http://jetpress.org/v24/campa2.htm.

- ↑ Forbes 1993, chapter 2

- ↑ Forbes 1932, passim, see esp. pp. 49–53

- ↑ See book eight, chapt XVIII of Suetonius's The Twelve Caesars.

- ↑ Forbes 1932, pp. 147–150

- ↑ Roberto Sabatino Lopez (1976). "Chpt. 2,3". The Commercial Revolution of the Middle Ages, 950-1350. Cambridge University Press. ISBN 978-0521290463.

- ↑ 23.0 23.1 Schumpeter 1987, Chpt 6

- ↑ On occasion these executions were carried out with methods normally reserved for only the worst criminals. For example, on a single occasion in the south of France, 58 people were broken on the Catherine wheel for selling forbidden goods. See Chpt 1 of The Worldly Philosophers.

- ↑ E.g. by Sir John Habakkuk in American and British Technology in the Nineteenth Century (1962), Cambridge University Press. Habakkuk also went on to say that, owing to labour shortages, U.S. workers offered far less resistance to the introduction of technology than their British counterparts, leading to more uptake of innovation and hence to the more efficient American system of manufacturing.

- ↑ 26.0 26.1 26.2 26.3 26.4 26.5 26.6 "The future of employment: how susceptible are jobs to computerisation". Oxford University, Oxford Martin School. 17 September 2013. http://www.oxfordmartin.ox.ac.uk/downloads/academic/The_Future_of_Employment.pdf.

- ↑ Schumpeter 1987, Chpt 4

- ↑ Sowell, T. (2006), "Chapter 5: Sismondi: A Neglected Pioneer", On Classical Economics

- ↑ While initially of the view that innovation benefited the whole population, Ricardo was persuaded by Malthus that technology could both push down wages for the working class and cause long-term unemployment. He famously expressed these views in a chapter called "On Machinery", added to the third and final (1821) edition of On the Principles of Political Economy and Taxation.

- ↑ Bartlett, Bruce (18 January 2014). "Is industrial innovation destroying jobs?". Cato Journal. https://www.academia.edu/3465367. Retrieved 14 July 2015.

- ↑ Woirol 1996, pp. 2, 20–22

- ↑ Woirol 1996, pp. 2, 8–12

- ↑ 33.0 33.1 Woirol 1996, pp. 8–12

- ↑ Samuelson, Paul (1989). "Ricardo Was Right!". The Scandinavian Journal of Economics 91 (1): 47–62. doi:10.2307/3440162. https://ideas.repec.org/a/bla/scandj/v91y1989i1p47-62.html.

- ↑ Woirol 1996, pp. 143–144

- ↑ 36.0 36.1 36.2 James S. Albus, Peoples' Capitalism: The Economics of the Robot Revolution (free download)

- ↑ 37.0 37.1 James S. Albus, People's Capitalism main website

- ↑ Noble 1984

- ↑ Noble 1993.

- ↑ Rifkin 1995

- ↑ The Global Trap defines a possible "20/80 society" that may emerge in the 21st century. In this potential society, 20% of the working age population will be enough to keep the world economy going. The authors describe how at a conference at the invitation of Mikhail Gorbachev with 500 leading politicians, business leaders and academics from all continents from 27 September – 1 October 1995 at the Fairmont Hotel in San Francisco, the term "one-fifth-society" arose. The authors describe an increase in productivity caused by the decrease in the amount of work, so this could be done by one-fifth of the global labor force and leave four-fifths of the working age people out of work.

- ↑ Woirol 1996, p. 3

- ↑ 43.0 43.1 MacCarthy, Mark (30 September 2014). "Time to kill the tech job-killing myth". The Hill. https://thehill.com/blogs/congress-blog/technology/219224-time-to-kill-the-tech-job-killing-myth/.

- ↑ 44.0 44.1 44.2 44.3 44.4 44.5 Vivarelli, Marco (January 2012). "Innovation, Employment and Skills in Advanced and Developing Countries: A Survey of the Literature". Institute for the Study of Labor. http://ftp.iza.org/dp6291.pdf.

- ↑ 45.0 45.1 Vivarelli, Marco (February 2007). "Innovation and Employment: A Survey". Institute for the Study of Labor. http://ftp.iza.org/dp2621.pdf.

- ↑ Brain 2003.

- ↑ Ford 2009.

- ↑ Lohr, Steve (23 October 2011). "More Jobs Predicted for Machines, Not People". The New York Times. https://www.nytimes.com/2011/10/24/technology/economists-see-more-jobs-for-machines-not-people.html.

- ↑ Andrew Keen, Keen On How The Internet Is Making Us Both Richer and More Unequal (TCTV), interview with Andrew McAfee and Erik Brynjolfsson, TechCrunch, 2011.11.15

- ↑ Krasny, Jill (25 November 2011). "MIT Professors: The 99% Should Shake Their Fists At The Tech Boom". Business Insider. http://www.businessinsider.com/mit-professors-the-99-should-shake-their-fists-at-the-tech-boom-2011-11.

- ↑ Timberg, Scott (18 December 2011). "The Clerk, RIP". Salon.com. http://www.salon.com/2011/12/18/the_clerk_rip/.

- ↑ Leonard, Andrew (17 January 2014). "Robots are stealing your job: How technology threatens to wipe out the middle class". Salon.com. http://www.salon.com/2014/01/17/robots_are_stealing_your_job_how_technology_threatens_to_wipe_out_the_middle_class/.

- ↑ Rotman, David (June 2015). "How Technology Is Destroying Jobs". MIT Technology Review. http://www.technologyreview.com/featuredstory/515926/how-technology-is-destroying-jobs/.

- ↑ "The FT's Summer books 2015" (registration required). Financial Times. 26 June 2015. https://www.ft.com/content/bd4a767c-1b99-11e5-8201-cbdb03d71480.

- ↑ 55.0 55.1 55.2 Waters, Richard (3 March 2014). "Technology: Rise of the replicants" (registration required). Financial Times. https://www.ft.com/content/dc895d54-a2bf-11e3-9685-00144feab7de.

- ↑ Thompson, Derek (23 January 2014). "What Jobs Will the Robots Take?". The Atlantic. https://www.theatlantic.com/business/archive/2014/01/what-jobs-will-the-robots-take/283239/.

- ↑ Special Report (29 March 2013). "A mighty contest: Job destruction by robots could outweigh creation". The Economist. https://www.economist.com/news/special-report/21599525-job-destruction-robots-could-outweigh-creation-mighty-contest.

- ↑ 58.0 58.1 Cardiff Garcia, Erik Brynjolfsson and Mariana Mazzucato (3 July 2014). Robots are still in our control (registration required). The Financial Times. Retrieved 14 July 2015.

- ↑ Ignatieff, Michael (10 February 2014). "We need a new Bismarck to tame the machines". Financial Times. https://www.ft.com/content/1c4cb838-8cfd-11e3-ad57-00144feab7de.

- ↑ Lord Skidelsky (19 February 2013). "Rise of the robots: what will the future of work look like?". The Guardian. https://www.theguardian.com/business/2013/feb/19/rise-of-robots-future-of-work.

- ↑ Bria, Francesca (February 2016). "The robot economy may already have arrived". openDemocracy. https://www.opendemocracy.net/can-europe-make-it/francesca-bria/robot-economy-full-automation-work-future.

- ↑ Srnicek, Nick (March 2016). "4 Reasons Why Technological Unemployment Might Really Be Different This Time". novara wire. http://wire.novaramedia.com/2015/03/4-reasons-why-technological-unemployment-might-really-be-different-this-time/.

- ↑ Andrew McAfee and Erik Brynjolfsson (2014). "passim, see esp Chpt. 9". The Second Machine Age: Work, Progress, and Prosperity in a Time of Brilliant Technologies. W. W. Norton & Company. ISBN 978-0393239355.

- ↑ 64.0 64.1 64.2 "Lawrence H. Summers on the Economic Challenge of the Future: Jobs". 7 July 2014. https://online.wsj.com/news/article_email/lawrence-h-summers-on-the-economic-challenge-of-the-future-jobs-1404762501-lMyQjAxMTA0MDIwMjEyNDIyWj.

- ↑ 65.0 65.1 65.2 Miller, Claire Cain (15 December 2014). "As Robots Grow Smarter, American Workers Struggle to Keep Up". The New York Times. https://www.nytimes.com/2014/12/16/upshot/as-robots-grow-smarter-american-workers-struggle-to-keep-up.html.

- ↑ 66.0 66.1 Larry Summers, The Inequality Puzzle, Democracy: A Journal of Ideas, Issue #32, Spring 2014

- ↑ Winick, Erin (12 December 2017). "Lawyer-bots are shaking up jobs". MIT Technology Review. https://www.technologyreview.com/s/609556/lawyer-bots-are-shaking-up-jobs/.

- ↑ "Forum Debate: Rethinking Technology and Employment" (centrality of work, 1:02–1:04). World Economic Forum. Jan 2014. http://www.weforum.org/node/138333.

- ↑ Gillian Tett (21 January 2015). technology would continue to displace jobs over the next five years (registration required). The Financial Times. Retrieved 14 July 2015.

- ↑ Haldane, Andy (November 2015). "Labour's Share". Bank of England. http://www.bankofengland.co.uk/publications/Pages/speeches/2015/864.aspx.

- ↑ Visco, Ignazio (12 November 2015). Ignazio Visco: "For the times they are a-changin'...". https://www.bis.org/review/r151112a.htm.