Federated learning

From HandWiki - Reading time: 22 min

Federated learning (also known as collaborative learning) is a machine learning technique in which multiple entities (often called clients) collaboratively train a model while keeping their data decentralized,[1] rather than storing it centrally. A defining characteristic of federated learning is data heterogeneity. Because client data is decentralized, the data samples held by each client may not be independently and identically distributed.

Federated learning is generally concerned with and motivated by issues such as data privacy, data minimization, and data access rights. Its applications involve a variety of research areas including defence, telecommunications, the Internet of things, and pharmaceuticals.

Definition

Federated learning aims to train a machine learning model, for instance a deep neural network, on multiple local datasets held by local nodes without explicitly exchanging data samples. The general principle consists of training local models on local data samples and exchanging parameters (e.g. the weights and biases of a deep neural network) between these local nodes at some frequency to generate a global model shared by all nodes.

The main difference between federated learning and distributed learning lies in the assumptions made about the properties of the local datasets,[2] as distributed learning originally aims at parallelizing computing power, whereas federated learning originally aims at training on heterogeneous datasets. While distributed learning also aims at training a single model on multiple servers, a common underlying assumption is that the local datasets are independent and identically distributed (i.i.d.) and roughly the same size. Neither of these assumptions is made for federated learning; instead, the datasets are typically heterogeneous and their sizes may span several orders of magnitude. Moreover, the clients involved in federated learning may be unreliable: they are more prone to failures or dropouts, since they commonly rely on less powerful communication media (e.g. Wi-Fi) and battery-powered systems (e.g. smartphones and IoT devices). In distributed learning, by contrast, nodes are typically datacenters that have powerful computational capabilities and are connected to one another with fast networks.[3]

Mathematical formulation

The objective function for federated learning is as follows:

$$f(x_1, \dots, x_K) = \frac{1}{K} \sum_{i=1}^{K} f_i(x_i)$$

where $K$ is the number of nodes, $x_i$ are the weights of the model as viewed by node $i$, and $f_i$ is node $i$'s local objective function, which describes how well the model weights $x_i$ fit node $i$'s local dataset.

The goal of federated learning is to train a common model on all of the nodes' local datasets, in other words:

- Optimizing the objective function $f(x_1, \dots, x_K)$.

- Achieving consensus on $x_i$. In other words, $x_1, \dots, x_K$ converge to some common $x$ at the end of the training process.
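To make the two goals concrete, here is a minimal Python sketch. It assumes, purely for illustration, that each local objective $f_i$ is the mean squared error of a linear model on node $i$'s data; the formulation itself does not fix the form of $f_i$. It evaluates the global objective as the unweighted average of the local objectives and measures how far the nodes are from consensus. The function names are hypothetical.

```python
import numpy as np


def local_objective(x_i: np.ndarray, features: np.ndarray, labels: np.ndarray) -> float:
    """f_i(x_i): mean squared error of a linear model on node i's data (assumed form)."""
    residual = features @ x_i - labels
    return float(np.mean(residual ** 2))


def global_objective(weights, datasets) -> float:
    """f(x_1, ..., x_K) = (1/K) * sum_i f_i(x_i)."""
    K = len(weights)
    return sum(local_objective(x, X, y) for x, (X, y) in zip(weights, datasets)) / K


def consensus_gap(weights) -> float:
    """Largest distance between any node's weights and the mean weights (0 means consensus)."""
    mean = np.mean(weights, axis=0)
    return max(float(np.linalg.norm(x - mean)) for x in weights)


rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
datasets = []
for _ in range(3):
    X = rng.normal(size=(50, 2))
    datasets.append((X, X @ true_w))  # noiseless labels, for illustration only

shared = [true_w.copy() for _ in range(3)]              # all nodes agree on the same weights
diverged = [true_w + rng.normal(size=2) for _ in range(3)]

print(global_objective(shared, datasets), consensus_gap(shared))  # 0.0 0.0
print(global_objective(diverged, datasets), consensus_gap(diverged))
```

Training has to drive both quantities down: a low average loss with a large consensus gap means the nodes have not agreed on a common model.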

Centralized federated learning

In the centralized federated learning setting, a central server is used to orchestrate the different steps of the algorithm and coordinate all the participating nodes during the learning process. The server is responsible for selecting nodes at the beginning of the training process and for aggregating the received model updates. Since all the selected nodes have to send updates to a single entity, the server may become a bottleneck of the system.[3]

Decentralized federated learning

In the decentralized federated learning setting, the nodes coordinate among themselves to obtain the global model. This setup avoids a single point of failure, since model updates are exchanged only between interconnected nodes without orchestration by a central server. Nevertheless, the specific network topology may affect the performance of the learning process.[3] See blockchain-based federated learning[4] and the references therein.

Personalized federated learning

In many application domains, not all clients are the same. Clients may share the same model architecture but each have a different data distribution, a setting known as personalized federated learning (PFL); or they may even have different model architectures, as with mobile phones and IoT devices, a setting known as heterogeneous federated learning.[5] The key challenge in training a PFL model is to keep each client's model adapted to its own data while still benefiting from generalization across clients.[6]

Heterogeneous federated learning

Most of the existing federated learning strategies assume that local models share the same global model architecture. Recently, a new federated learning framework named HeteroFL was developed to address heterogeneous clients equipped with very different computation and communication capabilities.[7] The HeteroFL technique can enable the training of heterogeneous local models with dynamically varying computation and non-IID data complexities while still producing a single accurate global inference model.[7][8][9]

Main features

Iterative learning

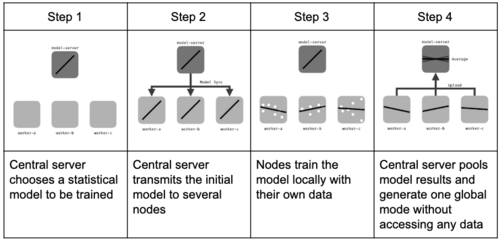

The iterative process of federated learning is composed of a series of fundamental client-server interactions, each of which is known as a federated learning round. Each round of this process consists of transmitting the current global model state to participating nodes, training local models on these nodes to produce a set of potential model updates at each node, and then aggregating and processing these local updates into a single global update and applying it to the global model.[3]

In the methodology below, a central server is used for aggregation, while local nodes perform local training following the central server's instructions. However, other strategies can reach similar results without a central server, in a peer-to-peer approach, using gossip[10] or consensus methodologies.[11]

Assuming a federated round composed of one iteration of the learning process, the learning procedure can be summarized as follows:[12]

- Initialization: according to the server inputs, a machine learning model (e.g., linear regression, neural network, boosting) is chosen to be trained on local nodes and initialized. Then, nodes are activated and wait for the central server to give the calculation tasks.

- Client selection: a fraction of local nodes is selected to start training on local data. The selected nodes acquire the current global model while the others wait for the next federated round.

- Configuration: the central server orders selected nodes to undergo training of the model on their local data in a pre-specified fashion (e.g., for some mini-batch updates of gradient descent).

- Reporting: each selected node sends its local model to the server for aggregation. The central server aggregates the received models and sends the resulting model update back to the nodes. It also handles failures such as disconnected nodes or lost model updates. The next federated round then starts, returning to the client selection phase.

- Termination: once a pre-defined termination criterion is met (e.g., a maximum number of iterations is reached or the model accuracy is greater than a threshold) the central server aggregates the updates and finalizes the global model.
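The round structure above can be simulated in a few lines. This is a minimal sketch, not the procedure of any particular framework: the "model" is a single scalar fitted with a squared loss, local training is a few gradient steps, node dropouts are simulated with a hypothetical `drop_prob`, and the server averages only the updates it actually receives, weighted by local sample counts. All function and parameter names are illustrative.

```python
import random


def local_train(w: float, data: list[float], steps: int, lr: float) -> float:
    """Configuration step: a few gradient steps on the local loss mean((w - d)^2)."""
    for _ in range(steps):
        grad = sum(2.0 * (w - d) for d in data) / len(data)
        w -= lr * grad
    return w


def run_federated(clients, fraction=0.5, rounds=200, tol=1e-6,
                  local_steps=5, lr=0.1, drop_prob=0.2, seed=0):
    rng = random.Random(seed)
    w_global = 0.0                                     # Initialization
    for r in range(rounds):
        # Client selection: sample a fraction of the nodes for this round.
        k = max(1, round(fraction * len(clients)))
        selected = rng.sample(range(len(clients)), k)
        # Configuration and local training; some nodes drop out before reporting.
        reports = []
        for c in selected:
            if rng.random() < drop_prob:
                continue                               # lost update: simply skipped
            w_local = local_train(w_global, clients[c], local_steps, lr)
            reports.append((w_local, len(clients[c])))
        if not reports:
            continue                                   # nothing received; try next round
        # Reporting: sample-size-weighted average of the received models.
        total = sum(n for _, n in reports)
        w_new = sum(w * n for w, n in reports) / total
        # Termination: stop once the global model has stopped changing.
        if abs(w_new - w_global) < tol:
            return w_new, r + 1
        w_global = w_new
    return w_global, rounds                            # maximum number of rounds reached


clients = [[1.0, 5.0], [2.0, 4.0, 3.0], [3.0], [0.0, 6.0]]  # every node's data has mean 3.0
w, used_rounds = run_federated(clients)
print(round(w, 4), used_rounds)
```

Because every node's data has the same mean in this toy setup, the global model settles near 3.0; with heterogeneous nodes and partial participation, the tolerance-based stopping rule may never trigger, which is why the maximum number of rounds is kept as a fallback.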

The procedure considered before assumes synchronized model updates. Recent federated learning developments introduced novel techniques to tackle asynchronicity during the training process, or training with dynamically varying models.[7] Compared to synchronous approaches where local models are exchanged once the computations have been performed for all layers of the neural network, asynchronous ones leverage the properties of neural networks to exchange model updates as soon as the computations of a certain layer are available. These techniques are also commonly referred to as split learning[13][14] and they can be applied both at training and inference time regardless of centralized or decentralized federated learning settings.[3][7]

Non-IID data

In most cases, the assumption of independent and identically distributed samples across local nodes does not hold for federated learning setups. Under this setting, the performance of the training process may vary significantly according to the unbalanced local data samples as well as the particular probability distribution of the training examples (i.e., features and labels) stored at the local nodes. To further investigate the effects of non-IID data, the following description considers the main categories presented in the 2019 preprint by Peter Kairouz et al.[3]

The description of non-IID data relies on the analysis of the joint probability between features and labels for each node. This allows each contribution to be decoupled according to the specific distribution available at the local nodes. The main categories of non-IID data can be summarized as follows:[3]

- Covariate shift: local nodes may store examples that have different statistical distributions compared to other nodes. An example occurs in handwriting recognition datasets, where people typically write the same digits/letters with different stroke widths or slants.[3]

- Prior probability shift: local nodes may store labels that have different statistical distributions compared to other nodes. This can happen if datasets are regional and/or demographically partitioned. For example, datasets containing images of animals vary significantly from country to country.[3]

- Concept drift (same label, different features): local nodes may share the same labels, but some of them correspond to different features at different local nodes. For example, images that depict a particular object can vary according to the weather conditions in which they were captured.[3]

- Concept shift (same features, different labels): local nodes may share the same features, but some of them correspond to different labels at different local nodes. For example, in natural language processing, the same text may be labeled with different sentiments at different nodes.[3]

- Unbalanced: the amount of data available at the local nodes may vary significantly in size.[3][7]

The loss in accuracy due to non-IID data can be bounded by using more sophisticated data normalization schemes than batch normalization.[15]
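To experiment with the label-based categories above (prior probability shift combined with unbalanced node sizes), a commonly used simulation technique, not one described in the cited preprint, is to split a centralized labelled dataset across nodes using per-class Dirichlet proportions. The sketch below assumes NumPy; `alpha` is a hypothetical concentration parameter, where smaller values give more skewed, less balanced nodes.

```python
import numpy as np


def dirichlet_label_split(labels: np.ndarray, num_nodes: int, alpha: float, seed: int = 0):
    """Return one index array per node, with label-skewed and unbalanced shares."""
    rng = np.random.default_rng(seed)
    node_indices = [[] for _ in range(num_nodes)]
    for cls in np.unique(labels):
        idx = np.flatnonzero(labels == cls)
        rng.shuffle(idx)
        # Fraction of this class that each node receives.
        proportions = rng.dirichlet(np.full(num_nodes, alpha))
        cut_points = (np.cumsum(proportions)[:-1] * len(idx)).astype(int)
        for node, part in enumerate(np.split(idx, cut_points)):
            node_indices[node].extend(part.tolist())
    return [np.array(sorted(ix), dtype=int) for ix in node_indices]


labels = np.repeat(np.arange(4), 250)            # 1,000 samples, 4 balanced classes
parts = dirichlet_label_split(labels, num_nodes=5, alpha=0.3)
for node, ix in enumerate(parts):
    counts = np.bincount(labels[ix], minlength=4)
    print(f"node {node}: {len(ix):4d} samples, per-class counts {counts}")
```

Every sample is assigned to exactly one node, so the union of the nodes still equals the original i.i.d. dataset, which makes it easy to compare federated training against a centralized baseline.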

Algorithmic hyper-parameters

Network topology

The way the statistical local outputs are pooled and the way the nodes communicate with each other can differ from the centralized model explained in the previous section. This leads to a variety of federated learning approaches: for instance, approaches with no central orchestrating server, or with stochastic communication.[16]

In particular, orchestrator-less distributed networks are one important variation. In this case, there is no central server dispatching queries to local nodes and aggregating local models. Each local node sends its outputs to several randomly selected others, which aggregate their results locally. This limits the number of transactions, thereby sometimes reducing training time and computing cost.[17]
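One way to make "send to several random peers and aggregate locally" converge to the true average of the local models is push-sum gossip, a swapped-in technique named here for illustration rather than the method of the cited work. Each node keeps a running sum and a weight, splits both equally between itself and `peers_per_node` randomly chosen peers (a hypothetical parameter), and estimates the average as sum divided by weight. The total sum and weight are preserved, so every node's estimate approaches the network-wide mean.

```python
import numpy as np


def push_sum(values: np.ndarray, rounds: int, peers_per_node: int = 2, seed: int = 0) -> np.ndarray:
    """Push-sum gossip over nodes with no central server; returns each node's estimate."""
    rng = np.random.default_rng(seed)
    n = values.shape[0]
    s = values.astype(float).copy()      # running sums, one row per node
    w = np.ones(n)                       # running weights
    for _ in range(rounds):
        new_s = np.zeros_like(s)
        new_w = np.zeros_like(w)
        for i in range(n):
            others = np.delete(np.arange(n), i)
            peers = rng.choice(others, size=peers_per_node, replace=False)
            targets = np.concatenate(([i], peers))    # keep one share for itself
            share = 1.0 / len(targets)
            for j in targets:
                new_s[j] += share * s[i]
                new_w[j] += share * w[i]
        s, w = new_s, new_w
    return s / w[:, None]                # each node's local estimate of the average


rng = np.random.default_rng(1)
local_models = rng.normal(size=(8, 3))   # 8 nodes, 3 parameters each
estimates = push_sum(local_models, rounds=100)
print(np.abs(estimates - local_models.mean(axis=0)).max())
```

Naively replacing a node's parameters with the average of what it holds and what it received would also reach agreement, but generally not on the true mean, because that rule does not conserve the total "mass" the way push-sum does.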

Federated learning parameters

Once the topology of the node network is chosen, one can control different parameters of the federated learning process (in addition to the machine learning model's own hyperparameters) to optimize learning:

- Number of federated learning rounds: $T$

- Total number of nodes used in the process: $K$

- Fraction of nodes used at each iteration: $C$

- Local batch size used at each learning iteration: $B$

Other model-dependent parameters can also be tuned, such as:

- Number of iterations for local training before pooling: $N$

- Local learning rate: $\eta$

Those parameters have to be optimized depending on the constraints of the machine learning application (e.g., available computing power, available memory, bandwidth). For instance, stochastically choosing a limited fraction of nodes for each iteration diminishes computing cost and may prevent overfitting,[18] in the same way that stochastic gradient descent can reduce overfitting.
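The parameters above can be collected into a small configuration object. This is a hypothetical sketch (the class and field names are not from any framework); the comments give the symbols commonly used for each parameter. It also shows the stochastic choice of a limited fraction of nodes for one round.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class FederatedConfig:
    rounds: int             # number of federated learning rounds (often T)
    num_nodes: int          # total number of nodes (often K)
    fraction: float         # fraction of nodes selected per round (often C)
    batch_size: int         # local mini-batch size (often B)
    local_iterations: int   # local iterations before pooling (often N)
    learning_rate: float    # local learning rate (often eta)

    def __post_init__(self):
        if not 0.0 < self.fraction <= 1.0:
            raise ValueError("fraction must be in (0, 1]")
        if min(self.rounds, self.num_nodes, self.batch_size, self.local_iterations) < 1:
            raise ValueError("counts must be positive")
        if self.learning_rate <= 0:
            raise ValueError("learning_rate must be positive")

    @property
    def nodes_per_round(self) -> int:
        # At least one node must participate in every round.
        return max(1, int(round(self.fraction * self.num_nodes)))

    def select_nodes(self, rng: random.Random) -> list[int]:
        """Stochastically choose the participating nodes for one round."""
        return sorted(rng.sample(range(self.num_nodes), self.nodes_per_round))


cfg = FederatedConfig(rounds=100, num_nodes=50, fraction=0.1,
                      batch_size=32, local_iterations=5, learning_rate=0.01)
print(cfg.nodes_per_round)                  # 5
print(cfg.select_nodes(random.Random(0)))   # 5 distinct node ids from 0..49
```

Validating the values once, when the configuration is created, keeps invalid settings (such as a zero fraction of nodes) from surfacing only after several rounds of training.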

Limitations

Technical

Federated learning requires frequent communication between nodes during the learning process. Thus, it requires not only enough local computing power and memory, but also high-bandwidth connections to exchange the parameters of the machine learning model. However, the technology also avoids transferring the raw data, which can require significant resources before centralized machine learning can start. Nevertheless, the devices typically employed in federated learning, such as IoT devices or smartphones generally connected to Wi-Fi networks, are communication-constrained. Thus, even though models are commonly less expensive to transmit than raw data, federated learning mechanisms in their general form may not be suitable for such devices.[3]

Federated learning raises several statistical challenges:

- Heterogeneity between the different local datasets: each node may have some bias with respect to the general population, and the size of the datasets may vary significantly;[7]

- Temporal heterogeneity: each local dataset's distribution may vary over time;

- Interoperability of each node's dataset is a prerequisite;

- Each node's dataset may require regular curation;

- Hiding training data might allow attackers to inject backdoors into the global model;[19]

- Lack of access to global training data makes it harder to identify unwanted biases entering the training (e.g. age, gender, sexual orientation);

- Partial or total loss of model updates due to node failures may affect the global model;[3]

- Lack of annotations or labels on the client side;[20]

- Heterogeneity between processing platforms.[21]

Governance

Governance considerations may also limit the applicability of federated learning, in particular when applied in cross-organisational settings. Most federated learning frameworks rely on a central coordinating server, which introduces questions regarding infrastructure control, model ownership, and decision-making authority among collaborators.[22][23] In multi-party deployments, especially those spanning competitive or collaborative data markets, the absence of agreed-upon governance structures can create uncertainty about responsibilities for validating model updates, auditing system behaviour, and managing security incidents. These organisational issues may deter participants from contributing to shared models and can complicate regulatory compliance, thereby constraining adoption.

A further governance-related issue concerns the distribution of value among participants as multi-party federated learning systems scale. As more clients contribute to training, model accuracy may approach a performance threshold,[22] after which additional contributions yield diminishing marginal utility. This dynamic can create tensions within collaborative arrangements, as early participants may perceive later contributions as offering reduced value while still benefiting from the shared model. Such asymmetries may affect incentives to join or remain in a consortium and complicate the design of fair participation, ownership, or reward structures. Establishing transparent rules for contribution assessment and benefit allocation is therefore an important organisational challenge and represents a direction for future research.

Algorithms

A number of different algorithms for federated optimization have been proposed.

Federated stochastic gradient descent (FedSGD)

Stochastic gradient descent is an approach used in deep learning in which gradients are computed on a random subset of the total dataset and then used to make one step of gradient descent.

Federated stochastic gradient descent[24] is the analog of this algorithm in the federated setting: instead of a random subset of the data, it uses a random subset of the nodes, each node using all of its data. The server averages the gradients in proportion to the number of training samples on each node and uses the average to make a gradient descent step.
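A single FedSGD step can be sketched as follows, assuming a linear least-squares model so that each node's gradient has a closed form (an illustrative choice, not part of the algorithm). Each selected node computes the gradient of its mean loss on all of its data; the server weights each gradient by the node's sample count and takes one gradient descent step. The names `node_gradient`, `fedsgd_step`, and `lr` are hypothetical.

```python
import numpy as np


def node_gradient(w: np.ndarray, X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Gradient of the mean squared error (1/n) * ||X w - y||^2 on one node's full dataset."""
    return 2.0 * X.T @ (X @ w - y) / len(y)


def fedsgd_step(w, node_data, selected, lr):
    """One FedSGD update: sample-size-weighted average of node gradients, then one step."""
    sizes = np.array([len(node_data[k][1]) for k in selected], dtype=float)
    grads = np.stack([node_gradient(w, *node_data[k]) for k in selected])
    avg_grad = (sizes[:, None] * grads).sum(axis=0) / sizes.sum()
    return w - lr * avg_grad


rng = np.random.default_rng(0)
true_w = np.array([1.0, -2.0, 0.5])
node_data = []
for n in (20, 50, 80):                          # unbalanced node sizes
    X = rng.normal(size=(n, 3))
    node_data.append((X, X @ true_w))

w = np.zeros(3)
for _ in range(200):
    selected = rng.choice(len(node_data), size=2, replace=False)  # random subset of nodes
    w = fedsgd_step(w, node_data, selected, lr=0.1)
print(w)
```

Weighting by sample count matters: when every node participates, the weighted average equals the gradient of the mean loss over the pooled data, so the step is identical to one step of centralized full-batch gradient descent.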

Federated averaging (FedAvg)

Federated averaging (FedAvg) is a generalization of FedSGD that allows nodes to perform more than one batch update on local data and to exchange updated weights rather than gradients. This reduces communication and, if all nodes start from the same weights, a single local gradient step followed by weight averaging is equivalent to FedSGD. Additional local updates do not seem to hurt the resulting averaged model's performance compared to FedSGD.[25] FedAvg variations based on adaptive optimizers such as Adam and AdaGrad have been proposed, and they tend to outperform FedAvg.[26]
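FedAvg can be sketched in the same spirit, again assuming a linear least-squares model (an illustrative choice). Each selected node starts from the current global weights and runs several full-batch gradient steps locally; the server then averages the returned weights in proportion to the nodes' sample counts. With `local_steps=1`, the result coincides with one FedSGD step, which is the equivalence mentioned above. All names are hypothetical.

```python
import numpy as np


def mse_gradient(w, X, y):
    """Gradient of (1/n) * ||X w - y||^2."""
    return 2.0 * X.T @ (X @ w - y) / len(y)


def local_update(w_global, X, y, local_steps, lr):
    """Several local gradient descent steps starting from the global weights."""
    w = w_global.copy()
    for _ in range(local_steps):
        w -= lr * mse_gradient(w, X, y)
    return w


def fedavg_round(w_global, node_data, selected, local_steps, lr):
    """One FedAvg round: nodes train locally, the server takes the sample-weighted mean of weights."""
    sizes = np.array([len(node_data[k][1]) for k in selected], dtype=float)
    local_ws = np.stack([local_update(w_global, *node_data[k], local_steps, lr)
                         for k in selected])
    return (sizes[:, None] * local_ws).sum(axis=0) / sizes.sum()


rng = np.random.default_rng(0)
true_w = np.array([1.0, -2.0, 0.5])
node_data = []
for n in (20, 50, 80):
    X = rng.normal(size=(n, 3))
    node_data.append((X, X @ true_w))

w = np.zeros(3)
for _ in range(200):
    w = fedavg_round(w, node_data, [0, 1, 2], local_steps=5, lr=0.1)
print(w)
```

Exchanging weights after five local steps instead of gradients after one means the same amount of local computation needs five times fewer communication rounds, which is the communication saving the algorithm is designed for.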

Federated Proximal (FedProx)

Federated Proximal (FedProx) extends FedAvg by adding a proximal term to the local objective in the form of $\frac{\mu}{2}\|w - w^t\|^2$, where $w^t$ is the current global model and $\mu$ is the FedProx hyperparameter, in order to address data heterogeneity across clients. The proximal term constrains local updates, helping reduce client drift when client data are non-IID.[27]
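The proximal term $\frac{\mu}{2}\|w - w^t\|^2$ changes only the local training step: its gradient $\mu(w - w^t)$ is added to the gradient of the local loss. The sketch below assumes a least-squares local loss and plain gradient descent as the local solver (both illustrative choices; FedProx allows inexact local solvers in general). Setting `mu=0` recovers the plain FedAvg local update.

```python
import numpy as np


def fedprox_local_update(w_global, X, y, mu, local_steps, lr):
    """Local gradient descent on F_k(w) + (mu/2) * ||w - w_global||^2,
    where F_k is assumed to be the mean squared error of a linear model."""
    w = w_global.copy()
    for _ in range(local_steps):
        grad_loss = 2.0 * X.T @ (X @ w - y) / len(y)
        grad_prox = mu * (w - w_global)          # gradient of the proximal term
        w -= lr * (grad_loss + grad_prox)
    return w


rng = np.random.default_rng(0)
X = rng.normal(size=(40, 3))
y = X @ np.array([3.0, 0.0, -3.0])              # this node's optimum is far from the global model
w_global = np.zeros(3)
for mu in (0.0, 0.1, 1.0):
    w_k = fedprox_local_update(w_global, X, y, mu=mu, local_steps=20, lr=0.05)
    print(mu, np.linalg.norm(w_k - w_global))   # drift from the global model shrinks as mu grows
```

Larger values of `mu` keep each node closer to the global model, trading some local fit for less client drift on non-IID data.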

Federated learning with dynamic regularization (FedDyn)

Federated learning methods suffer when node datasets are distributed heterogeneously, because minimizing the node losses is then not the same as minimizing the global loss. In 2021, Acar et al.[28] introduced a solution called FedDyn, which dynamically regularizes each node's loss function so that the local losses align with the global loss. Since the local losses are aligned, FedDyn is robust to different heterogeneity levels and can therefore safely perform full minimization on each device. In theory, FedDyn converges to the optimum (a stationary point for nonconvex losses) regardless of the heterogeneity level. These claims are supported by extensive experiments on various datasets.[28]
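A compact sketch of FedDyn under simplifying assumptions: quadratic (least-squares) local losses, so that each node's regularized subproblem can be minimized exactly with a linear solve, and full participation in every round. The update rules follow our reading of Acar et al.: node $k$ minimizes $L_k(\theta) - \langle g_k, \theta \rangle + \frac{\alpha}{2}\|\theta - \theta^{t-1}\|^2$, where $g_k$ is a stored per-node state, then sets $g_k \leftarrow g_k - \alpha(\theta_k^t - \theta^{t-1})$; the server keeps a state $h$ and takes the mean of the local models minus $h/\alpha$. The value of `alpha` and all names are illustrative.

```python
import numpy as np


def feddyn(node_data, alpha=10.0, rounds=300):
    """FedDyn with full participation and exact local minimization.
    Local loss (assumed): L_k(theta) = 0.5 * ||X_k theta - y_k||^2."""
    m = len(node_data)
    d = node_data[0][0].shape[1]
    theta = np.zeros(d)                          # server model
    h = np.zeros(d)                              # server state
    g = [np.zeros(d) for _ in range(m)]          # per-node linear-term states
    for _ in range(rounds):
        local = []
        for k, (X, y) in enumerate(node_data):
            # argmin L_k(t) - <g_k, t> + (alpha/2)||t - theta||^2, via its zero-gradient condition
            A = X.T @ X + alpha * np.eye(d)
            b = X.T @ y + g[k] + alpha * theta
            theta_k = np.linalg.solve(A, b)
            g[k] = g[k] - alpha * (theta_k - theta)    # now equals grad L_k(theta_k)
            local.append(theta_k)
        local = np.array(local)
        h = h - alpha * (local - theta).sum(axis=0) / m
        theta = local.mean(axis=0) - h / alpha
    return theta


rng = np.random.default_rng(0)
node_data = []
for true_w in ([1.0, 0.0, 2.0], [-1.0, 3.0, 0.0], [0.5, -2.0, 1.0]):   # heterogeneous nodes
    X = rng.normal(size=(30, 3))
    node_data.append((X, X @ np.array(true_w) + 0.1 * rng.normal(size=30)))

theta = feddyn(node_data)
X_all = np.vstack([X for X, _ in node_data])
y_all = np.concatenate([y for _, y in node_data])
centralized = np.linalg.lstsq(X_all, y_all, rcond=None)[0]
print(np.abs(theta - centralized).max())
```

Even though each node fully minimizes its own regularized loss and the nodes' individual optima disagree, the server model ends up at the minimizer of the sum of the local losses, i.e. the centralized least-squares solution.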

Besides reducing communication, it is also beneficial to reduce local computation. To do this, FedDynOneGD [28] modifies FedDyn to calculate only one gradient per node per round, regularizes it and updates the global model with it. Hence, the computational complexity is linear in local dataset size. Moreover, gradient computation can be parallelized on each node, unlike successive SGD steps. In theory, FedDynOneGD achieves the same convergence guarantees as in FedDyn with less local computation.[28]

Personalized federated learning by pruning (Sub-FedAvg)

Federated learning methods have poor global performance under non-IID settings, which motivates clients to train personalized models within the federation. To address this, Vahidian et al.[29] recently introduced the algorithm Sub-FedAvg, which performs hybrid pruning (structured and unstructured pruning) with averaging on the intersection of clients' drawn subnetworks. This simultaneously addresses communication efficiency, resource constraints and personalized model accuracy.[29]

Sub-FedAvg also extends the "lottery ticket hypothesis" of centrally trained neural networks to federated learning, with the research question: "Do winning tickets exist for clients' neural networks being trained in federated learning? If so, how to effectively draw the personalized subnetworks for each client?" Sub-FedAvg experimentally answers "yes"[29] and proposes two algorithms to effectively draw the personalized subnetworks.[29]
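The aggregation step that Sub-FedAvg builds on can be illustrated with binary pruning masks. In this minimal sketch, which is our simplified reading rather than the authors' implementation, each client sends its parameters together with a mask of the weights its subnetwork keeps; the server averages each weight only over the clients whose mask contains it, and weights kept by no client retain their previous global value. The pruning procedure itself is not shown, and `masked_average` is a hypothetical name.

```python
import numpy as np


def masked_average(global_w, client_ws, client_masks):
    """Average each parameter only over the clients whose mask keeps it."""
    ws = np.stack(client_ws)
    masks = np.stack(client_masks).astype(float)
    counts = masks.sum(axis=0)                   # how many subnetworks contain each weight
    summed = (ws * masks).sum(axis=0)
    # Weights that no client kept fall back to their previous global value.
    return np.where(counts > 0, summed / np.maximum(counts, 1), global_w)


global_w = np.array([9.0, 9.0, 9.0, 9.0])
w1, m1 = np.array([1.0, 2.0, 3.0, 4.0]), np.array([1, 1, 0, 0])
w2, m2 = np.array([3.0, 4.0, 5.0, 6.0]), np.array([1, 0, 1, 0])
print(masked_average(global_w, [w1, w2], [m1, m2]))   # [2. 2. 5. 9.]
```

Only the first weight is shared by both subnetworks, so it is the only one actually averaged across clients; the others are taken from the single client that kept them.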

Dynamic aggregation - inverse distance aggregation

IDA (Inverse Distance Aggregation) is a novel adaptive weighting approach for federated learning nodes based on meta-information which handles unbalanced and non-iid data. It uses the distance of the model parameters as a strategy to minimize the effect of outliers and improve the model's convergence rate.[30]
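A minimal sketch of inverse distance weighting, under the assumption (our reading of the cited paper, not checked against its code) that each node's aggregation weight is inversely proportional to the distance between its parameters and the plain average of all received parameters, normalized to sum to one. The small `eps` is a hypothetical guard against division by zero when a node coincides with the average.

```python
import numpy as np


def inverse_distance_aggregate(client_ws, eps=1e-12):
    """Aggregate parameter vectors with weights proportional to 1 / ||w_k - mean||."""
    ws = np.stack(client_ws)
    mean = ws.mean(axis=0)
    dists = np.linalg.norm(ws - mean, axis=1)
    inv = 1.0 / (dists + eps)
    coeffs = inv / inv.sum()
    return coeffs @ ws, coeffs


clients = [np.array([1.0, 1.0]), np.array([1.1, 0.9]),
           np.array([0.9, 1.1]), np.array([10.0, 10.0])]   # the last node is an outlier
aggregated, coeffs = inverse_distance_aggregate(clients)
print(coeffs)       # the outlier receives the smallest weight
print(aggregated)   # pulled much less towards the outlier than the plain mean [3.25, 3.25]
```

Compared with plain averaging, the outlying node still contributes, but with a reduced weight, which is the mechanism by which IDA limits the influence of outliers.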

Hybrid federated dual coordinate ascent (HyFDCA)

Very few methods for hybrid federated learning, where clients only hold subsets of both features and samples, exist. Yet, this scenario is very important in practical settings. Hybrid Federated Dual Coordinate Ascent (HyFDCA)[31] is a novel algorithm proposed in 2024 that solves convex problems in the hybrid FL setting. This algorithm extends CoCoA, a primal-dual distributed optimization algorithm introduced by Jaggi et al. (2014)[32] and Smith et al. (2017),[33] to the case where both samples and features are partitioned across clients.

HyFDCA claims several improvements over existing algorithms:

- HyFDCA is a provably convergent primal-dual algorithm for hybrid FL in at least the following settings:

  - Hybrid Federated Setting with Complete Client Participation.

  - Horizontal Federated Setting with Random Subsets of Available Clients. The authors show that HyFDCA enjoys a convergence rate of O(1⁄t), which matches the convergence rate of FedAvg.[34]

  - Vertical Federated Setting with Incomplete Client Participation. The authors show that HyFDCA enjoys a convergence rate of O(log(t)⁄t), whereas FedBCD[35] exhibits a slower O(1⁄sqrt(t)) convergence rate and requires full client participation.

- HyFDCA provides the privacy steps that ensure privacy of client data in the primal-dual setting. These principles apply to future efforts in developing primal-dual algorithms for FL.

- HyFDCA empirically outperforms HyFEM and FedAvg in loss function value and validation accuracy across a multitude of problem settings and datasets (see below for more details). The authors also introduce a hyperparameter selection framework for FL with competing metrics using ideas from multiobjective optimization.

There is only one other algorithm that focuses on hybrid FL, HyFEM, proposed by Zhang et al. (2020).[36] This algorithm uses a feature matching formulation that balances clients building accurate local models and the server learning an accurate global model. This requires a matching regularizer constant that must be tuned based on user goals and results in disparate local and global models. Furthermore, the convergence results provided for HyFEM only prove convergence of the matching formulation, not of the original global problem. This work is substantially different from HyFDCA's approach, which uses data on local clients to build a global model that converges to the same solution as if the model were trained centrally. Furthermore, in HyFDCA the local and global models are synchronized and do not require the adjustment of a matching parameter between local and global models. However, HyFEM is suitable for a vast array of architectures, including deep learning architectures, whereas HyFDCA is designed for convex problems like logistic regression and support vector machines.

HyFDCA is empirically benchmarked against the aforementioned HyFEM as well as the popular FedAvg in solving convex problems (specifically classification problems) on several popular datasets (MNIST, Covtype, and News20). The authors found that HyFDCA converges to a lower loss value and higher validation accuracy in less overall time in 33 of 36 comparisons examined, and in 36 of 36 comparisons examined with respect to the number of outer iterations.[31] Lastly, HyFDCA requires tuning only one hyperparameter, the number of inner iterations, as opposed to FedAvg (which requires tuning three) or HyFEM (which requires tuning four). Because the hyperparameters of FedAvg and HyFEM are quite difficult to optimize and greatly affect convergence, HyFDCA's single hyperparameter allows for simpler practical implementations and hyperparameter selection methodologies.

Current research topics

Federated learning emerged as an important research topic in 2015[2] and 2016,[37] with the first publications on federated averaging in telecommunication settings. Before that, a thesis titled "A Framework for Multi-source Prefetching Through Adaptive Weight"[38] proposed an approach to aggregate predictions from multiple models trained at three locations of a request-response cycle. Another important aspect of active research is the reduction of the communication burden during the federated learning process. In 2017 and 2018, publications emphasized the development of resource allocation strategies, especially to reduce communication[25] requirements[39] between nodes with gossip algorithms,[40] as well as the characterization of robustness to differential privacy attacks.[41] Other research activities focus on reducing bandwidth during training through sparsification and quantization methods,[39] where the machine learning models are sparsified and/or compressed before they are shared with other nodes. Developing ultra-light DNN architectures is essential for on-device and edge learning, and recent work recognises both the energy efficiency requirements[42] for future federated learning and the need to compress deep learning, especially during learning.[43]
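The sparsification and quantization ideas can be illustrated on a single model update. This sketch combines two generic techniques chosen for illustration rather than taken from the cited works: top-k sparsification, which keeps only the k largest-magnitude entries and sends their indices, and uniform 8-bit quantization of the kept values with one scale factor per update. The value of `k` and the 8-bit width are assumptions, and the function names are hypothetical.

```python
import numpy as np


def compress_update(update: np.ndarray, k: int):
    """Keep the k largest-magnitude entries and quantize them to int8 with one scale factor."""
    flat = update.ravel()
    idx = np.argpartition(np.abs(flat), -k)[-k:]       # indices of the top-k magnitudes
    values = flat[idx]
    max_abs = float(np.abs(values).max())
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.round(values / scale).astype(np.int8)       # each kept value now fits in one byte
    return idx.astype(np.int32), q, scale, update.shape


def decompress_update(idx, q, scale, shape):
    """Rebuild a dense update; entries that were not sent are zero."""
    flat = np.zeros(int(np.prod(shape)), dtype=np.float32)
    flat[idx] = q.astype(np.float32) * scale
    return flat.reshape(shape)


rng = np.random.default_rng(0)
update = rng.normal(size=(100, 10)).astype(np.float32)   # 1,000 float32 entries = 4,000 bytes
idx, q, scale, shape = compress_update(update, k=50)
restored = decompress_update(idx, q, scale, shape)
print(np.count_nonzero(restored), float(np.abs(restored - update).max()))
```

For this 1,000-entry update, the payload is 50 four-byte indices plus 50 one-byte values and a single scale, roughly 250 bytes instead of 4,000; the price is that the dropped entries are lost and each kept entry carries a rounding error of at most half the scale.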

Recent research has started to consider real-world propagation channels,[44] whereas previous implementations assumed ideal channels. Another active research direction is developing federated learning for training heterogeneous local models with varying computation complexities while producing a single powerful global inference model.[7]

A learning framework named assisted learning was recently developed to improve each agent's learning capabilities without transmitting private data, models, or even learning objectives.[45] Compared with federated learning, which often requires a central controller to orchestrate learning and optimization, assisted learning aims to provide protocols for the agents to optimize and learn among themselves without a global model.

Use cases

Federated learning typically applies when individual actors need to train models on larger datasets than their own but cannot afford to share the data itself with others (e.g., for legal, strategic or economic reasons). However, the technology requires good connections between local servers and a minimum of computational power for each node.[3]

Transportation: self-driving cars

Self-driving cars rely on many machine learning technologies to function: computer vision for analyzing obstacles, and machine learning for adapting their pace to the environment (e.g., bumpiness of the road). Due to the potentially high number of self-driving cars and the need for them to respond quickly to real-world situations, a traditional cloud-based approach may create safety risks. Federated learning can represent a solution for limiting the volume of data transfer and accelerating learning processes.[46][47]

Industry 4.0: smart manufacturing

In Industry 4.0, there is widespread adoption of machine learning techniques[48] to improve the efficiency and effectiveness of industrial processes while guaranteeing a high level of safety. Nevertheless, the privacy of sensitive data is of paramount importance for industries and manufacturing companies. Federated learning algorithms can be applied to these problems because they do not require disclosing the raw sensitive data.[37] In addition, FL has been applied to PM2.5 prediction to support smart city sensing applications.[49]

Medicine: digital health

Federated learning seeks to address the problem of data governance and privacy by training algorithms collaboratively without exchanging the data itself. Today's standard approach of centralizing data from multiple centers comes at the cost of critical concerns regarding patient privacy and data protection. To address this problem, the ability to train machine learning models at scale across multiple medical institutions without moving the data is a critical technology. npj Digital Medicine published the paper "The Future of Digital Health with Federated Learning"[50] in September 2020, in which the authors explore how federated learning may provide a solution for the future of digital health and highlight the challenges and considerations that need to be addressed. More recently, a collaboration of 20 institutions around the world validated the utility of training AI models using federated learning. In a paper published in Nature Medicine, "Federated learning for predicting clinical outcomes in patients with COVID-19",[51] they showcased the accuracy and generalizability of a federated AI model for predicting the oxygen needs of patients with COVID-19 infections. Furthermore, in the paper "A Systematic Review of Federated Learning in the Healthcare Area: From the Perspective of Data Properties and Applications", the authors summarize the challenges of FL from a medical data-centric perspective.[52]

A coalition from industry and academia has developed MedPerf,[53] an open-source platform that enables validation of medical AI models on real-world data. Technically, the platform relies on federated evaluation of AI models to alleviate patient privacy concerns; conceptually, it relies on diverse benchmark committees to build the specifications of neutral, clinically impactful benchmarks.[54]

Robotics

Robotics includes a wide range of applications of machine learning methods, from perception and decision-making to control. As robotic technologies have increasingly been deployed beyond simple and repetitive tasks (e.g. repetitive manipulation) to complex and unpredictable tasks (e.g. autonomous navigation), the need for machine learning grows. Federated learning offers a way to improve on conventional machine learning training methods. In one study,[55] mobile robots learned navigation over diverse environments using an FL-based method, which helped generalization. In another,[56] federated learning is applied to improve multi-robot navigation under limited communication bandwidth, a current challenge in real-world learning-based robotic tasks. In a third,[57] federated learning is used to learn vision-based navigation, helping achieve better sim-to-real transfer.

Biometrics

Federated Learning (FL) is transforming biometric recognition by enabling collaborative model training across distributed data sources while preserving privacy. By eliminating the need to share sensitive biometric templates like fingerprints, facial images, and iris scans, FL addresses privacy concerns and regulatory constraints, allowing for improved model accuracy and generalizability. It mitigates challenges of data fragmentation by leveraging scattered datasets, making it particularly effective for diverse biometric applications such as facial and iris recognition. However, FL faces challenges, including model and data heterogeneity, computational overhead, and vulnerability to security threats like inference attacks. Future directions include developing personalized FL frameworks, enhancing system efficiency, and expanding FL applications to biometric presentation attack detection (PAD) and quality assessment, fostering innovation and robust solutions in privacy-sensitive environments.[58]

References

- ↑ Kairouz, Peter; McMahan, H. Brendan; Avent, Brendan; Bellet, Aurélien; Bennis, Mehdi; Bhagoji, Arjun Nitin; Bonawitz, Kallista; Charles, Zachary et al. (2021-06-22). "Advances and Open Problems in Federated Learning" (in English). Foundations and Trends in Machine Learning 14 (1–2): 1–210. doi:10.1561/2200000083. ISSN 1935-8237. https://www.nowpublishers.com/article/Details/MAL-083.

- ↑ 2.0 2.1 Konečný, Jakub; McMahan, Brendan; Ramage, Daniel (2015). "Federated Optimization: Distributed Optimization Beyond the Datacenter". arXiv:1511.03575 [cs.LG].

- ↑ 3.00 3.01 3.02 3.03 3.04 3.05 3.06 3.07 3.08 3.09 3.10 3.11 3.12 3.13 3.14 Kairouz, Peter; Brendan McMahan, H.; Avent, Brendan; Bellet, Aurélien; Bennis, Mehdi; Arjun Nitin Bhagoji; Bonawitz, Keith; Charles, Zachary; Cormode, Graham; Cummings, Rachel; D'Oliveira, Rafael G. L.; Salim El Rouayheb; Evans, David; Gardner, Josh; Garrett, Zachary; Gascón, Adrià; Ghazi, Badih; Gibbons, Phillip B.; Gruteser, Marco; Harchaoui, Zaid; He, Chaoyang; He, Lie; Huo, Zhouyuan; Hutchinson, Ben; Hsu, Justin; Jaggi, Martin; Javidi, Tara; Joshi, Gauri; Khodak, Mikhail; et al. (10 December 2019). "Advances and Open Problems in Federated Learning". arXiv:1912.04977 [cs.LG].

- ↑ Pokhrel, Shiva Raj; Choi, Jinho (2020). "Federated Learning with Blockchain for Autonomous Vehicles: Analysis and Design Challenges". IEEE Transactions on Communications 68 (8): 4734–4746. doi:10.1109/TCOMM.2020.2990686. Bibcode: 2020ITCom..68.4734P.

- ↑ Xu, Zirui; Yu, Fuxun; Xiong, Jinjun; Chen, Xiang (December 2021). "Helios: Heterogeneity-Aware Federated Learning with Dynamically Balanced Collaboration". 2021 58th ACM/IEEE Design Automation Conference (DAC). pp. 997–1002. doi:10.1109/DAC18074.2021.9586241. ISBN 978-1-6654-3274-0. https://ieeexplore.ieee.org/document/9586241/.

- ↑ Shamsian, Aviv; Navon, Aviv; Fetaya, Ethan; Chechik, Gal (2021). "Personalized Federated Learning using Hypernetworks". Proceedings of the 38th International Conference on Machine Learning (PMLR) 139: 9489–9502. https://proceedings.mlr.press/v139/shamsian21a/shamsian21a.pdf.

- ↑ 7.0 7.1 7.2 7.3 7.4 7.5 7.6 Diao, Enmao; Ding, Jie; Tarokh, Vahid (2020-10-02). "HeteroFL: Computation and Communication Efficient Federated Learning for Heterogeneous Clients". arXiv:2010.01264 [cs.LG].

- ↑ Yu, Fuxun; Zhang, Weishan; Qin, Zhuwei; Xu, Zirui; Wang, Di; Liu, Chenchen; Tian, Zhi; Chen, Xiang (2021-08-14). "Fed2". Proceedings of the 27th ACM SIGKDD Conference on Knowledge Discovery & Data Mining. KDD '21. New York, NY, USA: Association for Computing Machinery. pp. 2066–2074. doi:10.1145/3447548.3467309. ISBN 978-1-4503-8332-5. https://doi.org/10.1145/3447548.3467309.

- ↑ Litany, Or; Maron, Haggai; Acuna, David; Kautz, Jan; Chechik, Gal; Fidler, Sanja (2022-01-20). "Federated Learning with Heterogeneous Architectures using Graph HyperNetworks".

- ↑ Vanhaesebrouck, Paul; Bellet, Aurélien; Tommasi, Marc (2017). "Decentralized Collaborative Learning of Personalized Models over Networks".

- ↑ Savazzi, Stefano; Nicoli, Monica; Rampa, Vittorio (May 2020). "Federated Learning With Cooperating Devices: A Consensus Approach for Massive IoT Networks". IEEE Internet of Things Journal 7 (5): 4641–4654. doi:10.1109/JIOT.2020.2964162. Bibcode: 2020IITJ....7.4641S.

- ↑ Bonawitz, Keith; Eichner, Hubert et al. (2019). "Towards federated learning at scale: system design".

- ↑ Gupta, Otkrist; Raskar, Ramesh (2018-10-14). "Distributed learning of deep neural network over multiple agents". arXiv:1810.06060 [cs.LG].

- ↑ Vepakomma, Praneeth; Gupta, Otkrist; Swedish, Tristan; Raskar, Ramesh (2018-12-03). "Split learning for health: Distributed deep learning without sharing raw patient data". arXiv:1812.00564 [cs.LG].

- ↑ Hsieh, Kevin; Phanishayee, Amar; Mutlu, Onur; Gibbons, Phillip (2020-11-21). "The Non-IID Data Quagmire of Decentralized Machine Learning". International Conference on Machine Learning (PMLR): 4387–4398. http://proceedings.mlr.press/v119/hsieh20a.html.

- ↑ Jiang, Zhanhong; Yadaw, Mukesh; Hegde, Chinmay; Sarkar, Soumik (2017). "Collaborative Deep Learning in Fixed Topology Networks".

- ↑ Daily, Jeff; Vishnu, Abhinav; Siegel, Charles; Warfel, Thomas; Amatya, Vinay (2018). "GossipGraD: Scalable Deep Learning using Gossip Communication based Asynchronous Gradient Descent".

- ↑ El Mestari, Soumia Zohra; Zuziak, Maciej Krzysztof; Lenzini, Gabriele (2025-09-16). "Poison to detect: Detection of targeted overfitting in federated learning". arXiv:2509.11974 [cs.CR].

- ↑ Bagdasaryan, Eugene; Veit, Andreas; Hua, Yiqing (2019-08-06). "How To Backdoor Federated Learning". arXiv:1807.00459 [cs.CR].

- ↑ Diao, Enmao; Ding, Jie; Tarokh, Vahid (2021-06-02). "SemiFL: Communication Efficient Semi-Supervised Federated Learning with Unlabeled Clients". OCLC 1269554828.

- ↑ "Apache Wayang - Home". https://wayang.apache.org.

- ↑ 22.0 22.1 Barbereau, Tom; Delgado Fernandez, Joaquin; Potenciano Menci, Sergio (2025). "The governance of federated learning: a decision framework for organisational archetypes". Data & Policy 7. doi:10.1017/dap.2025.10020. ISSN 2632-3249. https://www.cambridge.org/core/product/identifier/S2632324925100205/type/journal_article.

- ↑ Eden, Rebekah; Chukwudi, Ignatius; Bain, Chris; Barbieri, Sebastiano; Callaway, Leonie; de Jersey, Susan; George, Yasmeen; Gorse, Alain-Dominique et al. (2025-07-10). "A scoping review of the governance of federated learning in healthcare". npj Digital Medicine 8 (1): 427. doi:10.1038/s41746-025-01836-3. ISSN 2398-6352. PMID 40640574.

- ↑ Shokri, R.; Shmatikov, V. (2015). "Privacy Preserving Deep Learning".

- ↑ 25.0 25.1 McMahan, H. Brendan et al. (2017). "Communication-Efficient Learning of Deep Networks from Decentralized Data".

- ↑ Reddi, Sashank; Charles, Zachary; Zaheer, Manzil; Garrett, Zachary; Rush, Keith; Konečný, Jakub; Kumar, Sanjiv; McMahan, H. Brendan (2021-09-08). "Adaptive Federated Optimization". arXiv:2003.00295 [cs.LG].

- ↑ Li, T. (2020). "Federated Optimization in Heterogeneous Networks". Proceedings of Machine Learning Research 119: 4292–4304.

- ↑ 28.0 28.1 28.2 28.3 Acar, Durmus Alp Emre; Zhao, Yue; Navarro, Ramon Matas; Mattina, Matthew; Whatmough, Paul N.; Saligrama, Venkatesh (2021). "Federated Learning Based on Dynamic Regularization". ICLR.

- ↑ 29.0 29.1 29.2 29.3 Vahidian, Saeed; Morafah, Mahdi; Lin, Bill (2021). "Personalized Federated Learning by Structured and Unstructured Pruning under Data Heterogeneity". ICDCS-W.

- ↑ Yeganeh, Yousef; Farshad, Azade; Navab, Nassir; Albarqouni, Shadi (2020). "Inverse Distance Aggregation for Federated Learning with Non-IID Data". ICDCS-W.

- ↑ 31.0 31.1 Overman, Tom; Blum, Garrett; Klabjan, Diego (2024). "A Primal-Dual Algorithm for Hybrid Federated Learning". Proceedings of the AAAI Conference on Artificial Intelligence 38 (13): 14482–14489. doi:10.1609/aaai.v38i13.29363.

- ↑ Jaggi, M.; Smith, V.; Takáč, M.; Terhorst, J.; Krishnan, S.; Hofmann, T.; Jordan, M. I. (2014). "Communication-efficient distributed dual coordinate ascent". Proceedings of the 27th International Conference on Neural Information Processing Systems 2: 3068–3076.

- ↑ Smith, V.; Forte, S.; Ma, C.; Takáč, M.; Jordan, M. I.; Jaggi, M. (2017). "CoCoA: A general framework for communication-efficient distributed optimization". Journal of Machine Learning Research 18 (1): 8590–8638.

- ↑ McMahan, H. B.; Moore, E.; Ramage, D.; Hampson, S.; Agüera y Arcas, B. (2017). "Communication-efficient learning of deep networks from decentralized data". AISTATS. Proceedings of Machine Learning Research 54: 1273–1282.

- ↑ Liu, Y.; Zhang, X.; Kang, Y.; Li, L.; Chen, T.; Hong, M.; Yang, Q. (2022). "FedBCD: A communication-efficient collaborative learning framework for distributed features". IEEE Transactions on Signal Processing: 1–12.

- ↑ Zhang, X.; Yin, W.; Hong, M.; Chen, T. (2020). "Hybrid federated learning: Algorithms and implementation". NeurIPS-SpicyFL 2020.

- ↑ 37.0 37.1 Konečný, Jakub; McMahan, H. Brendan; Ramage, Daniel; Richtárik, Peter (2016). "Federated Optimization: Distributed Machine Learning for On-Device Intelligence".

- ↑ Berhanu, Yoseph. "A Framework for Multi-source Prefetching Through Adaptive Weight". https://www.academia.edu/8625512.

- ↑ 39.0 39.1 Konečný, Jakub; McMahan, H. Brendan; Yu, Felix X.; Richtárik, Peter; Suresh, Ananda Theertha; Bacon, Dave (2017-10-30). "Federated Learning: Strategies for Improving Communication Efficiency". arXiv:1610.05492 [cs.LG].

- ↑ Blot, Michael et al. (2017). "Gossip training for deep learning".

- ↑ Geyer, Robin C. et al. (2018). "Differentially Private Federated Learning: A Client Level Perspective".

- ↑ Du, Zhiyong; Deng, Yansha; Guo, Weisi; Nallanathan, Arumugam; Wu, Qihui (2021). "Green Deep Reinforcement Learning for Radio Resource Management: Architecture, Algorithm Compression, and Challenges". IEEE Vehicular Technology Magazine 16: 29–39. doi:10.1109/MVT.2020.3015184.

- ↑ "Random sketch learning for deep neural networks in edge computing". Nature Computational Science 1. 2021. https://www.nature.com/articles/s43588-021-00039-6.

- ↑ Amiri, Mohammad Mohammadi; Gunduz, Deniz (2020-02-10). "Federated Learning over Wireless Fading Channels". IEEE Transactions on Wireless Communications 19 (5): 3546. doi:10.1109/TWC.2020.2974748. Bibcode: 2020ITWC...19.3546A.

- ↑ Xian, Xun; Wang, Xinran; Ding, Jie; Ghanadan, Reza (2020). "Assisted Learning: A Framework for Multi-Organization Learning". Advances in Neural Information Processing Systems 33. https://proceedings.neurips.cc//paper/2020/hash/a7b23e6eefbe6cf04b8e62a6f0915550-Abstract.html.

- ↑ Pokhrel, Shiva Raj (2020). "Federated learning meets blockchain at 6G edge: A drone-assisted networking for disaster response". Proceedings of the 2nd ACM MobiCom Workshop on Drone Assisted Wireless Communications for 5G and Beyond. pp. 49–54. doi:10.1145/3414045.3415949. ISBN 978-1-4503-8105-5.

- ↑ Elbir, Ahmet M.; Coleri, S. (2020-06-02). "Federated Learning for Vehicular Networks". arXiv:2006.01412 [eess.SP].

- ↑ Cioffi, Raffaele; Travaglioni, Marta; Piscitelli, Giuseppina; Petrillo, Antonella; De Felice, Fabio (2019). "Artificial Intelligence and Machine Learning Applications in Smart Production: Progress, Trends, and Directions". Sustainability 12 (2): 492. doi:10.3390/su12020492.

- ↑ Putra, Karisma Trinanda; Chen, Hsing-Chung; Prayitno; Ogiela, Marek R.; Chou, Chao-Lung; Weng, Chien-Erh; Shae, Zon-Yin (January 2021). "Federated Compressed Learning Edge Computing Framework with Ensuring Data Privacy for PM2.5 Prediction in Smart City Sensing Applications". Sensors 21 (13): 4586. doi:10.3390/s21134586. PMID 34283140. Bibcode: 2021Senso..21.4586P.

- ↑ Rieke, Nicola; Hancox, Jonny; Li, Wenqi; Milletarì, Fausto; Roth, Holger R.; Albarqouni, Shadi; Bakas, Spyridon; Galtier, Mathieu N. et al. (2020-09-14). "The future of digital health with federated learning". npj Digital Medicine 3 (1): 119. doi:10.1038/s41746-020-00323-1. PMID 33015372.

- ↑ Dayan, Ittai; Roth, Holger R.; Zhong, Aoxiao et al. (2021). "Federated learning for predicting clinical outcomes in patients with COVID-19". Nature Medicine 27 (10): 1735–1743. doi:10.1038/s41591-021-01506-3. PMID 34526699.

- ↑ Prayitno; Shyu, Chi-Ren; Putra, Karisma Trinanda; Chen, Hsing-Chung; Tsai, Yuan-Yu; Hossain, K. S. M. Tozammel; Jiang, Wei; Shae, Zon-Yin (January 2021). "A Systematic Review of Federated Learning in the Healthcare Area: From the Perspective of Data Properties and Applications". Applied Sciences 11 (23). doi:10.3390/app112311191.

- ↑ Karargyris, Alexandros; Umeton, Renato; Sheller, Micah J. et al. (2023-07-17). "Federated benchmarking of medical artificial intelligence with MedPerf". Nature Machine Intelligence (Springer Science and Business Media LLC) 5 (7): 799–810. doi:10.1038/s42256-023-00652-2. ISSN 2522-5839. PMID 38706981.

- ↑ "Announcing MedPerf Open Benchmarking Platform for Medical AI". 2023-07-17. https://mlcommons.org/en/news/medperf-nature-mi/.

- ↑ Liu, Boyi; Wang, Lujia; Liu, Ming (2019). "Lifelong Federated Reinforcement Learning: A Learning Architecture for Navigation in Cloud Robotic Systems". 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). pp. 1688–1695. doi:10.1109/IROS40897.2019.8967908. ISBN 978-1-7281-4004-9.

- ↑ Na, Seongin; Rouček, Tomáš; Ulrich, Jiří; Pikman, Jan; Krajník, Tomáš; Lennox, Barry; Arvin, Farshad (2023). "Federated Reinforcement Learning for Collective Navigation of Robotic Swarms". IEEE Transactions on Cognitive and Developmental Systems 15 (4): 1. doi:10.1109/TCDS.2023.3239815. Bibcode: 2023ITCDS..15.2122N.

- ↑ Yu, Xianjia; Queralta, Jorge Pena; Westerlund, Tomi (2022). "Towards Lifelong Federated Learning in Autonomous Mobile Robots with Continuous Sim-to-Real Transfer". Procedia Computer Science 210: 86–93. doi:10.1016/j.procs.2022.10.123.

- ↑ Guo, Jian; Mu, Hengyu; Liu, Xingli; Ren, Hengyi; Han, Chong (2024). "Federated learning for biometric recognition: a survey". Artificial Intelligence Review 57 (8): 208. doi:10.1007/s10462-024-10847-7.

External links

- "Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016" at eur-lex.europa.eu. Retrieved October 18, 2019.

- "Data minimisation and privacy-preserving techniques in AI systems" at UK Information Commissioner's Office. Retrieved July 22, 2020.

- "Realising the Potential of Data Whilst Preserving Privacy with EyA and Conclave from R3" at eya.global. Retrieved March 31, 2022.
