IOPS

From HandWiki - Reading time: 10 min

Input/output operations per second (IOPS, pronounced eye-ops) is an input/output performance measurement used to characterize computer storage devices like hard disk drives (HDD), solid state drives (SSD), and storage area networks (SAN). Like benchmarks, IOPS numbers published by storage device manufacturers do not directly relate to real-world application performance.[1][2]

Background

To meaningfully describe the performance characteristics of any storage device, it is necessary to specify a minimum of three metrics simultaneously: IOPS, response time, and (application) workload. Without simultaneous specification of response time and workload, IOPS figures are essentially meaningless. In isolation, IOPS can be considered analogous to the "revolutions per minute" of an automobile engine: knowing that an engine can spin at 10,000 RPM with its transmission in neutral conveys nothing of value, whereas knowing the torque and horsepower it develops at a given RPM fully describes the capabilities of the engine.

The specific number of IOPS possible in any system configuration will vary greatly, depending upon the variables the tester enters into the program, including the balance of read and write operations, the mix of sequential and random access patterns, the number of worker threads and queue depth, as well as the data block sizes.[1] There are other factors which can also affect the IOPS results including the system setup, storage drivers, OS background operations etc. Also, when testing SSDs in particular, there are preconditioning considerations that must be taken into account.[3]
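The link between IOPS, response time, and queue depth can be made concrete with Little's law, which for a device in steady state says that the average number of outstanding I/Os equals IOPS multiplied by the average response time. The following is a minimal sketch under that assumption; the function names and the sample figures are illustrative and do not come from the cited sources.

```python
def iops_from_latency(queue_depth: float, mean_latency_s: float) -> float:
    """IOPS implied by Little's law: queue_depth = IOPS * mean latency."""
    if mean_latency_s <= 0:
        raise ValueError("mean latency must be positive")
    return queue_depth / mean_latency_s


def latency_from_iops(queue_depth: float, iops: float) -> float:
    """Mean response time (seconds) implied by a given IOPS and queue depth."""
    if iops <= 0:
        raise ValueError("IOPS must be positive")
    return queue_depth / iops


if __name__ == "__main__":
    # Hypothetical workload: 4 outstanding I/Os, 0.4 ms mean response time.
    print(f"{iops_from_latency(4, 0.0004):.0f} IOPS")
    # The same IOPS at queue depth 32 implies a much longer response time.
    print(f"{latency_from_iops(32, 10_000) * 1000:.2f} ms")
```

The second call illustrates why an IOPS figure quoted without its queue depth and response time says little: the same number can correspond to very different latencies.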

Performance characteristics

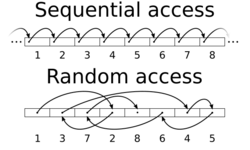

The most common performance characteristics measured are sequential and random operations. Sequential operations access locations on the storage device in a contiguous manner and are generally associated with large data transfer sizes, e.g. ≥ 128 kB. Random operations access locations on the storage device in a non-contiguous manner and are generally associated with small data transfer sizes, e.g. 4 kB.

The most common performance characteristics are as follows:

| Measurement | Description |
|---|---|
| Total IOPS | Total number of I/O operations per second (when performing a mix of read and write tests) |
| Random read IOPS | Average number of random read I/O operations per second |
| Random write IOPS | Average number of random write I/O operations per second |
| Sequential read IOPS | Average number of sequential read I/O operations per second |
| Sequential write IOPS | Average number of sequential write I/O operations per second |

For HDDs and similar electromechanical storage devices, the random IOPS numbers are primarily dependent upon the storage device's random seek time, whereas for SSDs and similar solid state storage devices, the random IOPS numbers are primarily dependent upon the storage device's internal controller and memory interface speeds. On both types of storage devices, the sequential IOPS numbers (especially when using a large block size) typically indicate the maximum sustained bandwidth that the storage device can handle.[1] Sequential IOPS are often reported as a simple megabytes-per-second figure, calculated as follows:

- IOPS × (transfer size in bytes) = (bytes per second)

(Then converted to MB/s.)
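As a small worked sketch of this conversion, the helper below multiplies IOPS by the transfer size and converts the result to MB/s. It assumes decimal units (1 MB = 1,000,000 bytes), which the text above does not specify; the function name is illustrative.

```python
BYTES_PER_MB = 1_000_000  # assumption: decimal megabytes


def throughput_mb_per_s(iops: float, transfer_size_bytes: int) -> float:
    """Convert an IOPS figure at a fixed transfer size to MB/s."""
    bytes_per_second = iops * transfer_size_bytes
    return bytes_per_second / BYTES_PER_MB


if __name__ == "__main__":
    # 175 operations per second at 64,000 bytes each.
    print(throughput_mb_per_s(175, 64_000))  # prints 11.2
```

With binary units (1 MiB = 1,048,576 bytes) the same workload would come out slightly lower, so a reported figure should state which convention it uses.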

Some HDDs and SSDs improve in performance as the number of outstanding I/Os (i.e. the queue depth) increases. This is usually the result of more advanced controller logic on the drive performing command queuing and reordering, commonly called either Tagged Command Queuing (TCQ) or Native Command Queuing (NCQ). Many consumer SATA HDDs either cannot do this, or their implementation is so poor that no performance benefit can be seen. Enterprise-class SATA HDDs, such as the Western Digital Raptor and Seagate Barracuda NL, will improve by nearly 100% with deep queues.[4]

While traditional HDDs have about the same IOPS for read and write operations, many NAND flash-based SSDs and USB sticks are much slower at writing than at reading, because they cannot rewrite directly into a previously written location and must instead perform a procedure called garbage collection.[5][6][7] This has caused hardware test sites to start providing independently measured results when testing IOPS performance.

Flash SSDs, such as the Intel X25-E (released 2008), have much higher IOPS than traditional HDDs. In a test done by Xssist, using Iometer with 4 KB random transfers, a 70/30 read/write ratio, and queue depth 4, the IOPS delivered by the Intel X25-E 64 GB G1 started at around 10,000 IOPS, dropped sharply after 8 minutes to 4,000 IOPS, and continued to decrease gradually for the next 42 minutes. From approximately 50 minutes onwards, IOPS varied between 3,000 and 4,000 for the rest of the 8+ hours the test ran.[8] Even with the drop in random IOPS after the 50th minute, the X25-E still has much higher IOPS than traditional hard disk drives. Some SSDs, including the OCZ RevoDrive 3 x2 PCIe using the SandForce controller, have shown much higher sustained write performance that more closely matches the read speed.[9] Because a typical operating system has many small files (such as DLLs ≤ 128 kB), an SSD is well suited for use as a system drive.[10]

Examples

Mechanical hard drives

The block size used when testing significantly affects the number of IOPS performed by a given drive. See below for some typical performance figures:[11]

| Drive type | RPM | IOPS (4 KB, random) | IOPS (64 KB, random) | MB/s (64 KB, random) | IOPS (512 KB, random) | MB/s (512 KB, random) | MB/s (large block, sequential) |
|---|---|---|---|---|---|---|---|
| SAS | 15 K | 188 – 203 | 175 – 192 | 11.2 – 12.3 | 115 – 135 | 58.9 – 68.9 | 91.5 – 126.3 |
| FC | 15 K | 163 – 178 | 151 – 169 | 9.7 – 10.8 | 97 – 123 | 49.7 – 63.1 | 73.5 – 127.5 |
| FC | 10 K | 142 – 151 | 130 – 143 | 8.3 – 9.2 | 80 – 104 | 40.9 – 53.1 | 58.1 – 107.2 |
| SAS | 10 K | 142 – 151 | 130 – 143 | 8.3 – 9.2 | 80 – 104 | 40.9 – 53.1 | 58.1 – 107.2 |
| SATA | 7200 | 73 – 79 | 69 – 76 | 4.4 – 4.9 | 47 – 63 | 24.3 – 32.1 | 43.4 – 97.8 |
| SATA | 5400 | 57 | 55 | 3.5 | 44 | 22.6 | |
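Since random IOPS on mechanical drives depend mainly on seek time, a common back-of-the-envelope estimate divides one second by the sum of the average seek time and the average rotational latency (half a revolution). The sketch below applies that rule of thumb. The seek times are assumed, illustrative values rather than figures from the cited sources, and the estimate ignores transfer time, caching, and command queuing.

```python
def avg_rotational_latency_s(rpm: float) -> float:
    """Average rotational latency: time for half a revolution, in seconds."""
    return 0.5 * 60.0 / rpm


def estimate_random_iops(rpm: float, avg_seek_s: float) -> float:
    """Rule-of-thumb small-block random IOPS for a single spinning drive."""
    return 1.0 / (avg_seek_s + avg_rotational_latency_s(rpm))


if __name__ == "__main__":
    # Hypothetical average seek times in milliseconds, keyed by spindle speed.
    assumed_seek_ms = {15_000: 3.5, 10_000: 4.5, 7_200: 8.5, 5_400: 11.0}
    for rpm, seek_ms in assumed_seek_ms.items():
        iops = estimate_random_iops(rpm, seek_ms / 1000.0)
        print(f"{rpm} RPM: ~{iops:.0f} IOPS")
```

With these assumed seek times the estimates fall in the same general range as the 4 KB random column above, which is consistent with seek and rotational delay dominating small random transfers on such drives.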

Solid-state devices

| Device | Type | IOPS | Interface | Notes |
|---|---|---|---|---|
| Intel X25-M G2 (MLC) | SSD | ~8,600 IOPS[12] | SATA 3 Gbit/s | Intel's data sheet[13] claims 6,600/8,600 IOPS (80 GB/160 GB version) and 35,000 IOPS for random 4 KB writes and reads, respectively. |
| Intel X25-E (SLC) | SSD | ~5,000 IOPS[14] | SATA 3 Gbit/s | Intel's data sheet[15] claims 3,300 IOPS and 35,000 IOPS for writes and reads, respectively. 5,000 IOPS are measured for a mix. Intel X25-E G1 has around 3 times higher IOPS compared to the Intel X25-M G2.[16] |
| G.Skill Phoenix Pro | SSD | ~20,000 IOPS[17] | SATA 3 Gbit/s | SandForce-1200 based SSD with enhanced firmware; rated at up to 50,000 IOPS, but benchmarking of this particular drive shows ~25,000 IOPS for random read and ~15,000 IOPS for random write.[17] |
| Samsung SSD 850 PRO | SSD | Up to 100,000 read IOPS; up to 90,000 write IOPS[18] | SATA 6 Gbit/s | 4 KB aligned random I/O at QD32; 10,000 read IOPS, 36,000 write IOPS at QD1; 550 MB/s sequential read, 520 MB/s sequential write on 256 GB and larger models; 550 MB/s sequential read, 470 MB/s sequential write on 128 GB model[18] |
| Virident Systems tachIOn | SSD | Up to 320,000 sustained read IOPS using 4 kB blocks, and up to 200,000 sustained write IOPS using 4 kB blocks[19] | PCIe | |
| OCZ RevoDrive 3 X2 | SSD | Up to 200,000 random write 4k IOPS[20] | PCIe | |
| DDRdrive X1 | SSD | Up to 300,000+ (512 B random read IOPS) and 200,000+ (512 B random write IOPS)[21][22][23][24] | PCIe | |
| Samsung SSD 960 EVO | SSD | | NVMe over PCIe 3.0 x4, M.2 | 4 kB aligned random I/O with four workers at QD4 (effectively QD16),[25] 1 TB model; 14,000 read IOPS, 50,000 write IOPS at QD1; 330,000 read IOPS, 330,000 write IOPS on 500 GB model; 300,000 read IOPS, 330,000 write IOPS on 250 GB model; up to 3.2 GB/s sequential read, 1.9 GB/s sequential write[26] |
| Samsung SSD 960 PRO | SSD | Up to 440,000 read IOPS; up to 360,000 write IOPS[26] | NVMe over PCIe 3.0 x4, M.2 | 4 kB aligned random I/O with four workers at QD4 (effectively QD16),[25] 1 and 2 TB models; 14,000 read IOPS, 50,000 write IOPS at QD1; 330,000 read IOPS, 330,000 write IOPS on 512 GB model; up to 3.5 GB/s sequential read, 2.1 GB/s sequential write[26] |
| Kaminario K2 | SSD | Up to 2,000,000 IOPS.[27] 1,200,000 IOPS in SPC-1 benchmark simulating business applications[28][29] | FC | MLC Flash |
| NetApp FAS6240 cluster | Flash/Disk | | NFS, SMB, FC, FCoE, iSCSI | SPECsfs2008 is the latest version of the Standard Performance Evaluation Corporation benchmark suite measuring file server throughput and response time, providing a standardized method for comparing performance across different vendor platforms. |
| EMC DSSD D5 | Flash | Up to 10 million IOPS[31] | PCIe Out of Box, up to 48 clients with high availability. | PCIe Rack Scale Flash Appliance. Product discontinued.[32] |

References

- ↑ 1.0 1.1 1.2 Lowe, Scott (2010-02-12). "Calculate IOPS in a storage array". http://www.techrepublic.com/blog/datacenter/calculate-iops-in-a-storage-array/2182.

- ↑ "Getting The Hang Of IOPS v1.3". 2012-08-03. https://community.broadcom.com/symantecenterprise/communities/community-home/librarydocuments/viewdocument?DocumentKey=e6fb4a1b-fa13-4956-b763-8134185c0c0a&CommunityKey=63b01f30-d5eb-43c7-9232-72362b508207&tab=librarydocuments.

- ↑ Smith, Kent (2009-08-11). "Benchmarking SSDs: The Devil is in the Preconditioning Details". http://www.flashmemorysummit.com/English/Collaterals/Proceedings/2009/20090811_F2A_Smith.pdf.

- ↑ "SATA in the Enterprise - A 500 GB Drive Roundup | StorageReview.com - Storage Reviews". 2006-07-13. http://www.storagereview.com/articles/200607/500_6.html.

- ↑ Xiao-yu Hu; Eleftheriou, Evangelos; Haas, Robert; Iliadis, Ilias; Pletka, Roman (2009-05-04). "Write Amplification Analysis in Flash-Based Solid State Drives". SYSTOR 2009: The Israeli Experimental Systems Conference. IBM. ISBN 978-1-60558-623-6.

- ↑ "SSDs - Write Amplification, TRIM and GC". OCZ Technology. http://www.oczenterprise.com/whitepapers/ssds-write-amplification-trim-and-gc.pdf.

- ↑ "Intel Solid State Drives". Intel. http://www.intel.com/cd/channel/reseller/asmo-na/eng/products/nand/feature/index.htm.

- ↑ "Intel X25-E 64GB G1, 4KB Random IOPS, iometer benchmark". 2010-03-27. http://www.xssist.com/blog/Intel%20X25-E%2064GB%20G1,%204KB%2070%2030%20RW%20Random%20IOPS,%20iometer%20benchmark.htm.

- ↑ "OCZ RevoDrive 3 x2 PCIe SSD Review – 1.5GB Read/1.25GB Write/200,000 IOPS As Little As $699". 2011-06-28. http://thessdreview.com/our-reviews/ocz-revodrive-3-x2-480-gb-pcie-ssd-review-1-5gb-read1-25gb-write200000-iops-for-699/.

- ↑ "SSD vs. HDD: Which Do You Need?". https://www.avast.com/c-ssd-vs-hdd.

- ↑ "RAID Performance Calculator - WintelGuy.com". https://wintelguy.com/raidperf.pl.

- ↑ Schmid, Patrick; Roos, Achim (2008-09-08). "Intel's X25-M Solid State Drive Reviewed". http://www.tomshardware.com/reviews/Intel-x25-m-SSD,2012.html.

- ↑ "Intel X18-M/X25-M SATA Solid State Drive — 34 nm Product Line". Intel. January 2010. http://download.intel.com/design/flash/nand/mainstream/322296.pdf.

- ↑ Schmid, Patrick; Roos, Achim (27 February 2009). "Intel's X25-E SSD Walks All Over The Competition: They Did It Again: X25-E For Servers Takes Off". http://www.tomshardware.com/reviews/intel-x25-e-ssd,2158.html.

- ↑ "Intel X25-E SATA Solid State Drive: Product Manual". Intel. January 2009. http://download.intel.com/design/flash/nand/extreme/extreme-sata-ssd-datasheet.pdf.

- ↑ "Intel X25-E G1 vs Intel X25-M G2 Random 4 KB IOPS, iometer". May 2010. http://www.xssist.com/blog/%5BSSD%5D_Comparison_of_Intel_X25-E_G1_vs_Intel_X25-M_G2.htm.

- ↑ 17.0 17.1 "G.Skill Phoenix Pro 120 GB Test - SandForce SF-1200 SSD mit 50K IOPS - HD Tune Access Time IOPS (Diagramme) (5/12)". http://www.tweakpc.de/hardware/tests/ssd/gskill_phoenix_pro/s05.php.

- ↑ 18.0 18.1 "Samsung SSD 850 PRO Specifications". Samsung Electronics. http://www.samsung.com/semiconductor/minisite/ssd/product/consumer/850pro.html.

- ↑ "Virident's tachIOn SSD flashes by". theregister.co.uk. https://www.theregister.co.uk/2010/06/16/virident_tachion/.

- ↑ "OCZ RevoDrive 3 X2 480GB Review | StorageReview.com - Storage Reviews". 2011-06-28. http://www.storagereview.com/ocz_revodrive_3_x2_480gb_review.

- ↑ "Introducing the DDRdrive X1: Redefining Solid-State Storage". http://www.ddrdrive.com/ddrdrive_press.pdf.

- ↑ "DDRDrive - The drive for speed". http://www.ddrdrive.com/ddrdrive_brief.pdf.

- ↑ "DDRDrive - The drive for speed". http://www.ddrdrive.com/ddrdrive_bench.pdf.

- ↑ Allyn Malventano (2009-05-04). "DDRdrive hits the ground running - PCI-E RAM-based SSD | PC Perspective". http://www.pcper.com/reviews/Storage/DDRdrive-hits-ground-running-PCI-E-RAM-based-SSD.

- ↑ 25.0 25.1 Ramseyer, Chris (18 October 2016). "Samsung 960 Pro SSD Review". http://www.tomshardware.com/reviews/samsung-960-pro-ssd-review,4774.html. "Samsung tests NVMe products with four workers at QD4"

- ↑ 26.0 26.1 26.2 Cite error: Invalid <ref> tag; no text was provided for refs named Samsung-SSD960.

- ↑ Lyle Smith. "Kaminario Boasts Over 2 Million IOPS and 20 GB/s Throughput on a Single All-Flash K2 Storage System". http://www.storagereview.com/kaminario_boasts_over_2_million_iops_and_20_gb_s_throughput_on_a_single_allflash_k2_storage_system.

- ↑ Mellor, Chris (2012-07-30). "Million-plus IOPS: Kaminario smashes IBM in DRAM decimation". The Register. https://www.theregister.co.uk/2012/07/30/kaminario_spc_1/.

- ↑ "Storage Performance Council: Active SPC-1 Results". Storage Performance Council. http://www.storageperformance.org/results/benchmark_results_spc1/#kaminario_spc1.

- ↑ "SpecSFS2008". http://www.spec.org/sfs2008/results/res2011q4/sfs2008-20111017-00199.html.

- ↑ "Rack-Scale Flash Appliance - DSSD D5 EMC". EMC. https://www.emc.com/en-us/storage/flash/dssd/dssd-d5/index.htm.

- ↑ "Dell kills off standalone DSSD D5, scatters remains into other gear". https://www.theregister.co.uk/2017/03/02/dell_cans_standalone_dssd/.
