Dual process theory (moral psychology)

Topic: Philosophy

From HandWiki - Reading time: 25 min

Dual process theory within moral psychology is an influential theory of human moral judgement that posits that human beings possess two distinct cognitive subsystems that compete in moral reasoning processes: one fast, intuitive and emotionally driven, the other slow, requiring conscious deliberation and a higher cognitive load. Initially proposed by Joshua Greene along with Brian Sommerville, Leigh Nystrom, John Darley, Jonathan David Cohen and others,[1][2][3] the theory can be seen as a domain-specific example of more general dual process accounts in psychology, such as Daniel Kahneman's "System 1"/"System 2" distinction popularised in his book Thinking, Fast and Slow. Greene has often emphasized the normative implications of the theory,[4][5][6] which has started an extensive debate in ethics.[7][8][9][10]

The dual-process theory has had significant influence on research in moral psychology. The original fMRI investigation[1] proposing the dual process account has been cited in more than 2000 scholarly articles, generating extensive use of similar methodology as well as criticism.

Core commitments

The dual-process theory of moral judgement asserts that moral decisions are the product of one of two distinct mental processes.

- The automatic-emotional process is fast and unconscious, and gives rise to intuitive behaviours and judgments. The factors affecting moral judgment of this type may be consciously inaccessible.[11]

- The conscious-controlled process involves slow and deliberative reasoning. Moral judgments of this type are less influenced by the immediate emotional features of decision-making. Instead, they may draw from general knowledge and abstract moral conceptions, combined with a more controlled analysis of situational features.

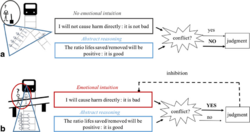

Following neuroscientific experiments, in which subjects were confronted with ethical dilemmas following the logic of Philippa Foot's famous Trolley Case (see Figure 1), Joshua Greene claims that the two processes can be linked to two classes of ethical theories respectively.[6]

He calls this the Central Tension Principle: Moral judgments that can be characterised as deontological are preferentially supported by automatic-emotional processes and intuitions. Characteristically utilitarian judgments, on the other hand, seem to be supported by conscious-controlled processes and deliberative reasoning.[6]

Camera analogy

As an illustration of his dual-process theory of moral reasoning, Greene compares the dual-process in human brains to a digital SLR camera which operates in two complementary modes: automatic and manual.[6] A photographer can either employ the automatic "point-and-shoot" setting, which is fast and highly efficient, or adjust and refine settings in manual mode, which gives the photographer greater flexibility.

Dual-process moral reasoning is an effective response to a similar efficiency-flexibility trade-off. We often rely on our "automatic settings" and allow intuitions to guide our behaviour and judgement. In "manual mode", judgments draw from both general knowledge about "how the world works" and explicit understanding of special situational features. The operations of this "manual mode" system require effortful conscious deliberation.[6]

Greene concedes that his analogy has limited force. While a photographer can switch back and forth between automatic and manual mode, the automatic-intuitive processes of human reasoning are always active: conscious deliberation needs to "override" our intuitions. In addition, the automatic settings of our brains are not necessarily "hard-wired", but can be changed through (cultural) learning.[6]

Interaction between systems

There is a lack of agreement on whether and how the two processes interact with one another.[12][13][14][15] It is unclear whether deontological responders, for example, rely blindly on the intuitively cued response without any thought of utilitarian considerations or whether they recognise the alternative utilitarian response but, on consideration, decide against it. These alternative interpretations point to different models of interaction: a serial (or "default-interventionist") model, and a parallel model.[16]

Serial models assume that there is initially an exclusive focus on the intuitive system to make judgements but that this default processing might be followed by deliberative processing at a later stage. Greene et al.'s model is usually placed within this category.[14][15] In contrast, in a parallel model, it is assumed that both processes are simultaneously engaged from the beginning.[16]

Models of the former category lend support to the view that humans, in an effort to minimise cognitive effort, will choose to refrain from the more demanding deliberative system where possible. Only utilitarian responders will have opted into it. This further implies that deontological responders will not experience any conflict from the "utilitarian pull" of the dilemma: they have not engaged in the processing that gives rise to these considerations in the first place. In contrast, in a parallel model both utilitarian and deontological responders will have engaged both processing systems. Deontological responders recognise that they face conflicting responses, but they do not engage in deliberative processing to a sufficient extent to enable them to override the intuitive (deontological) response.[16]

Within generic dual process research, some scientists have argued that serial and parallel models fail to capture the true nature of the interaction between dual process systems.[17][18] They contend that some operations that are commonly said to belong to the deliberative system can in fact also be cued by the intuitive system,[19] and we need to think about hybrid models in light of this evidence.[15] Hybrid models would lend support to the notion of a "utilitarian intuition" - a utilitarian response cued by the automatic, "emotion-driven" cognitive system.[15]

Scientific evidence

Neuroimaging

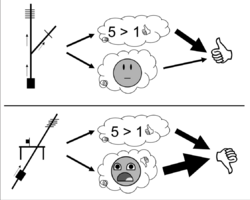

Greene uses fMRI to evaluate the brain activities and responses of people confronted with different variants of the famous Trolley problem in ethics.

Two versions of the trolley problem are used: the trolley driver dilemma and the footbridge dilemma, presented as follows.

The Switch Case: “You are at the wheel of a runaway trolley quickly approaching a fork in the tracks. On the tracks extending to the left is a group of five railway workmen. On the tracks extending to the right is a single railway workman. If you do nothing the trolley will proceed to the left, causing the deaths of the five workmen. The only way to avoid the deaths of these workmen is to hit a switch on your dashboard that will cause the trolley to proceed to the right, causing the death of the single workman. Is it appropriate for you to hit the switch in order to avoid the deaths of the five workmen?”[9] (Most people judge that it is appropriate to hit the switch in this case.)

The Footbridge Case: “A runaway trolley is heading down the tracks toward five workmen who will be killed if the trolley proceeds on its present course. You are on a footbridge over the tracks, in between the approaching trolley and the five workmen. Next to you on this footbridge is a stranger who happens to be very large. The only way to save the lives of the five workmen is to push this stranger off the bridge and onto the tracks below where his large body will stop the trolley. The stranger will die if you do this, but the five workmen will be saved. Is it appropriate for you to push the stranger onto the tracks in order to save the five workmen?”[9] (Most people judge that it is not appropriate to push the stranger onto the tracks.)

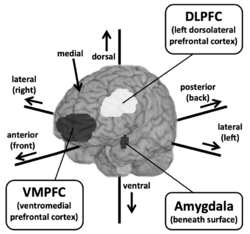

Greene[21] and his colleagues carried out fMRI experiments in order to investigate which regions of the brain were activated in subjects while responding to 'personal dilemmas' such as the footbridge dilemma and 'impersonal dilemmas' such as the switch dilemma. 'Personal dilemmas' were defined as those satisfying three conditions: a) the action in question could reasonably be expected to lead to bodily harm, b) the harm is inflicted on particular persons or members of a particular group, and c) the harm is not a result of diverting a previously existing threat onto another party. All other dilemmas were classed as 'impersonal'. It was observed that when responding to personal dilemmas, the subjects displayed increased activity in regions of the brain associated with emotion (the medial prefrontal cortex, the posterior cingulate cortex/precuneus, the posterior superior temporal sulcus/inferior parietal lobule and the amygdala), while when they responded to impersonal dilemmas, they displayed increased activity in regions of the brain associated with working memory (the dorsolateral prefrontal cortex and the parietal lobe). In recent work, Greene has stated that the amygdala is primarily responsible for the emotional response, whilst the ventromedial prefrontal cortex is responsible for weighing up the consequentialist response against the emotional response. Thus, three brain regions are primarily implicated in the making of moral judgements.[20] This gives rise to what Greene[6] calls the Central Tension Principle: "Characteristically deontological judgements are preferentially supported by automatic emotional responses, while characteristically consequentialist judgments are preferentially supported by conscious reasoning and allied processes of cognitive control".

Greene points to a large body of evidence from cognitive science suggesting that inclination to deontological or consequentialist judgment depends on whether emotional-intuitive reactions or more calculated ones were involved in the judgment-making process.[6] For example, encouraging deliberation or removing time pressure leads to an increase in consequentialist response. Being under cognitive load while making a moral judgement decreases consequentialist responses.[22] In contrast, solving a difficult math problem before making a moral judgement (meant to make participants more skeptical of their intuitions) increases the number of consequentialist responses. When asked to explain or justify their responses, subjects preferentially chose consequentialist principles – even for explaining characteristically deontological responses. Further evidence shows that consequentialist responses to trolley-problem-like dilemmas are associated with deficits in emotional awareness in people with alexithymia or psychopathic tendencies.[22] On the other hand, subjects being primed to be more emotional or empathetic give more characteristically deontological answers.

In addition, Greene's results show that some brain areas, such as the medial prefrontal cortex, the posterior cingulate/precuneus, the posterior superior temporal sulcus/inferior parietal lobe, and the amygdala, are associated with emotional processes. Subjects exhibited increased activity in these brain regions when presented with situations involving the use of personal force (e.g. the 'footbridge' case). The dorsolateral prefrontal cortex and the parietal lobe are 'cognitive' brain regions; subjects show increased activity in these two regions when presented with impersonal moral dilemmas.[6]

Arguments for the dual process theory relying on neuroimaging data have been criticized for their reliance on reverse inference.[23][24]

Brain lesions

Neuropsychological evidence from lesion studies focusing on patients with damage to the ventromedial prefrontal cortex also points to a possible dissociation between emotional and rational decision processes. Damage to this area is typically associated with antisocial personality traits and impairments of moral decision making.[25] Patients with these lesions tend to show a more frequent endorsement of the "utilitarian" path in trolley problem dilemmas.[26] Greene et al. claim that this shows that when emotional information is removed through context or damage to brain regions necessary to render such information, the process associated with rational, controlled reasoning dominates decision making.[27]

A popular medical case, studied in particular by neuroscientist Antonio Damasio,[28] was that of American railroad worker Phineas Gage. On the 13th of September 1848, while working on a railway track in Vermont, he was involved in an accident: an "iron rod used to cram down the explosive powder shot into Gage's cheek, went through the front of his brain, and exited via the top of his head".[29] Surprisingly, Gage not only survived but also went back to his normal life in less than two months.[28] Although his physical capacities were restored, his personality and his character radically changed. He became vulgar and anti-social: "Where he had once been responsible and self-controlled, now he was impulsive, capricious, and unreliable".[29] Damasio wrote: "Gage was no longer Gage."[28] Moreover, his moral intuitions were also transformed. Further studies by means of neuroimaging showed a correlation between such "moral" and character transformations and injuries to the ventromedial prefrontal cortex.[30]

In his book Descartes' Error, commenting on the Phineas Gage case, Damasio said that after the accident the railroad worker was able "To know, but not to feel."[28] As explained by David Edmonds, Joshua Greene thought that this could explain the difference in moral intuitions in different versions of the trolley problem: "We feel that we shouldn't push the fat man. But we think it better to save five rather than one life. And the feeling and the thought are distinct."[29]

Reaction times

Another critical piece of evidence supporting the dual process account comes from reaction time data associated with moral dilemma experiments. Subjects who choose the "utilitarian" path in moral dilemmas showed increased reaction times under high cognitive load in "personal" dilemmas, while those choosing the "deontological" path remained unaffected.[31] Cognitive load, in general, is also found to increase the likelihood of "deontological" judgment.[32] These laboratory findings are supplemented by work that looks at the decision-making processes of real-world altruists in life-or-death situations.[33] These heroes overwhelmingly described their actions as fast and intuitive, and virtually never as carefully reasoned.

Evolutionary rationale

The dual process theory is often given an evolutionary rationale (in this basic sense, the theory is an example of evolutionary psychology).

In pre-Darwinian thinking, such as Hume's ‘Treatise of Human Nature’, we find speculations about the origins of morality as deriving from natural phenomena common to all humans. For instance, he mentions the "common or natural cause of our passions" and the generation of love for others represented through self-sacrifice for the greater good of the group. Hume's work is sometimes cited as an inspiration for contemporary dual process theories.[8]

Darwin's evolutionary theory gives a better descriptive process for how these moral norms are derived from evolutionary processes and natural selection.[8] For example, selective pressures favour self-sacrifice for the benefit of the group and punish those who do not. This provides a better explanation of the cost-benefit ratio for the generation of love for others as originally mentioned by Hume.

Another example of an evolutionarily derived norm is justice, which is born out of the ability to detect those who cheat. Peter Singer explains justice from the evolutionary perspective by stating that the instinct of reciprocity improved fitness for survival, and that those who did not reciprocate were therefore considered cheaters and cast off from the group.[8]

Peter Singer agrees with Greene that consequentialist judgements are to be favored over deontological judgements. According to him, moral constructivism searches for reasonable grounds whereas deontological judgements rely on hasty and emotional responses.[8] [failed verification] Singer argues our most immediate moral intuitions should be challenged. A normative ethic must not be evaluated by the extent to which it matches those moral intuitions. He gives the example of a brother and sister who secretly decide to have sex with each other using contraceptives. Our first intuitive reaction is a firm condemnation of incest as morally wrong. However, a consequentialist judgement brings another conclusion. As the brother and sister did not tell anyone and they used contraceptives, the incest did not have any harmful consequences. Thus, in that case, incest is not necessarily wrong.[8]

Singer relies on evolutionary theories to justify his claim. For most of our evolutionary history, human beings have lived in small groups where violence was ubiquitous. Deontological judgements linked to emotional and intuitive responses were developed by human beings as they were confronted with personal and close interactions with others. In the past century, our social organizations were altered and so these types of interactions have become less frequent. Therefore, Singer argues that we should rely on more sophisticated consequentialist judgements that fit better in our modern times than the deontological judgements that were useful for more rudimentary interactions.[8]

Scientific criticisms

Several scientific criticisms have been leveled against the dual process account. One asserts that the dual emotional/rational model ignores the motivational aspect of decision making in human social contexts.[34][35] A more specific example of this criticism focuses on the ventromedial prefrontal cortex lesion data. Although patients with this damage display characteristically "cold-blooded" behaviour in the trolley problem, they show more likelihood of endorsement of emotionally laden choices in the Ultimatum Game.[36] It is argued that moral decisions are better understood as integrating emotional, rational, and motivational information, the last of which has been shown to involve areas of the brain in the limbic system and brain stem.[37]

Methodological worries

Other criticisms focus on the methodology of using moral dilemmas such as the trolley problem. These criticisms note the lack of affective realism in contrived moral dilemmas and their tendency to use the actions of strangers to offer a view of human moral sentiments. Paul Bloom, in particular, has argued that a multitude of attitudes towards the agents involved is important in evaluating an individual's moral stance, as well as evaluating the motivations that may inform those decisions.[38] Kahane and Shackel scrutinize the questions and dilemmas Greene et al. use, and claim that the methodology used in the neuroscientific study of intuitions needs to be improved.[39] However, after Kahane and colleagues engineered a set of moral dilemmas specifically meant to falsify Greene's theory, their moral dilemmas turned out to confirm it instead.[6]

Berker has raised three methodological worries about Greene's empirical findings.[9] First, it is not the case that only deontological judgements are tied to emotional processes. In fact, one region of the brain traditionally associated with the emotions, the posterior cingulate, appears to be activated for characteristically consequentialist judgements. While it is not clear how crucial a role this region plays in moral judgements, one can argue that all moral judgements seem to involve at least some emotional processing. This would disprove the simplest version of the dual-process hypothesis. Greene responded to this argument by proposing that emotions that drive deontological judgements are "alarmlike", whereas those that are present during consequentialist judgements are "more like currency",[40] a response which Berker regards as lacking empirical backing.[9]

Berker's second methodological worry is that Greene et al. presented the response-time data to moral dilemmas in a statistically invalid way. Rather than calculating the average difference in response time between the “appropriate” responses and “inappropriate” responses for each moral dilemma, Greene et al. calculated the average response time of the combined “appropriate” and the combined “inappropriate” responses. Because of this way of calculating, the differences from question to question significantly skewed the results. Berker points out that some questions involved “easy” cases that should not be classified as dilemmas: because of the way these cases were framed, people found one of the choices to be obviously inappropriate.
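The difference between the two ways of averaging can be illustrated with a small numerical sketch. The response times below are invented purely for illustration and are not taken from Greene et al. or from Berker; the function names `pooled_difference` and `per_dilemma_difference` are likewise hypothetical. The sketch assumes one "easy" case in which almost everyone quickly answers "inappropriate" and one hard case in which almost everyone slowly answers "appropriate".

```python
from statistics import mean

# Hypothetical response times in seconds (invented for illustration only).
RESPONSES = {
    "easy_case": {"appropriate": [2.5], "inappropriate": [2.0, 2.0, 2.0, 2.0]},
    "hard_case": {"appropriate": [6.0, 6.0, 6.0, 6.0], "inappropriate": [6.5]},
}


def pooled_difference(data):
    """Pool all responses across dilemmas, then compare the two overall means."""
    appropriate = [rt for d in data.values() for rt in d["appropriate"]]
    inappropriate = [rt for d in data.values() for rt in d["inappropriate"]]
    return mean(appropriate) - mean(inappropriate)


def per_dilemma_difference(data):
    """Compute the difference within each dilemma, then average the differences."""
    differences = [
        mean(d["appropriate"]) - mean(d["inappropriate"]) for d in data.values()
    ]
    return mean(differences)


if __name__ == "__main__":
    print("pooled difference:", round(pooled_difference(RESPONSES), 2))
    print("mean per-dilemma difference:", round(per_dilemma_difference(RESPONSES), 2))
```

With these made-up numbers, the pooled comparison suggests that "appropriate" responses are about 2.4 seconds slower, even though the average within-dilemma difference is zero. The pooled gap arises only because the quickly answered easy case contributes mostly "inappropriate" responses, which is the kind of distortion the criticism describes.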

Third, Berker argues that Greene's criteria for classifying impersonal and personal moral dilemmas do not map onto the distinction between deontological and consequentialist moral judgements. It is not the case that consequentialist judgements arise only if cases involve impersonal factors. Berker highlights the “Lazy Susan Case” as a counter-example: the only way to save five people who sit on a Lazy Susan is to push the Lazy Susan into an innocent bystander, killing him. Although this thought experiment involves personal harm, the philosopher Frances Kamm arrives at an intuitive consequentialist judgement, thinking it is permissible to kill one to save five.

Notwithstanding the above, Greene has considered the latter criticism.

More recent methodological concerns stem from new evidence suggesting that deontological inclinations are not necessarily more emotional or less rational than utilitarian inclinations. For example, cognitive reflection predicts both utilitarian and deontological inclinations,[41] but only by dissociating these moral inclinations with a more advanced protocol that was not used in early dual process theoretic research.[42] Further, there is evidence that utilitarian decisions are associated with more emotional regret than deontological decisions.[43] Evidence like this complicates dual process theorists' claims that utilitarian thinking is more rational or that deontological thinking is more emotional.

Failure of the non-negotiability hypothesis

Guzmán, Barbato, Sznycer, and Cosmides pointed out that moral dilemmas were a recurrent adaptive problem for ancestral humans, whose social life created multiple responsibilities to others (siblings, parents and offspring, cooperative partners, coalitional allies, and so on). Intermediate solutions, ones that strike a balance between conflicting moral values, would have often promoted fitness better than neglecting one value to fully satisfy others. A capacity to make intermediate or “compromise” judgments would have been favored by natural selection.[44]

The dual process model, however, rules out the possibility of moral compromises. According to Greene and colleagues, people experience the footbridge problem as a dilemma because “two [dissociable psychological] processes yield different answers to the same question”.[45] On the one hand, System 2 outputs a utilitarian judgment: “push the bystander.” On the other hand, System 1 activates an “alarm bell” emotion: “do not harm the bystander.” Greene and colleagues claim that the prohibition to harm is “nonnegotiable”: It cannot be weighed against other values, including utilitarian considerations.[5][45] They argue that “[i]ntractable dilemmas arise when psychological systems produce outputs that are… non-negotiable because their outputs are processed as absolute demands, rather than fungible preferences.”[45] The dual-process model predicts that subtle changes in context will cause people to flip between extreme judgments: deontic and utilitarian. Moral compromises will be infrequent “trembling hand mistakes.”[44]

Trolley problems cannot be used to test for non-negotiability, because they force extreme responses (e.g., push or do not push).[44] So, to test the prediction, Guzmán and colleagues designed a sacrificial moral dilemma that permits compromise judgments, of the form “sacrifice x people to save y”, where N > 1 people are saved for each one sacrificed. Their experimental results show that people make many compromise judgments, which respect the axioms of rational choice. These results contradict the dual-process model, which claims that only utilitarian judgments are the product of moral rationality. The results indicate the existence of a moral tradeoff system that weighs competing moral considerations and finds a solution that is most right, which can be a compromise judgment.

Alleged ethical implications

Greene ties the two processes to two existing classes of ethical theories in moral philosophy.[5] He argues that the existing tension between deontological theories of ethics that focus on "right action" and utilitarian theories that focus on "best results" can be explained by the dual-process organisation of the human mind. Ethical decisions that fall under 'right action' correspond to automatic-emotional (system 1) processing, whereas 'best results' correspond to conscious-controlled reasoning (system 2).

One illustration of this tension is the way intuitions about trolley cases differ along the dimension of personal force.[6] When people are asked whether it would be right or wrong to flick a switch in order to divert a trolley from killing five people, their intuitions usually indicate that flicking the switch is the morally right choice. However, when the same scenario is presented to people, but instead of flicking a switch, subjects are asked whether they would push a fat man onto the rails in order to stop the trolley, intuitions usually have it that pushing the fat man is the wrong choice. Given that both actions lead to the saving of five people, why is one judged to be right and the other wrong? According to Greene, there is no moral justification for this difference of intuitions between the 'switch' and the 'fat man' trolley cases. Instead, what leads to the difference is the morally irrelevant fact that the 'fat man' case involves the use of personal force (thus leading most people to judge that pushing the fat man is the wrong action), whereas the 'switch' case does not (thus leading most people to judge that flicking the switch is the right action).

Greene takes such observations as a point of departure to argue that judgments produced by automatic-emotional processes lack normative force in comparison to those produced by conscious-controlled processes. Relying on automatic, emotional responses when dealing with unfamiliar moral dilemmas would mean banking on "cognitive miracles".[6] Greene subsequently proposes that this vindicates consequentialism. He rejects deontology as a moral framework and holds that deontological theories may be reduced to "post-hoc" rationalisations of arbitrary emotional responses.[5]

Greene's "direct route"

Greene first argues that scientific findings can help us reach interesting normative conclusions, without crossing the is–ought gap. For example, he considers the normative statement "capital juries make good judgements". If we accept the uncontroversial normative premise that capital juries ought not to be sensitive to race, scientific findings could lead us to revise this judgement if it were found that capital juries were, in fact, sensitive to race.[6]

Greene then states that the evidence for dual-process theory might give us reason to question judgements which are based upon moral intuitions, in cases where those moral intuitions might be based upon morally irrelevant factors. He gives the example of incestuous siblings. Intuition might tell us that this is morally wrong, but Greene suggests that this intuition is the result of incest historically being evolutionarily disadvantageous. However, if the siblings take extreme precautions, such as vasectomy, in order to avoid the risk of genetic mutation in their offspring, the cause of the moral intuition is no longer relevant. In such cases, scientific findings have given us reason to ignore some of our moral intuitions, and in turn revise the moral judgements which are based upon these intuitions.[6]

Greene's "indirect route"

Greene is not making the claim that moral judgements based on emotion are categorically bad. His position is that the different “settings” are appropriate for different scenarios.

With regard to automatic settings, Greene says we should only rely on these when faced with a moral problem that is sufficiently “familiar” to us. Familiarity, on Greene's conception, can arise from three sources: evolutionary history, culture and personal experience. It is possible that fear of snakes, for instance, can be traced to genetic dispositions, whereas a reluctance to place one's hand on a stove is caused by previous experience of burning one's hand on a hot stove.[6]

The appropriateness of applying our intuitive and automatic mode of reasoning to a given moral problem thus hinges on how the process was formed in the first place. Shaped by trial-and-error experience, automatic settings will only function well when one has sufficient experience of the situation at hand.

In light of these considerations, Greene formulates the "No Cognitive Miracles Principle":[6]

When we are dealing with unfamiliar* moral problems, we ought to rely less on automatic settings (automatic emotional responses) and more on manual mode (conscious, controlled reasoning), lest we bank on cognitive miracles.

This has implications for philosophical discussion of what Greene calls "unfamiliar problems", or ethical problems with which we have inadequate evolutionary, cultural, or personal experience. We might have to attentively revise our intuitions for topics like climate change, genetic engineering, global terrorism, global poverty, etc. As Greene states, this doesn't mean that our intuitions will always be wrong, but it means we need to pay attention to where they come from and how they fare compared to more rational argument.[6]

Philosophical criticisms

Greene's 2008 article "The Secret Joke of Kant's Soul"[46] argues that Kantian/deontological ethics tends to be driven by emotional responses and is best understood as rationalization rather than rationalism, that is, as an attempt to justify intuitive moral judgments post hoc, although the author states that his argument is speculative and will not be conclusive. Several philosophers have written critical responses, mainly criticising the supposedly necessary link between process (automatic or controlled, intuitive or counterintuitive/rational) and content (deontological or utilitarian, respectively).[47][48][49][50]

Thomas Nagel has argued that Joshua Greene, in his book Moral Tribes, is too quick to conclude utilitarianism specifically from the general goal of constructing an impartial morality; for example, he says, Immanuel Kant and John Rawls offer other impartial approaches to ethical questions.[51][irrelevant citation]

Robert Wright has called Joshua Greene's proposal for global harmony ambitious, adding, "I like ambition!"[52] But he also claims that people have a tendency to see facts in a way that serves their ingroup, even if there's no disagreement about the underlying moral principles that govern the disputes. "If indeed we're wired for tribalism," Wright explains, "then maybe much of the problem has less to do with differing moral visions than with the simple fact that my tribe is my tribe and your tribe is your tribe. Both Greene and Paul Bloom cite studies in which people were randomly divided into two groups and immediately favored members of their own group in allocating resources -- even when they knew the assignment was random."[52] Instead, Wright proposes that "nourishing the seeds of enlightenment indigenous to the world's tribes is a better bet than trying to convert all the tribes to utilitarianism -- both more likely to succeed, and more effective if it does."[52]

Berker's criticisms

In a widely cited critique of Greene's work and the philosophical implications of the dual process theory, Harvard philosophy professor Selim Berker critically analyzed four arguments that might be inferred from Greene and Singer's conclusion.[9] He labels three of them as merely rhetoric or "bad arguments", and the last one as "the argument from irrelevant factors".[9] According to Berker, all of them are fallacious.

Three bad arguments

While the three bad arguments identified by Berker are not explicitly made by Greene and Singer, Berker considers them implicit in their reasoning.

The first is the "Emotions Bad, Reasoning Good" argument. According to it, our deontological intuitions are driven by emotions, while consequentialist intuitions involve abstract reasoning. Therefore, deontological intuitions do not have any normative force, whereas consequentialist intuitions do. Berker claims that this is question-begging for two reasons. First, there is no support for the claim that emotionally driven intuitions are less reliable than those guided by reason. Second, the argument seems to rely on the assumption that deontological intuitions involve only emotional processes whereas consequentialist intuitions involve only abstract reasoning. For Berker, this assumption also lacks empirical evidence. In fact, Greene's own research[2] shows that consequentialist responses to personal moral dilemmas involve at least one brain region, the posterior cingulate, that is associated with emotional processes. Hence, the claim that deontological judgements are less reliable than consequentialist judgements because they are influenced by emotions cannot be justified.

The second bad argument presented by Berker is "The Argument from Heuristics", which is an improved version of the "Emotions Bad, Reasoning Good" argument. It claims that emotion-driven processes tend to involve fast heuristics, which makes them unreliable. It follows that deontological intuitions, being themselves an emotional form of reasoning, should not be trusted. According to Berker, this line of thought is also flawed, because the forms of reasoning that consist in heuristics are usually those in which we have a clear notion of what is right and wrong. Hence, in the moral domain, where these notions are highly disputed, "it is question begging to assume that the emotional processes underwriting deontological intuitions consist in heuristics".[9] Berker also challenges the very assumption that heuristics lead to unreliable judgements. Additionally, he argues that, as far as we know, consequentialist judgements may also rely on heuristics, given that it is highly unlikely that they could always be the product of accurate and comprehensive mental calculations of all the possible outcomes.

The third bad argument is "The Argument from Evolutionary History". It draws on the idea that our different moral responses towards personal and impersonal harms are evolutionarily based. On this view, because personal violence has existed since ancient times, humans developed emotional responses as innate alarm systems in order to adapt to, handle and promptly respond to such situations of violence within their groups. Cases of impersonal violence, by contrast, do not raise the same innate alarm and therefore leave room for a more accurate and analytical judgement of the situation. Thus, according to this argument, emotion-based deontological intuitions, unlike consequentialist intuitions, are side effects of this evolutionary adaptation to the pre-existing environment. Therefore, "deontological intuitions, unlike consequentialist intuitions, do not have any normative force".[9] Berker states that this is an incorrect conclusion because there is no reason to think that consequentialist intuitions are not also by-products of evolution.[9] Moreover, he argues that the invitation, advanced by Singer,[8] to separate evolutionary-based moral judgements (allegedly unreliable) from those that are based on reason is misleading because it rests on a false dichotomy.

The Argument from Morally Irrelevant Factors

Berker argued that the most promising argument from neural "is" to moral "ought" is the following.[9]

“P1. The emotional processing that gives rise to deontological intuitions responds to factors that make a dilemma personal rather than impersonal.

P2. The factors that make a dilemma personal rather than impersonal are morally irrelevant.

C1. So, the emotional processing that gives rise to deontological intuitions responds to factors that are morally irrelevant.

C2. So, deontological intuitions, unlike consequentialist intuitions, do not have any genuine normative force.”

Berker criticises both premises and the move from C1 to C2. Regarding P1, Berker is not convinced that deontological judgments are correctly characterized as merely appealing to factors that make the dilemma personal. For example, Kamm's[53] 'Lazy Susan' trolley case is a 'personal' dilemma which elicits a characteristically consequentialist response. Regarding P2, he argues that factors that make a dilemma personal or impersonal are not necessarily morally irrelevant. Moreover, he adds, P2 is 'armchair philosophizing': it cannot be deduced from neuroscientific results whether the closeness of a dilemma bears on its moral relevance.[9] Finally, Berker concludes that even if we accept P1 and P2, C1 does not necessarily entail C2. This is because it may be the case that consequentialist intuitions also respond to morally irrelevant factors. Unless we can show that this is not the case, the inference from C1 to C2 is invalid.

Intuition as wisdom

Many philosophers appeal to what is colloquially known as the yuck factor, or the belief that a widespread common negative intuition towards something is evidence that there is something morally wrong about it. This opposes Greene's conclusion that intuitions should not be expected to "perform well" or give us good ethical reasoning for some ethical problems. Leon Kass's Wisdom of Repugnance presents a prime example of a feelings-based response to an ethical dilemma. Kass attempts to make a case against human cloning on the basis of the widespread strong feelings of repugnance at cloning. He lists examples of the various unpalatable consequences of cloning and appeals to notions of human nature and dignity to show that our disgust is the emotional expression of deep wisdom that is not fully articulable.[54]

There is a widespread debate in philosophy on the role of moral emotions, such as guilt or empathy, and on the relationship of intuitions to them.[55]

The role of empathy

In particular, the role of empathy in morality has recently been heavily criticized by commentators such as Jesse Prinz, who describes it as "prone to biases that render moral judgment potentially harmful."[56] Similarly, Paul Bloom, author of 'Against Empathy: The Case for Rational Compassion', labels empathy as "narrow-minded, parochial, and innumerate",[57] primarily because of the deleterious effects that can arise when emotional, unreasoned responses are entrusted with tackling complex ethical issues, which can only be adequately addressed via rationality and reflection.

An example of this is the 'identifiable victim effect', where subjects exhibit a much stronger emotional reaction to the suffering of a known victim than to the suffering of a large-scale, anonymous group (even though the benefit conferred by the subject would be of equal utility in both cases).

This exemplifies the potential for empathy to 'misfire' and motivates the widely shared consensus in the moral enhancement debate that more is required than the amplification of certain emotions. Increasing an agent's empathy by artificially raising oxytocin levels will likely be ineffective in improving their overall moral agency, because such a disposition relies heavily on psychological, social and situational contexts, as well as their deeply held convictions and beliefs.[58] Rather,

"it is likely that augmenting higher-order capacities to modulate one's moral responses in a flexible, reason-sensitive, and context-dependent way would be a more reliable, and in most cases more desirable, means to agential moral enhancement."[58]

References

- ↑ 1.0 1.1 "An fMRI investigation of emotional engagement in moral judgment". Science 293 (5537): 2105–8. September 2001. doi:10.1126/science.1062872. PMID 11557895. Bibcode: 2001Sci...293.2105G.

- ↑ 2.0 2.1 "The neural bases of cognitive conflict and control in moral judgment". Neuron 44 (2): 389–400. October 2004. doi:10.1016/j.neuron.2004.09.027. PMID 15473975.

- ↑ "The rat-a-gorical imperative: Moral intuition and the limits of affective learning". Cognition 167: 66–77. October 2017. doi:10.1016/j.cognition.2017.03.004. PMID 28343626.

- ↑ "From neural 'is' to moral 'ought': what are the moral implications of neuroscientific moral psychology?". Nature Reviews. Neuroscience 4 (10): 846–9. October 2003. doi:10.1038/nrn1224. PMID 14523384.

- ↑ 5.0 5.1 5.2 5.3 Greene, Joshua D. (2008). Sinnott-Armstrong, W.. ed. "The Secret Joke of Kant's Soul". Moral Psychology: The Neuroscience of Morality (Cambridge, MA: MIT Press): 35–79.

- ↑ 6.00 6.01 6.02 6.03 6.04 6.05 6.06 6.07 6.08 6.09 6.10 6.11 6.12 6.13 6.14 6.15 6.16 Greene, Joshua D. (2014-07-01). "Beyond Point-and-Shoot Morality: Why Cognitive (Neuro)Science Matters for Ethics". Ethics 124 (4): 695–726. doi:10.1086/675875. ISSN 0014-1704.

- ↑ Railton, Peter (July 2014). "The Affective Dog and Its Rational Tale: Intuition and Attunement". Ethics 124 (4): 813–859. doi:10.1086/675876. ISSN 0014-1704.

- ↑ 8.0 8.1 8.2 8.3 8.4 8.5 8.6 8.7 Singer, Peter (October 2005). "Ethics and Intuitions". The Journal of Ethics 9 (3–4): 331–352. doi:10.1007/s10892-005-3508-y.

- ↑ 9.00 9.01 9.02 9.03 9.04 9.05 9.06 9.07 9.08 9.09 9.10 9.11 Berker, Selim (September 2009). "The Normative Insignificance of Neuroscience". Philosophy & Public Affairs 37 (4): 293–329. doi:10.1111/j.1088-4963.2009.01164.x. ISSN 0048-3915. http://nrs.harvard.edu/urn-3:HUL.InstRepos:4391332.

- ↑ Bruni, Tommaso; Mameli, Matteo; Rini, Regina A. (2013-08-25). "The Science of Morality and its Normative Implications". Neuroethics 7 (2): 159–172. doi:10.1007/s12152-013-9191-y. https://kclpure.kcl.ac.uk/ws/files/45517552/neuromoral.pdf.

- ↑ "The role of conscious reasoning and intuition in moral judgment: testing three principles of harm". Psychological Science 17 (12): 1082–9. December 2006. doi:10.1111/j.1467-9280.2006.01834.x. PMID 17201791.

- ↑ Gürçay, Burcu; Baron, Jonathan (2017-01-02). "Challenges for the sequential two-system model of moral judgement". Thinking & Reasoning 23 (1): 49–80. doi:10.1080/13546783.2016.1216011. ISSN 1354-6783.

- ↑ Białek, Michał; Neys, Wim De (2016-07-03). "Conflict detection during moral decision-making: evidence for deontic reasoners' utilitarian sensitivity". Journal of Cognitive Psychology 28 (5): 631–639. doi:10.1080/20445911.2016.1156118. ISSN 2044-5911.

- ↑ 14.0 14.1 Koop, Gregory J. (2013). "An assessment of the temporal dynamics of moral decisions". Judgment and Decision Making 8 (5): 527–539. doi:10.1017/S1930297500003636.

- ↑ 15.0 15.1 15.2 15.3 De Neys, W, Bialek, M (2017). "Dual processes and moral conflict: Evidence for deontological reasoners' intuitive utilitarian sensitivity". Judgment and Decision Making 12 (2): 148–167. doi:10.1017/S1930297500005696. http://journal.sjdm.org/vol12.2.html.

- ↑ 16.0 16.1 16.2 Białek, Michał; De Neys, Wim (2016-03-02). "Conflict detection during moral decision-making: evidence for deontic reasoners' utilitarian sensitivity". Journal of Cognitive Psychology 28 (5): 631–639. doi:10.1080/20445911.2016.1156118. ISSN 2044-5911.

- ↑ Handley, Simon J.; Trippas, Dries (2015), "Dual Processes and the Interplay between Knowledge and Structure: A New Parallel Processing Model", Psychology of Learning and Motivation (Elsevier): pp. 33–58, doi:10.1016/bs.plm.2014.09.002, ISBN 9780128022733

- ↑ De Neys, Wim (January 2012). "Bias and Conflict". Perspectives on Psychological Science 7 (1): 28–38. doi:10.1177/1745691611429354. ISSN 1745-6916. PMID 26168420.

- ↑ Byrd, Nick; Joseph, Brianna; Gongora, Gabriela; Sirota, Miroslav (2023). "Tell Us What You Really Think: A Think Aloud Protocol Analysis of the Verbal Cognitive Reflection Test". Journal of Intelligence 11 (4): 76. doi:10.3390/jintelligence11040076. PMID 37103261.

- ↑ 20.0 20.1 20.2 Greene, Joshua (2014). Moral Tribes: Emotion, Reason, and the Gap Between Us and Them. Penguin. https://archive.org/details/moraltribesemoti0000gree.

- ↑ Greene, J. D. (2001-09-14). "An fMRI Investigation of Emotional Engagement in Moral Judgment". Science 293 (5537): 2105–2108. doi:10.1126/science.1062872. ISSN 0036-8075. PMID 11557895. Bibcode: 2001Sci...293.2105G.

- ↑ 22.0 22.1 "Beyond point-and-shoot morality: Why cognitive (neuro) science matters for ethics". The Law & Ethics of Human Rights 9 (2): 141–72. November 2015. doi:10.1515/lehr-2015-0011.

- ↑ Poldrack, R (February 2006). "Can cognitive processes be inferred from neuroimaging data?". Trends in Cognitive Sciences 10 (2): 59–63. doi:10.1016/j.tics.2005.12.004. PMID 16406760. https://hal-imt.archives-ouvertes.fr/hal-01699045/file/ccn-2017.pdf.

- ↑ Klein, Colin (5 June 2010). "The Dual Track Theory of Moral Decision-Making: a Critique of the Neuroimaging Evidence". Neuroethics 4 (2): 143–162. doi:10.1007/s12152-010-9077-1.

- ↑ "Behavioral effects of congenital ventromedial prefrontal cortex malformation". BMC Neurology 11: 151. December 2011. doi:10.1186/1471-2377-11-151. PMID 22136635.

- ↑ "Damage to the prefrontal cortex increases utilitarian moral judgements". Nature 446 (7138): 908–11. April 2007. doi:10.1038/nature05631. PMID 17377536. Bibcode: 2007Natur.446..908K.

- ↑ "Why are VMPFC patients more utilitarian? A dual-process theory of moral judgment explains". Trends in Cognitive Sciences 11 (8): 322–3; author reply 323–4. August 2007. doi:10.1016/j.tics.2007.06.004. PMID 17625951.

- ↑ 28.0 28.1 28.2 28.3 Damasio, Antonio (1994). Descartes' Error: Emotion, Reason, and the Human Brain. New York: Grosset/Putnam. ISBN 9780399138942. https://archive.org/details/descarteserrorem00dama.

- ↑ 29.0 29.1 29.2 Edmonds, David (2014). Would You Kill the Fat Man? The Trolley Problem and What Your Answer Tells Us about Right and Wrong. Princeton, NJ: Princeton University Press. pp. 137–139.

- ↑ Singer, Peter (2005). "Ethics and Intuitions". The Journal of Ethics 9 (3–4): 331–352. doi:10.1007/s10892-005-3508-y.

- ↑ "Cognitive load selectively interferes with utilitarian moral judgment". Cognition 107 (3): 1144–54. June 2008. doi:10.1016/j.cognition.2007.11.004. PMID 18158145.

- ↑ "Mortality salience and morality: thinking about death makes people less utilitarian". Cognition 124 (3): 379–84. September 2012. doi:10.1016/j.cognition.2012.05.011. PMID 22698994.

- ↑ "Risking your life without a second thought: intuitive decision-making and extreme altruism". PLOS ONE 9 (10): e109687. 2014. doi:10.1371/journal.pone.0109687. PMID 25333876. Bibcode: 2014PLoSO...9j9687R.

- ↑ "The neural basis of moral cognition: sentiments, concepts, and values". Annals of the New York Academy of Sciences 1124 (1): 161–80. March 2008. doi:10.1196/annals.1440.005. PMID 18400930. Bibcode: 2008NYASA1124..161M.

- ↑ "Moral judgment, human motivation, and neural networks". Cognitive Computation 5 (4): 566–79. December 2013. doi:10.1007/s12559-012-9181-0.

- ↑ "Irrational economic decision-making after ventromedial prefrontal damage: evidence from the Ultimatum Game". The Journal of Neuroscience 27 (4): 951–6. January 2007. doi:10.1523/JNEUROSCI.4606-06.2007. PMID 17251437.

- ↑ "Response to Greene: Moral sentiments and reason: friends or foes?". Trends in Cognitive Sciences 11 (8): 323–4. August 2007. doi:10.1016/j.tics.2007.06.011.

- ↑ "Family, community, trolley problems, and the crisis in moral psychology". The Yale Review 99 (2): 26–43. 2011. doi:10.1111/j.1467-9736.2011.00701.x.

- ↑ "Methodological Issues in the Neuroscience of Moral Judgement". Mind & Language 25 (5): 561–582. November 2010. doi:10.1111/j.1468-0017.2010.01401.x. PMID 22427714.

- ↑ Greene, Joshua (2007). "The secret joke of Kant's Soul". in Sinnott-Armstrong, Walter. Big Moral Psychology. MIT Press. pp. 35–80.

- ↑ Byrd, N., & Conway, P. (2019). Not all who ponder count costs: Arithmetic reflection predicts utilitarian tendencies, but logical reflection predicts both deontological and utilitarian tendencies. Cognition, 192, 103995. https://doi.org/10.1016/j.cognition.2019.06.007

- ↑ Conway, P., & Gawronski, B. (2013). Deontological and utilitarian inclinations in moral decision making: A process dissociation approach. Journal of Personality and Social Psychology, 104(2), 216–235. https://doi.org/10.1037/a0031021

- ↑ Goldstein-Greenwood, J., Conway, P., Summerville, A., & Johnson, B. N. (2020). (How) Do You Regret Killing One to Save Five? Affective and Cognitive Regret Differ After Utilitarian and Deontological Decisions. Personality and Social Psychology Bulletin. https://doi.org/10.1177/0146167219897662

- ↑ 44.0 44.1 44.2 Guzmán, Ricardo Andrés; Barbato, María Teresa; Sznycer, Daniel; Cosmides, Leda (2022-10-18). "A moral trade-off system produces intuitive judgments that are rational and coherent and strike a balance between conflicting moral values" (in en). Proceedings of the National Academy of Sciences 119 (42): e2214005119. doi:10.1073/pnas.2214005119. ISSN 0027-8424. PMID 36215511. Bibcode: 2022PNAS..11914005G.

- ↑ 45.0 45.1 45.2 Cushman, Fiery; Greene, Joshua D. (May 2012). "Finding faults: How moral dilemmas illuminate cognitive structure" (in en). Social Neuroscience 7 (3): 269–279. doi:10.1080/17470919.2011.614000. ISSN 1747-0919. PMID 21942995. http://www.tandfonline.com/doi/abs/10.1080/17470919.2011.614000.

- ↑ https://psycnet.apa.org/record/2007-14534-005

- ↑ Lott, Micah (October 2016). "Moral Implications from Cognitive (Neuro)Science? No Clear Route". Ethics 127 (1): 241–256. doi:10.1086/687337. https://philarchive.org/rec/LOTMIF.

- ↑ Königs, Peter (April 3, 2018). "Two types of debunking arguments". Philosophical Psychology 31 (3): 383–402. doi:10.1080/09515089.2018.1426100.

- ↑ Meyers, C. D. (May 19, 2015). "Brains, trolleys, and intuitions: Defending deontology from the Greene/Singer argument". Philosophical Psychology 28 (4): 466–486. doi:10.1080/09515089.2013.849381.

- ↑ Kahane, Guy (2012). "On the Wrong Track: Process and Content in Moral Psychology". Mind & Language 27 (5): 519–545. doi:10.1111/mila.12001. PMID 23335831.

- ↑ Nagel, Thomas (2013-11-02). "You Can't Learn About Morality from Brain Scans: The problem with moral psychology". New Republic. https://newrepublic.com/article/115279/joshua-greenes-moral-tribes-reviewed-thomas-nagel. Retrieved 24 November 2013.

- ↑ 52.0 52.1 52.2 Wright, Robert (23 October 2013). "Why Can't We All Just Get Along? The Uncertain Biological Basis of Morality". The Atlantic. https://www.theatlantic.com/magazine/archive/2013/11/why-we-fightand-can-we-stop/309525/.

- ↑ "Neuroscience and Moral Reasoning: A Note on Recent Research". Philosophy & Public Affairs 37 (4): 330–345. September 2009. doi:10.1111/j.1088-4963.2009.01165.x. ISSN 0048-3915.

- ↑ Kass, Leon (1998). The ethics of human cloning. AEI Press. ISBN 978-0844740508. OCLC 38989719. https://archive.org/details/ethicsofhumanclo0000kass.

- ↑ Scarantino, Andrea; de Sousa, Ronald (2018-09-25). "Emotion". in Zalta, Edward N.. Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/emotion/?PHPSESSID=294fbdac95a1996d91ef0a3f4d22cbd2.

- ↑ Prinz, Jesse (2011). "Against empathy". Southern Journal of Philosophy 49 (s1): 214–233. doi:10.1111/j.2041-6962.2011.00069.x.

- ↑ Cummins, Denise (Oct 20, 2013). "Why Paul Bloom Is Wrong About Empathy and Morality". https://www.psychologytoday.com/gb/blog/good-thinking/201310/why-paul-bloom-is-wrong-about-empathy-and-morality.

- ↑ 58.0 58.1 Earp, Brian (2017). Moral neuroenhancement. Routledge. OCLC 1027761018.
