Self-play (reinforcement learning technique)

From HandWiki - Reading time: 4 min

From HandWiki - Reading time: 4 min

| Machine learning and data mining |

|---|

|

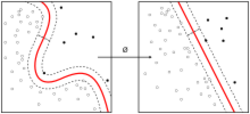

Self-play is a technique for improving the performance of reinforcement learning agents. Intuitively, agents learn to improve their performance by playing "against themselves".

Definition and motivation

In multi-agent reinforcement learning experiments, researchers try to optimize the performance of a learning agent on a given task, in cooperation or competition with one or more other agents. These agents learn by trial and error, and researchers may choose to have the same learning algorithm play the roles of two or more of them. When successfully executed, this technique has two advantages:

- It provides a straightforward way to determine the actions of the other agents. Because those agents are driven by the learner's own policy, they present a meaningful challenge that keeps pace with its improvement.

- It increases the amount of experience that can be used to improve the policy by a factor of two or more, since the experience gathered from the viewpoint of each of the agents can be used for learning.
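Both advantages can be illustrated with a small, hypothetical sketch. It assumes a toy take-away game (players alternately remove 1 to 3 stones, and whoever takes the last stone wins) and uses plain tabular Q-learning with a negamax-style target. This is a swapped-in textbook technique, not the search-based training used by systems such as AlphaZero. A single value table, stored from the viewpoint of whichever player is about to move, chooses the moves for both seats, and every move from either seat produces a learning update. The game, the function names (`train_self_play`, `greedy_policy`, etc.) and the hyperparameters are all illustrative assumptions.

```python
import random
from collections import defaultdict

# Hypothetical toy game for illustration: a pile of stones, each turn the
# player to move removes 1-3 stones, and whoever takes the last stone wins.
MAX_TAKE = 3
MAX_PILE = 12


def legal_actions(pile):
    """Moves available to whoever is about to play."""
    return list(range(1, min(MAX_TAKE, pile) + 1))


def best_value(q, pile):
    """Highest estimated value for the player to move at `pile`."""
    return max(q[(pile, a)] for a in legal_actions(pile))


def choose(q, pile, epsilon, rng):
    """Epsilon-greedy move drawn from the single shared value table."""
    actions = legal_actions(pile)
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: q[(pile, a)])


def train_self_play(episodes=20000, alpha=0.5, epsilon=0.2, seed=0):
    """Train one agent that plays both sides of every game.

    Values are stored from the perspective of the player to move, so the
    same table serves both seats; the opponent's best value is negated
    (a negamax-style Q-learning target).
    """
    rng = random.Random(seed)
    q = defaultdict(float)  # (pile, action) -> value for the mover
    for _ in range(episodes):
        pile = rng.randint(1, MAX_PILE)  # random start so every pile is seen
        while pile > 0:
            # Whichever seat is on move, the same policy picks the action,
            # and every move (from either seat) becomes a training update.
            action = choose(q, pile, epsilon, rng)
            next_pile = pile - action
            if next_pile == 0:
                target = 1.0  # mover took the last stone and wins
            else:
                target = -best_value(q, next_pile)  # opponent's gain is our loss
            q[(pile, action)] += alpha * (target - q[(pile, action)])
            pile = next_pile
    return q


def greedy_policy(q):
    """Best learned move for every pile size from 1 to MAX_PILE."""
    return {p: max(legal_actions(p), key=lambda a: q[(p, a)])
            for p in range(1, MAX_PILE + 1)}


if __name__ == "__main__":
    policy = greedy_policy(train_self_play())
    for pile, action in policy.items():
        print(pile, action)
```

In this game the winning strategy is to leave the opponent a multiple of four stones, so for any pile that is not a multiple of four the learned greedy move should be `pile % 4`. The agent reaches this without ever facing a hand-coded opponent, and each game contributes updates from both players' moves.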

Usage

Self-play is used by the AlphaZero program to improve its performance in the games of chess, shogi and Go.[1]

Self-play is also used to train Meta's Cicero AI system, which outperforms humans at the game of Diplomacy, and the DeepNash system, which plays the game Stratego.[2][3]

Connections to other disciplines

Self-play has been compared to the epistemological concept of tabula rasa that describes the way that humans acquire knowledge from a "blank slate".[4]

Further reading

- "Survey of Self-Play in Reinforcement Learning". 2021. arXiv:2107.02850.

References

- ↑ Silver, David; Hubert, Thomas; Schrittwieser, Julian; Antonoglou, Ioannis; Lai, Matthew; Guez, Arthur; Lanctot, Marc; Sifre, Laurent; Kumaran, Dharshan; Graepel, Thore; Lillicrap, Timothy; Simonyan, Karen; Hassabis, Demis (5 December 2017). "Mastering Chess and Shogi by Self-Play with a General Reinforcement Learning Algorithm". arXiv:1712.01815 [cs.AI].

- ↑ Snyder, Alison (1 December 2022). "Two new AI systems beat humans at complex games". Axios. https://www.axios.com/2022/12/01/ai-beats-humans-complex-games.

- ↑ Erich_Grunewald. "Notes on Meta's Diplomacy-Playing AI". LessWrong. https://www.lesswrong.com/posts/oT8fmwWddGwnZbbym/notes-on-meta-s-diplomacy-playing-ai.

- ↑ Laterre, Alexandre (2018). "Ranked Reward: Enabling Self-Play Reinforcement Learning for Combinatorial Optimization". arXiv:1807.01672.
