Transformer (machine learning model)

From HandWiki - Reading time: 15 min


A transformer is a deep learning model that adopts the mechanism of self-attention, differentially weighting the significance of each part of the input data. It is used primarily in the fields of natural language processing (NLP)[1] and computer vision (CV).[2]

Like recurrent neural networks (RNNs), transformers are designed to process sequential input data, such as natural language, with applications to tasks such as translation and text summarization. However, unlike RNNs, transformers process the entire input all at once. The attention mechanism provides context for any position in the input sequence. For example, if the input data is a natural language sentence, the transformer does not have to process one word at a time. This allows for more parallelization than RNNs and therefore reduces training times.[1]

Transformers were introduced in 2017 by a team at Google Brain[1] and are increasingly the model of choice for NLP problems,[3] replacing RNN models such as long short-term memory (LSTM). The additional training parallelization allows training on larger datasets. This led to the development of pretrained systems such as BERT (Bidirectional Encoder Representations from Transformers) and GPT (Generative Pre-trained Transformer), which were trained with large language datasets, such as the Wikipedia Corpus and Common Crawl, and can be fine-tuned for specific tasks.[4][5]

Background

Before transformers, most state-of-the-art NLP systems relied on gated RNNs, such as LSTM and gated recurrent units (GRUs), with added attention mechanisms. Transformers are built on these attention technologies without using an RNN structure, highlighting the fact that attention mechanisms alone can match the performance of RNNs with attention.

Sequential processing

Gated RNNs process tokens sequentially, maintaining a state vector that, after each token, contains a representation of the data seen so far. To process the $n$th token, the model combines the state representing the sentence up to token $n-1$ with the information of the new token to create a new state, representing the sentence up to token $n$. Theoretically, the information from one token can propagate arbitrarily far down the sequence, if at every point the state continues to encode contextual information about the token. In practice this mechanism is flawed: the vanishing gradient problem leaves the model's state at the end of a long sentence without precise, extractable information about preceding tokens. The dependency of each token's computation on the results of the previous token's computation also makes it hard to parallelize computation on modern deep learning hardware, which can make the training of RNNs inefficient.
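A minimal sketch of the sequential dependency described above. It assumes an ungated recurrent update on scalar inputs (real LSTMs and GRUs add gates and operate on vectors). The weights `w_x` and `w_h` and the function name `run_rnn` are illustrative only. Each step has to wait for the previous state, and the first token's contribution to the state shrinks as more tokens are processed. The sketch shows this forward effect only and computes no gradients.

```python
import math


def run_rnn(inputs, w_x=0.5, w_h=0.9, h0=0.0):
    """Toy ungated recurrent network over a sequence of scalar inputs.

    The weights w_x and w_h are arbitrary illustrative values, not learned.
    """
    h = h0
    states = []
    for x in inputs:
        # Step t needs the state from step t-1, so the loop cannot run in parallel.
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states


# Only the first token carries a signal; its trace in the state fades step by step.
states = run_rnn([1.0, 0.0, 0.0, 0.0])
print([round(s, 3) for s in states])
```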

Self-attention

These problems were addressed by attention mechanisms. Attention mechanisms let a model draw from the state at any preceding point along the sequence. The attention layer can access all previous states and weight them according to a learned measure of relevance, providing relevant information about far-away tokens.

A clear example of the value of attention is in language translation, where context is essential to assign the meaning of a word in a sentence. In an English-to-French translation system, the first word of the French output most probably depends heavily on the first few words of the English input. However, in a classic LSTM model, in order to produce the first word of the French output, the model is given only the state vector after processing the last English word. Theoretically, this vector can encode information about the whole English sentence, giving the model all necessary knowledge. In practice, this information is often poorly preserved by the LSTM. An attention mechanism can be added to address this problem: the decoder is given access to the state vectors of every English input word, not just the last, and can learn attention weights that dictate how much to attend to each English input state vector.
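The decoder-side attention described above can be sketched as follows. This sketch assumes plain dot-product scoring between the decoder state and each encoder state, which is one of several scoring functions used in practice. The helper names and the 2-dimensional states are hypothetical.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(decoder_state, encoder_states):
    """Weight every encoder state by its dot-product score with the decoder state."""
    scores = [sum(a * b for a, b in zip(decoder_state, h)) for h in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    # Context vector: attention-weighted sum of all encoder states, not just the last.
    context = [sum(w * h[d] for w, h in zip(weights, encoder_states)) for d in range(dim)]
    return weights, context


# Hypothetical 2-dimensional encoder states for a three-word English input.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights, context = attend([2.0, 0.0], encoder_states)
print(weights, context)
```

The first encoder state aligns best with the decoder state, so it receives the largest weight. The other states still contribute to the context vector.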

When added to RNNs, attention mechanisms increase performance. The development of the Transformer architecture revealed that attention mechanisms were powerful in themselves and that sequential recurrent processing of data was not necessary to achieve the quality gains of RNNs with attention. Transformers use an attention mechanism without an RNN, processing all tokens at the same time and calculating attention weights between them in successive layers. Since the attention mechanism only uses information about other tokens from lower layers, it can be computed for all tokens in parallel, which leads to improved training speed.

Architecture

Input

The input text is parsed into tokens by a byte pair encoding tokenizer, and each token is converted via a word embedding into a vector. Then, positional information of the token is added to the word embedding.
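To illustrate how a byte pair encoding vocabulary is built, the sketch below repeatedly merges the most frequent adjacent pair of symbols in a toy word-frequency table. The corpus and function names are hypothetical. Production tokenizers differ in details such as operating on raw bytes, marking word boundaries, and breaking ties.

```python
from collections import Counter


def most_frequent_pair(words):
    """Return the adjacent symbol pair with the highest total frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs.most_common(1)[0][0] if pairs else None


def merge_pair(words, pair):
    """Rewrite every word so that each occurrence of `pair` becomes one symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_bpe(word_counts, num_merges):
    """Learn up to `num_merges` merge rules, starting from single characters."""
    words = {tuple(word): freq for word, freq in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:  # every word is already a single symbol
            break
        merges.append(pair)
        words = merge_pair(words, pair)
    return merges, words


merges, vocab = learn_bpe({"low": 5, "lower": 2, "newest": 6, "widest": 3}, num_merges=3)
print(merges)
```

On this corpus the first three merges learned are `e`+`s`, `es`+`t`, and `l`+`o`. New text would be tokenized by replaying the learned merges in the same order.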

Encoder–decoder architecture

Like earlier seq2seq models, the original Transformer model used an encoder–decoder architecture. The encoder consists of encoding layers that process the input iteratively one layer after another, while the decoder consists of decoding layers that do the same thing to the encoder's output.

The function of each encoder layer is to generate encodings that contain information about which parts of the inputs are relevant to each other. It passes its encodings to the next encoder layer as inputs. Each decoder layer does the opposite, taking all the encodings and using their incorporated contextual information to generate an output sequence.[6] To achieve this, each encoder and decoder layer makes use of an attention mechanism.

For each input, attention weighs the relevance of every other input and draws from them to produce the output.[7] Each decoder layer has an additional attention mechanism that draws information from the outputs of previous decoders, before the decoder layer draws information from the encodings.

Both the encoder and decoder layers have a feed-forward neural network for additional processing of the outputs and contain residual connections and layer normalization steps.[7]
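Here is a sketch of the residual connection and layer normalization step. It assumes the arrangement of the original paper, where each sublayer's output is computed as $\mathrm{LayerNorm}(x + \mathrm{Sublayer}(x))$; many later models normalize before the sublayer instead. The stand-in sublayer and the identity scale and shift are placeholders for learned components.

```python
import math


def layer_norm(x, gamma=None, beta=None, eps=1e-5):
    """Normalize one vector to zero mean and unit variance, then scale and shift."""
    n = len(x)
    if gamma is None:
        gamma = [1.0] * n  # learned scale in a real model
    if beta is None:
        beta = [0.0] * n  # learned shift in a real model
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n
    return [g * (v - mean) / math.sqrt(var + eps) + b for v, g, b in zip(x, gamma, beta)]


def add_and_norm(x, sublayer):
    """Residual connection around a sublayer, followed by layer normalization."""
    y = sublayer(x)
    return layer_norm([a + b for a, b in zip(x, y)])


# Hypothetical stand-in for an attention or feed-forward sublayer: doubles each value.
out = add_and_norm([1.0, 2.0, 3.0, 4.0], lambda v: [2.0 * a for a in v])
print(out)
```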

Scaled dot-product attention

The building blocks of the transformer are scaled dot-product attention units. When a sentence is passed into a transformer model, attention weights are calculated between every pair of tokens simultaneously. The attention unit produces an embedding for every token in context that contains information about the token itself along with a weighted combination of other relevant tokens, each weighted by its attention weight.

For each attention unit the transformer model learns three weight matrices: the query weights $W_Q$, the key weights $W_K$, and the value weights $W_V$. For each token $i$, the input word embedding $x_i$ is multiplied with each of the three weight matrices to produce a query vector $q_i = x_i W_Q$, a key vector $k_i = x_i W_K$, and a value vector $v_i = x_i W_V$. Attention weights are calculated using the query and key vectors: the attention weight $a_{ij}$ from token $i$ to token $j$ is based on the dot product $q_i \cdot k_j$. These dot products are divided by the square root of the dimension of the key vectors, $\sqrt{d_k}$, which stabilizes gradients during training. They are then passed through a softmax over $j$, which normalizes the weights so that they sum to 1. The fact that $W_Q$ and $W_K$ are different matrices allows attention to be non-symmetric: if token $i$ attends to token $j$ (i.e. $q_i \cdot k_j$ is large), this does not necessarily mean that token $j$ will attend to token $i$ (i.e. $q_j \cdot k_i$ could be small). The output of the attention unit for token $i$ is the weighted sum of the value vectors of all tokens, weighted by $a_{ij}$, the attention from token $i$ to each token $j$.

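The attention calculation for all tokens can be expressed as one large matrix calculation using the softmax function. This is useful for training because optimized matrix-multiplication routines can compute it quickly. Let $Q$, $K$ and $V$ be the matrices whose $i$th rows are the vectors $q_i$, $k_i$, and $v_i$ respectively. Then attention can be written as

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^\mathrm{T}}{\sqrt{d_k}}\right)V$$

where $d_k$ is the dimension of the key vectors and the softmax is taken over each row of its argument.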
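The matrix formula $\mathrm{softmax}(QK^\mathrm{T}/\sqrt{d_k})V$ can be sketched in plain Python as below. The token embeddings and the weight matrices `W_Q`, `W_K`, `W_V` are small hypothetical values; a real implementation would use an array library and learned weights. Because `W_Q` differs from `W_K`, the weight from token 0 to token 1 differs from the weight from token 1 to token 0. This illustrates the non-symmetry of attention.

```python
import math


def matmul(a, b):
    """Multiply matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax_rows(m):
    """Apply a numerically stable softmax to each row."""
    out = []
    for row in m:
        mx = max(row)
        exps = [math.exp(v - mx) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def attention(Q, K, V):
    """Return softmax(Q K^T / sqrt(d_k)) V and the attention weights."""
    d_k = len(K[0])
    K_T = [list(col) for col in zip(*K)]
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(Q, K_T)]
    weights = softmax_rows(scores)  # row i holds the weights a_ij over all tokens j
    return matmul(weights, V), weights


# Hypothetical embeddings for 3 tokens (one per row) and 2x2 weight matrices.
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W_Q = [[1.0, 0.0], [0.0, 2.0]]
W_K = [[0.0, 1.0], [1.0, 0.0]]
W_V = [[1.0, 0.0], [0.0, 1.0]]

Q, K, V = matmul(X, W_Q), matmul(X, W_K), matmul(X, W_V)
output, weights = attention(Q, K, V)
print(weights[0][1], weights[1][0])  # differ because W_Q != W_K
```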

Multi-head attention

One set of query, key, and value weight matrices is called an attention head, and each layer in a transformer model has multiple attention heads. Each attention head attends to the tokens that are relevant to each token. With multiple attention heads, the model can do this for different definitions of "relevance". In addition, the influence field representing relevance can become progressively dilated in successive layers. Many transformer attention heads encode relevance relations that are meaningful to humans. For example, some attention heads attend mostly to the next word, while others mainly attend from verbs to their direct objects.[8] The computations for each attention head can be performed in parallel, which allows for fast processing. The outputs of the attention heads are concatenated, multiplied by an output weight matrix, and passed on to the feed-forward neural network layers.
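Here is a sketch of multi-head attention under the following assumptions. Each head has its own query, key, and value projections. The head outputs are concatenated per token and, as in the original paper, multiplied by an output matrix (here called `W_O`). Dimensions are toy values, and the weights are random placeholders rather than trained parameters.

```python
import math
import random


def matmul(a, b):
    """Multiply matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def attention(Q, K, V):
    """Scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V, row by row."""
    d_k = len(K[0])
    out = []
    for q in Q:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        w = [e / total for e in exps]
        out.append([sum(wi * v[j] for wi, v in zip(w, V)) for j in range(len(V[0]))])
    return out


def multi_head_attention(X, heads, W_O):
    """Run every head on the same input, concatenate per token, then project.

    `heads` is a list of (W_Q, W_K, W_V) triples, one per attention head.
    """
    # The heads are independent of each other, so they could run in parallel.
    head_outputs = [attention(matmul(X, W_Q), matmul(X, W_K), matmul(X, W_V))
                    for W_Q, W_K, W_V in heads]
    concat = [[x for h in head_outputs for x in h[i]] for i in range(len(X))]
    return matmul(concat, W_O)


rng = random.Random(0)


def rand_matrix(rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


d_model, n_heads = 4, 2
d_head = d_model // n_heads
X = rand_matrix(3, d_model)  # three hypothetical token embeddings
heads = [tuple(rand_matrix(d_model, d_head) for _ in range(3)) for _ in range(n_heads)]
W_O = rand_matrix(n_heads * d_head, d_model)
Y = multi_head_attention(X, heads, W_O)
print(Y)
```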

Encoder

Each encoder consists of two major components: a self-attention mechanism and a feed-forward neural network. The self-attention mechanism accepts input encodings from the previous encoder and weighs their relevance to each other to generate output encodings. The feed-forward neural network further processes each output encoding individually. These output encodings are then passed to the next encoder as its input, as well as to the decoders.
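The feed-forward network applies the same function to every position separately. The sketch below assumes the two-layer ReLU form $\mathrm{FFN}(x) = \max(0, xW_1 + b_1)W_2 + b_2$ from the original paper, with hypothetical toy parameters. The second call shows that a position's output does not depend on the other positions passed in with it.

```python
def relu(v):
    return [max(0.0, a) for a in v]


def linear(x, W, b):
    """Row vector x (length n) times matrix W (n x m), plus bias b (length m)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) + b[j] for j in range(len(b))]


def position_wise_ffn(encodings, W1, b1, W2, b2):
    """Apply the same two-layer ReLU network to each position on its own."""
    return [linear(relu(linear(x, W1, b1)), W2, b2) for x in encodings]


# Hypothetical parameters: model dimension 2, inner dimension 3.
W1 = [[1.0, -1.0, 0.0], [0.0, 1.0, 1.0]]
b1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
b2 = [0.0, 0.0]

batch = position_wise_ffn([[1.0, 2.0], [2.0, 0.0]], W1, b1, W2, b2)
alone = position_wise_ffn([[1.0, 2.0]], W1, b1, W2, b2)
print(batch, alone)
```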

The first encoder takes positional information and embeddings of the input sequence as its input, rather than encodings. The positional information is necessary for the transformer to make use of the order of the sequence, because no other part of the transformer makes use of this.[1]

The encoder is bidirectional. Attention can be placed on tokens before and after the current token.

Positional encoding

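The positional encoding is defined as a function of type $f: \mathbb{R} \to \mathbb{R}^d$, where $d$ is a positive even integer, by

$$(f(t)_{2k}, f(t)_{2k+1}) = (\sin\theta, \cos\theta) \quad \forall k \in \{0, 1, \ldots, d/2 - 1\}$$

where $\theta = \frac{t}{r^k}$ and $r = N^{2/d}$. Here, $N$ is a free parameter that should be significantly larger than the biggest $t$ that would be input into the positional encoding function. In the original paper,[1] the authors chose $N = 10000$.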
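Below is a direct transcription of the sinusoidal encoding. It assumes components $2k$ and $2k+1$ hold $\sin\theta$ and $\cos\theta$, with $\theta = t/r^k$, $r = N^{2/d}$, and a default of $N = 10000$. The function name is hypothetical.

```python
import math


def positional_encoding(t, d, N=10000.0):
    """Sinusoidal encoding of position t as a length-d list (d must be even)."""
    if d % 2:
        raise ValueError("d must be even")
    r = N ** (2.0 / d)
    enc = []
    for k in range(d // 2):
        theta = t / r ** k  # lower-index pairs oscillate fastest
        enc.extend([math.sin(theta), math.cos(theta)])
    return enc


pe0 = positional_encoding(0, 8)
pe5 = positional_encoding(5, 8)
print(pe0)
print(pe5)
```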

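The function is in a simpler form when written as a complex function of type $f: \mathbb{R} \to \mathbb{C}^{d/2}$:

$$f(t) = \left(e^{it/r^k}\right)_{k=0,1,\ldots,\frac{d}{2}-1}$$

where $r = N^{2/d}$.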

The main reason the authors chose this as the positional encoding function is that it allows one to perform shifts as linear transformations:

$$f(t + \Delta t) = \mathrm{diag}(f(\Delta t))\, f(t)$$

where $\Delta t \in \mathbb{R}$ is the distance one wishes to shift. This allows the transformer to take any encoded position and find the encoding of the position one step ahead or one step behind, etc., by a matrix multiplication.

By taking a linear sum, any convolution can also be implemented as a linear transformation:

$$\sum_j c_j f(t + \Delta t_j) = \left(\sum_j c_j \,\mathrm{diag}(f(\Delta t_j))\right) f(t)$$

for any constants $c_j$. This allows the transformer to take any encoded position and find a linear sum of the encoded locations of its neighbors. This sum of encoded positions, when fed into the attention mechanism, would create attention weights on its neighbors, much like what happens in a convolutional neural network language model. In the authors' words, "we hypothesized it would allow the model to easily learn to attend by relative positions".

In typical implementations, all operations are done over the real numbers, not the complex numbers, but since complex multiplication can be implemented as real 2-by-2 matrix multiplication, this is a mere notational difference.
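The shift property can be checked numerically with real arithmetic. Each $(\sin\theta, \cos\theta)$ pair is rotated by a 2-by-2 matrix whose angle depends only on the shift $\Delta t$. The sketch below compares the rotated encoding of position 7 with the directly computed encoding of position 10. The helper names are hypothetical, and it uses the same sinusoidal definition with $N = 10000$.

```python
import math


def positional_encoding(t, d, N=10000.0):
    """Sinusoidal encoding: pairs (sin(t / r**k), cos(t / r**k)) with r = N**(2/d)."""
    r = N ** (2.0 / d)
    enc = []
    for k in range(d // 2):
        theta = t / r ** k
        enc.extend([math.sin(theta), math.cos(theta)])
    return enc


def shift_encoding(enc, dt, N=10000.0):
    """Map f(t) to f(t + dt) using one 2-by-2 rotation per (sin, cos) pair.

    The rotation angles depend only on dt, not on t, so for a fixed dt this is
    a single block-diagonal linear map applied to the encoding.
    """
    d = len(enc)
    r = N ** (2.0 / d)
    out = []
    for k in range(d // 2):
        s, c = enc[2 * k], enc[2 * k + 1]
        phi = dt / r ** k
        # sin(a + b) = sin a cos b + cos a sin b
        out.append(s * math.cos(phi) + c * math.sin(phi))
        # cos(a + b) = cos a cos b - sin a sin b
        out.append(c * math.cos(phi) - s * math.sin(phi))
    return out


shifted = shift_encoding(positional_encoding(7, 16), 3)
direct = positional_encoding(10, 16)
print(max(abs(a - b) for a, b in zip(shifted, direct)))
```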

Other positional encoding schemes exist.[9]

Decoder

Each decoder consists of three major components: a self-attention mechanism, an attention mechanism over the encodings, and a feed-forward neural network. The decoder functions in a similar fashion to the encoder, but an additional attention mechanism is inserted which instead draws relevant information from the encodings generated by the encoders. This mechanism can also be called the encoder-decoder attention.[1][7]
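Here is a sketch of encoder-decoder attention, in which queries come from the decoder while keys and values come from the encoder outputs. To keep it short, the learned query, key, and value projections are assumed to be identity matrices, and the states are hypothetical. The output has one row per decoder position, regardless of the length of the source sequence.

```python
import math


def attention(Q, K, V):
    """Scaled dot-product attention; returns one output row per query."""
    d_k = len(K[0])
    out = []
    for q in Q:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out


def encoder_decoder_attention(decoder_states, encoder_outputs):
    """Queries from the decoder; keys and values from the encoder (projections omitted)."""
    return attention(decoder_states, encoder_outputs, encoder_outputs)


encoder_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]  # 4 source tokens
decoder_states = [[0.2, 0.1], [0.0, 3.0]]  # 2 target tokens generated so far
ctx = encoder_decoder_attention(decoder_states, encoder_outputs)
print(ctx)
```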

Like the first encoder, the first decoder takes positional information and embeddings of the output sequence as its input, rather than encodings. The transformer must not use the current or future output to predict an output, so the output sequence must be partially masked to prevent this reverse information flow.[1] This allows for autoregressive text generation. In the decoder's self-attention, no head can place attention on following tokens. The last decoder is followed by a final linear transformation and softmax layer, which produce the output probabilities over the vocabulary.
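The masking can be sketched by setting the scores for all later positions to $-\infty$ before the softmax, so that their weights become exactly zero. As a simplification, projections are omitted: queries, keys, and values are the inputs themselves. The last lines change the final token and recompute, which should leave the earlier outputs unchanged.

```python
import math


def causal_self_attention(X):
    """Masked self-attention: position i may only attend to positions j <= i."""
    n, d = len(X), len(X[0])
    outputs, all_weights = [], []
    for i in range(n):
        scores = []
        for j in range(n):
            if j > i:
                scores.append(float("-inf"))  # future token: masked out
            else:
                scores.append(sum(a * b for a, b in zip(X[i], X[j])) / math.sqrt(d))
        m = max(scores)  # finite, since position i can always see itself
        exps = [math.exp(s - m) for s in scores]  # exp(-inf) evaluates to 0.0
        total = sum(exps)
        weights = [e / total for e in exps]
        all_weights.append(weights)
        outputs.append([sum(w * x[k] for w, x in zip(weights, X)) for k in range(d)])
    return outputs, all_weights


X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, W = causal_self_attention(X)
out_changed, _ = causal_self_attention([X[0], X[1], [5.0, -2.0]])  # alter the last token
print(W)
```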

GPT has a decoder-only architecture.

Alternatives

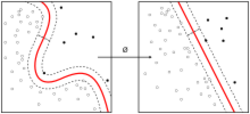

Training transformer-based architectures can be expensive, especially for long inputs.[10] Alternative architectures include the Reformer, which reduces the computational load from $O(N^2)$ to $O(N \ln N)$, and models like ETC/BigBird, which can reduce it to $O(N)$,[11] where $N$ is the length of the sequence. The Reformer achieves this using locality-sensitive hashing and reversible layers.[12][13]

Ordinary transformers require a memory size which is quadratic in the size of the context window. Attention Free Transformers[14] reduce this to a linear dependence while still retaining the advantages of a transformer by linking the key to the value.
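For scale, with a 4,096-token context the ordinary attention matrix holds $4096^2 = 16{,}777{,}216$ scores per head. The sketch below is a simplified reading of the AFT-simple variant of the Attention Free Transformer.[14] It omits the learned position biases of the full model, and its details should be checked against the paper before relying on it. For each feature, keys are softmax-normalized over the sequence and used to pool the values. The pooled vector is then gated by the sigmoid of each query, so no $N \times N$ matrix is ever formed. The function name and inputs are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def aft_simple(Q, K, V):
    """Attention-free mixing in time and memory linear in the sequence length."""
    n, d = len(K), len(K[0])
    pooled = []
    for j in range(d):
        m = max(K[t][j] for t in range(n))
        exps = [math.exp(K[t][j] - m) for t in range(n)]  # softmax over positions
        total = sum(exps)
        # Each key weights its own value: this is where keys are linked to values.
        pooled.append(sum(e * V[t][j] for t, e in enumerate(exps)) / total)
    return [[sigmoid(q[j]) * pooled[j] for j in range(d)] for q in Q]


# Hypothetical inputs: equal keys give a plain average of the values,
# and zero queries give a gate of 0.5 on every feature.
Y = aft_simple([[0.0, 0.0]] * 3, [[0.0, 0.0]] * 3, [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
print(Y)
```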

Long Range Arena, a benchmark for comparing efficient transformer architectures on long inputs, was introduced in late 2020.[15]

Training

Transformers are typically trained with self-supervised pretraining followed by supervised fine-tuning. Pretraining is typically done on a larger dataset than fine-tuning, due to the limited availability of labeled training data. Tasks for pretraining and fine-tuning commonly include:

- language modeling[4]

- next-sentence prediction[4]

- question answering[5]

- reading comprehension

- sentiment analysis[16]

- paraphrasing[16]
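Language modeling needs no labels, because the targets come from the text itself. The sketch below shows minimal data preparation for next-token (causal) language modeling, as used by GPT-style models; BERT-style models instead mask tokens and predict them. The sentence, the window size, and the function name are hypothetical. Real pipelines operate on subword token IDs rather than words.

```python
def next_token_pairs(tokens, context_size):
    """Turn unlabeled text into (context, target) training examples.

    The target for each example is simply the token that follows the context,
    so no human annotation is needed.
    """
    pairs = []
    for i in range(1, len(tokens)):
        context = tokens[max(0, i - context_size):i]
        pairs.append((context, tokens[i]))
    return pairs


tokens = "the cat sat on the mat".split()
examples = next_token_pairs(tokens, context_size=3)
for context, target in examples:
    print(context, "->", target)
```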

Applications

The transformer has had great success in natural language processing (NLP), for example in machine translation, and has also been applied to time series prediction.[17] Many pretrained models such as GPT-2, GPT-3, BERT, XLNet, and RoBERTa demonstrate the ability of transformers to perform a wide variety of NLP-related tasks, and have the potential to find real-world applications.[4][5][18] These may include:

- machine translation

- document summarization

- document generation

- named entity recognition (NER)[19]

- biological sequence analysis[20][21][22]

- video understanding.[23]

In 2020, it was shown that the transformer architecture, more specifically GPT-2, could be tuned to play chess.[24] Transformers have been applied to image processing with results competitive with convolutional neural networks.[25][26]

Implementations

The transformer model has been implemented in standard deep learning frameworks such as TensorFlow and PyTorch.

Transformers is a library produced by Hugging Face that supplies transformer-based architectures and pretrained models.[3]


References

- [1] Vaswani, Ashish; Shazeer, Noam; Parmar, Niki; Uszkoreit, Jakob; Jones, Llion; Gomez, Aidan N.; Kaiser, Lukasz; Polosukhin, Illia (2017-06-12). "Attention Is All You Need". arXiv:1706.03762 [cs.CL].

- [2] He, Cheng (2021-12-31). "Transformer in CV". Towards Data Science. https://towardsdatascience.com/transformer-in-cv-bbdb58bf335e.

- [3] Wolf, Thomas; Debut, Lysandre; Sanh, Victor; Chaumond, Julien; Delangue, Clement; Moi, Anthony; Cistac, Pierric; Rault, Tim et al. (2020). "Transformers: State-of-the-Art Natural Language Processing". Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations. pp. 38–45. doi:10.18653/v1/2020.emnlp-demos.6.

- [4] "Open Sourcing BERT: State-of-the-Art Pre-training for Natural Language Processing". http://ai.googleblog.com/2018/11/open-sourcing-bert-state-of-art-pre.html.

- [5] "Better Language Models and Their Implications" (2019-02-14). https://openai.com/blog/better-language-models/.

- [6] "Sequence Modeling with Neural Networks (Part 2): Attention Models" (2016-04-18). https://indico.io/blog/sequence-modeling-neural-networks-part2-attention-models/.

- [7] Alammar, Jay. "The Illustrated Transformer". http://jalammar.github.io/illustrated-transformer/.

- [8] Clark, Kevin; Khandelwal, Urvashi; Levy, Omer; Manning, Christopher D. (August 2019). "What Does BERT Look at? An Analysis of BERT's Attention". Proceedings of the 2019 ACL Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP (Florence, Italy: Association for Computational Linguistics): 276–286. doi:10.18653/v1/W19-4828. https://www.aclweb.org/anthology/W19-4828.

- [9] Dufter, Philipp; Schmitt, Martin; Schütze, Hinrich (2022-06-06). "Position Information in Transformers: An Overview". Computational Linguistics: 1–31. doi:10.1162/coli_a_00445. ISSN 0891-2017. https://doi.org/10.1162/coli_a_00445.

- [10] Kitaev, Nikita; Kaiser, Łukasz; Levskaya, Anselm (2020). "Reformer: The Efficient Transformer". arXiv:2001.04451 [cs.LG].

- [11] "Constructing Transformers For Longer Sequences with Sparse Attention Methods". https://ai.googleblog.com/2021/03/constructing-transformers-for-longer.html.

- [12] "Tasks with Long Sequences – Chatbot". https://www.coursera.org/lecture/attention-models-in-nlp/tasks-with-long-sequences-suzNH.

- [13] "Reformer: The Efficient Transformer". http://ai.googleblog.com/2020/01/reformer-efficient-transformer.html.

- [14] Zhai, Shuangfei; Talbott, Walter; Srivastava, Nitish; Huang, Chen; Goh, Hanlin; Zhang, Ruixiang; Susskind, Josh (2021-09-21). "An Attention Free Transformer". arXiv:2105.14103 [cs.LG].

- [15] Tay, Yi; Dehghani, Mostafa; Abnar, Samira; Shen, Yikang; Bahri, Dara; Pham, Philip; Rao, Jinfeng; Yang, Liu; Ruder, Sebastian; Metzler, Donald (2020-11-08). "Long Range Arena: A Benchmark for Efficient Transformers". arXiv:2011.04006 [cs.LG].

- [16] Wang, Alex; Singh, Amanpreet; Michael, Julian; Hill, Felix; Levy, Omer; Bowman, Samuel (2018). "GLUE: A Multi-Task Benchmark and Analysis Platform for Natural Language Understanding". Proceedings of the 2018 EMNLP Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP (Stroudsburg, PA, USA: Association for Computational Linguistics): 353–355. doi:10.18653/v1/w18-5446.

- [17] Allard, Maxime (2019-07-01). "What is a Transformer?". https://medium.com/inside-machine-learning/what-is-a-transformer-d07dd1fbec04.

- [18] Yang, Zhilin; Dai, Zihang; Yang, Yiming; Carbonell, Jaime; Salakhutdinov, Ruslan; Le, Quoc V. (2019-06-19). XLNet: Generalized Autoregressive Pretraining for Language Understanding. OCLC 1106350082.

- [19] Data Monsters (2017-09-26). "10 Applications of Artificial Neural Networks in Natural Language Processing". https://medium.com/@datamonsters/artificial-neural-networks-in-natural-language-processing-bcf62aa9151a.

- [20] Rives, Alexander; Goyal, Siddharth; Meier, Joshua; Guo, Demi; Ott, Myle; Zitnick, C. Lawrence; Ma, Jerry; Fergus, Rob (2019). "Biological structure and function emerge from scaling unsupervised learning to 250 million protein sequences". bioRxiv 10.1101/622803.

- [21] Nambiar, Ananthan; Heflin, Maeve; Liu, Simon; Maslov, Sergei; Hopkins, Mark; Ritz, Anna (2020). Transforming the Language of Life: Transformer Neural Networks for Protein Prediction Tasks. doi:10.1145/3388440.3412467.

- [22] Rao, Roshan; Bhattacharya, Nicholas; Thomas, Neil; Duan, Yan; Chen, Xi; Canny, John; Abbeel, Pieter; Song, Yun S. (2019). "Evaluating Protein Transfer Learning with TAPE". bioRxiv 10.1101/676825.

- [23] Bertasius, Gedas; Wang, Heng; Torresani, Lorenzo (2021). "Is Space-Time Attention All You Need for Video Understanding?". arXiv:2102.05095 [cs.CV].

- [24] Noever, David; Ciolino, Matt; Kalin, Josh (2020-08-21). "The Chess Transformer: Mastering Play using Generative Language Models". arXiv:2008.04057 [cs.AI].

- [25] Dosovitskiy, Alexey; Beyer, Lucas; Kolesnikov, Alexander; Weissenborn, Dirk; Zhai, Xiaohua; Unterthiner, Thomas; Dehghani, Mostafa; Minderer, Matthias; Heigold, Georg; Gelly, Sylvain; Uszkoreit, Jakob; Houlsby, Neil (2020). "An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale". arXiv:2010.11929 [cs.CV].

- [26] Touvron, Hugo; Cord, Matthieu; Douze, Matthijs; Massa, Francisco; Sablayrolles, Alexandre; Jégou, Hervé (2020). "Training data-efficient image transformers & distillation through attention". arXiv:2012.12877 [cs.CV].

Further reading

- Hubert Ramsauer et al. (2020), "Hopfield Networks is All You Need", preprint submitted for ICLR 2021. arXiv:2008.02217; see also the authors' blog. Discusses the effect of a transformer layer as equivalent to a Hopfield update, bringing the input closer to one of the fixed points (representable patterns) of a continuous-valued Hopfield network.

- Alexander Rush, "The Annotated Transformer", Harvard NLP group, 3 April 2018
