Entropy

From Conservapedia

Entropy is a quantitative measure of the "disorder" in a system. It forms the basis of the second law of thermodynamics, that entropy tends to increase. In other words, everything tends toward greater disorder in the absence of intelligent intervention.

The second law of thermodynamics states that entropy tends not to decrease over time within an isolated system, defining an isolated system as one in which neither matter nor energy may enter or leave.[1]

Entropy is undeniable and yet creates perhaps insurmountable difficulties for many modern theories of physics. For example, it renders time asymmetric, resulting in an arrow of time that is impossible to reconcile with the theory of relativity. Increasing entropy renders the theory of evolution implausible, because that theory claims that order is increasing. Liberal denial is thus common in ignoring the significance of the increase in disorder.

The entropy of a system only depends on the state the system is currently in. The change in entropy therefore only depends on the initial and final states of that system, and not the path taken by the system to reach that final state.


Definitions

Thermodynamic definition

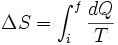

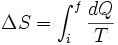

In classical thermodynamics, if a small amount of energy  is supplied to a system from a reservoir held at temperature

is supplied to a system from a reservoir held at temperature  , the small change in entropy,

, the small change in entropy,  , is given by

, is given by

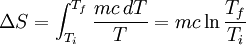

For a measurable change between two states i and f this expression integrates to

where  and

and  represent the initial and final states of the system.

represent the initial and final states of the system.

Example of Thermodynamic Entropy

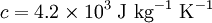

Consider a cup of coffee, of mass  and since it is mostly water, specific heat capacity

and since it is mostly water, specific heat capacity  . We shall assume that both

. We shall assume that both  and

and  are constant. If we leave the coffee for a while, it shall cool from

are constant. If we leave the coffee for a while, it shall cool from  to

to  . The change in entropy is, from above:

. The change in entropy is, from above:

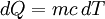

We can relate the change in heat,  , to the change in temperature,

, to the change in temperature,  by

by  . Then we can write:

. Then we can write:

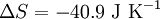

Plugging our numbers we find that the change in entropy is  . The change in entropy is negative. This does not disagree with the second law of thermodynamics, as it our cup of coffee is an open system, not an isolated system.

. The change in entropy is negative. This does not disagree with the second law of thermodynamics, as it our cup of coffee is an open system, not an isolated system.
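The cooling calculation can be checked numerically. The following is a minimal sketch that assumes the closed form ΔS = m c ln(T_f / T_i) for constant mass and specific heat; the function name and all numerical values (a 0.25 kg cup, the specific heat of water, cooling from 80 °C to 20 °C) are illustrative assumptions and are not taken from the article.

```python
import math


def entropy_change(mass_kg, specific_heat, t_initial_k, t_final_k):
    """Return Delta S = m * c * ln(T_f / T_i) in J/K, for constant m and c."""
    if t_initial_k <= 0 or t_final_k <= 0:
        raise ValueError("temperatures must be absolute (kelvin) and positive")
    return mass_kg * specific_heat * math.log(t_final_k / t_initial_k)


# Illustrative values only (assumptions, not the article's numbers).
MASS_KG = 0.25          # roughly one cup of coffee
SPECIFIC_HEAT = 4186.0  # J/(kg K), approximately that of water
T_HOT_K = 353.15        # 80 degrees Celsius
T_ROOM_K = 293.15       # 20 degrees Celsius

delta_s = entropy_change(MASS_KG, SPECIFIC_HEAT, T_HOT_K, T_ROOM_K)
print(f"Delta S = {delta_s:.1f} J/K")  # negative, because the coffee cools
```

Temperatures must be given in kelvin, since the ratio inside the logarithm is only meaningful on an absolute scale.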

Statistical mechanics definition 1 (Boltzmann Entropy)

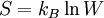

If a system can be arranged in $W$ different ways, the entropy is

$$S = k_B \ln W$$

where $k_B$ is Boltzmann's constant.[2]

Example of Boltzmann Entropy

This example is based on that found in University Physics with Modern Physics.[2] To see the statistical nature of this law, consider flipping four coins, each of which can land either heads (H) or tails (T). The entropy of each macro-state can be calculated using the formula above.

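| ID | Combinations | Number of Combinations | Entropy |
|---|---|---|---|
| 1 | HHHH | 1 | $k_B \ln 1 = 0$ |
| 2 | THHH HTHH HHTH HHHT | 4 | $k_B \ln 4$ |
| 3 | TTHH THTH THHT HTTH HTHT HHTT | 6 | $k_B \ln 6$ |
| 4 | TTTH TTHT THTT HTTT | 4 | $k_B \ln 4$ |
| 5 | TTTT | 1 | $k_B \ln 1 = 0$ |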

Intuitively, the macro-state with the highest entropy (or "disorder") is the third state, as it corresponds to the macro-state with the greatest number of micro-states. When you throw four coins, you expect to get two heads and two tails. Getting four heads is not impossible, only less likely.
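The counting in the four-coin example can be reproduced by brute-force enumeration. This is a minimal sketch, assuming the Boltzmann form S = k_B ln W; the helper name `macrostate_table` is hypothetical and chosen here only for illustration.

```python
import math
from collections import Counter
from itertools import product

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def macrostate_table(n_coins):
    """Map number of tails -> (multiplicity W, entropy k_B * ln W)."""
    # Enumerate every micro-state (sequence of H/T) and group by tails count.
    counts = Counter(flips.count("T") for flips in product("HT", repeat=n_coins))
    return {tails: (w, K_B * math.log(w)) for tails, w in sorted(counts.items())}


table = macrostate_table(4)
for tails, (w, s) in table.items():
    print(f"{tails} tails: W = {w}, S = {s:.3e} J/K")
```

The macro-state with two tails has the most micro-states and therefore the largest entropy, while the all-heads and all-tails states each have a single micro-state and zero entropy.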

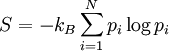

Statistical mechanics definition 2 (Gibbs Entropy)

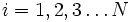

Label the different states a thermodynamic system can be in by $i$. If the probability of finding the system in state $i$ is $p_i$, then the entropy is

$$S = -k_B \sum_i p_i \ln p_i$$

where $k_B$ is the Boltzmann constant. This definition is closely related to ideas in information theory, where the definition of information content is very similar to the definition of entropy.
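The relation between the Gibbs and Boltzmann definitions can be illustrated numerically. The sketch below assumes the standard Gibbs form S = -k_B Σ p_i ln p_i; the function name `gibbs_entropy` and the six-state example are assumptions made for illustration. When all W states are equally likely, the result should agree with k_B ln W.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def gibbs_entropy(probabilities):
    """Return -k_B * sum(p_i * ln p_i); states with p_i = 0 contribute nothing."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0):
        raise ValueError("probabilities must sum to 1")
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0)


# Six equally likely states: Gibbs entropy reduces to k_B * ln(6).
uniform = [1 / 6] * 6
s_uniform = gibbs_entropy(uniform)
s_boltzmann = K_B * math.log(6)
print(s_uniform, s_boltzmann)
```

A system known with certainty to be in one state, such as `gibbs_entropy([1.0, 0.0])`, has zero entropy under this definition.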

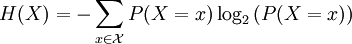

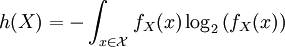

Entropy in Information Theory (Shannon Entropy)

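For a discrete random variable $X$ that takes the value $x$ with probability $p(x)$, entropy is defined as

$$H(X) = -\sum_x p(x) \log_2 p(x)$$

For a continuous random variable with probability density $f(x)$, the analogous description for entropy, which in this case represents the number of bits necessary to quantize a signal to a desired accuracy, is given by

$$h(X) = -\int f(x) \log_2 f(x)\,dx$$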

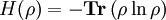
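The discrete Shannon entropy is straightforward to compute. The following is a minimal sketch, assuming the standard base-2 form H = -Σ p log2 p so that the result is measured in bits; the function name `shannon_entropy_bits` and the example distributions are illustrative assumptions.

```python
import math


def shannon_entropy_bits(probabilities):
    """Return -sum(p * log2 p) in bits; outcomes with p = 0 contribute nothing."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


fair_coin = shannon_entropy_bits([0.5, 0.5])    # one full bit of uncertainty
biased_coin = shannon_entropy_bits([0.9, 0.1])  # less than one bit
four_sided = shannon_entropy_bits([0.25] * 4)   # two bits
print(fair_coin, biased_coin, four_sided)
```

A fair coin carries more entropy than a biased one because its outcome is harder to predict, which mirrors the coin-flipping example used for the Boltzmann entropy.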

Entropy in Quantum Information Theory (Von Neumann Entropy)

In entangled systems, a useful quantity is the Von Neumann Entropy, defined (for a system with density matrix $\rho$) by

$$S(\rho) = -\mathrm{Tr}(\rho \ln \rho)$$

where Tr() indicates taking the Trace of a matrix (the sum of the diagonal elements). This is a useful measure of entanglement, which is zero for a pure state, and maximal for a fully mixed state.
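The pure-state and fully-mixed limits can be checked for a single qubit. This is a minimal sketch, assuming the standard form S = -Tr(ρ ln ρ), which equals -Σ λ ln λ over the eigenvalues λ of ρ. It is restricted to 2×2 density matrices so that the eigenvalues follow from the trace and determinant; the function names are hypothetical.

```python
import math


def qubit_eigenvalues(rho):
    """Eigenvalues of a 2x2 Hermitian matrix given as nested lists."""
    (a, b), (c, d) = rho
    trace = (a + d).real
    det = (a * d - b * c).real
    # Clamp at zero to guard against tiny negative values from rounding.
    disc = math.sqrt(max(trace * trace - 4.0 * det, 0.0))
    return ((trace + disc) / 2.0, (trace - disc) / 2.0)


def von_neumann_entropy(rho):
    """Return -sum(lam * ln lam) over the non-zero eigenvalues of rho."""
    return -sum(lam * math.log(lam) for lam in qubit_eigenvalues(rho) if lam > 1e-12)


pure_state = [[1.0, 0.0], [0.0, 0.0]]   # |0><0|
plus_state = [[0.5, 0.5], [0.5, 0.5]]   # |+><+|, also pure
fully_mixed = [[0.5, 0.0], [0.0, 0.5]]  # identity / 2

print(von_neumann_entropy(pure_state))
print(von_neumann_entropy(plus_state))
print(von_neumann_entropy(fully_mixed))
```

Both pure states give zero entropy, while the fully mixed qubit gives ln 2, the maximum for a two-level system. For larger systems a general eigenvalue routine would be needed in place of the 2×2 formula.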

See also

References

Categories: [Physics] [Thermodynamics] [Second Law of Thermodynamics]

Last modified: 02/10/2023 15:02:58

Source: https://www.conservapedia.com/Entropy | License: CC BY-SA 3.0